A/B testing Mailchimp campaigns is not about guessing which subject line “feels better.” It is about turning one campaign into a controlled learning system, so every send teaches you something useful about your audience.

Mailchimp lets you test variables such as subject line, From name, content, and send time, with winners chosen by metrics like opens, clicks, or revenue depending on your setup and goal. The key is not just knowing where the button is. The key is knowing what to test, why it matters, and how to avoid fake wins that do not improve your actual business results.

Article Outline

This guide is organized into seven parts:

- Why A/B Testing In Mailchimp Matters

- The Mailchimp A/B Testing Framework

- Core Components Of A Strong Test

- Professional Implementation In Mailchimp

- Statistics And Data

- Reading Results Without Fooling Yourself

- FAQ And Final Optimization Checklist

Why A/B Testing In Mailchimp Matters

Email still earns its place because it is measurable, repeatable, and owned. Recent Litmus research found that many companies see email ROI between $10 and $36 for every $1 spent, which makes small improvements in campaign performance worth taking seriously. When you improve one repeatable email decision, that insight can compound across newsletters, launches, promotions, automations, and lifecycle campaigns.

Mailchimp’s A/B testing feature is useful because it gives everyday marketers a structured way to compare campaign variations without building a testing system from scratch. Mailchimp explains that email A/B tests can compare one variable, such as subject line, From name, content, or send time, and send variations to different subscriber groups before identifying a winner based on performance data. That matters because a “better” email is not always the email the team likes most; it is the one your audience actually responds to.

The Mailchimp A/B Testing Framework

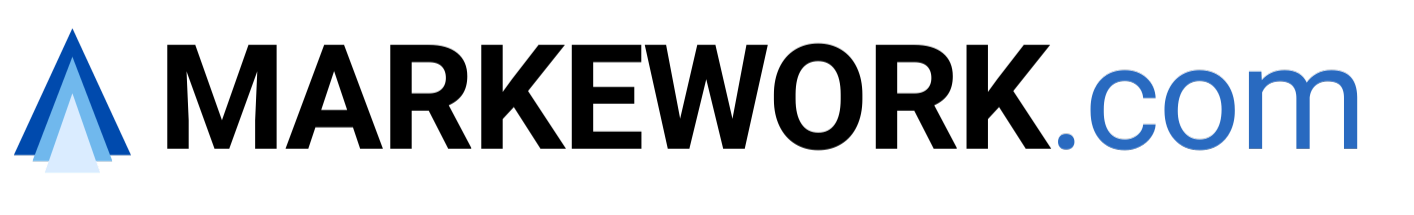

A strong Mailchimp A/B testing workflow starts before you open the campaign builder. You need a clear goal, a specific hypothesis, one variable, a meaningful audience, and a decision rule before the test goes live. Without those pieces, the test may still produce a winner, but the winner may not teach you anything useful.

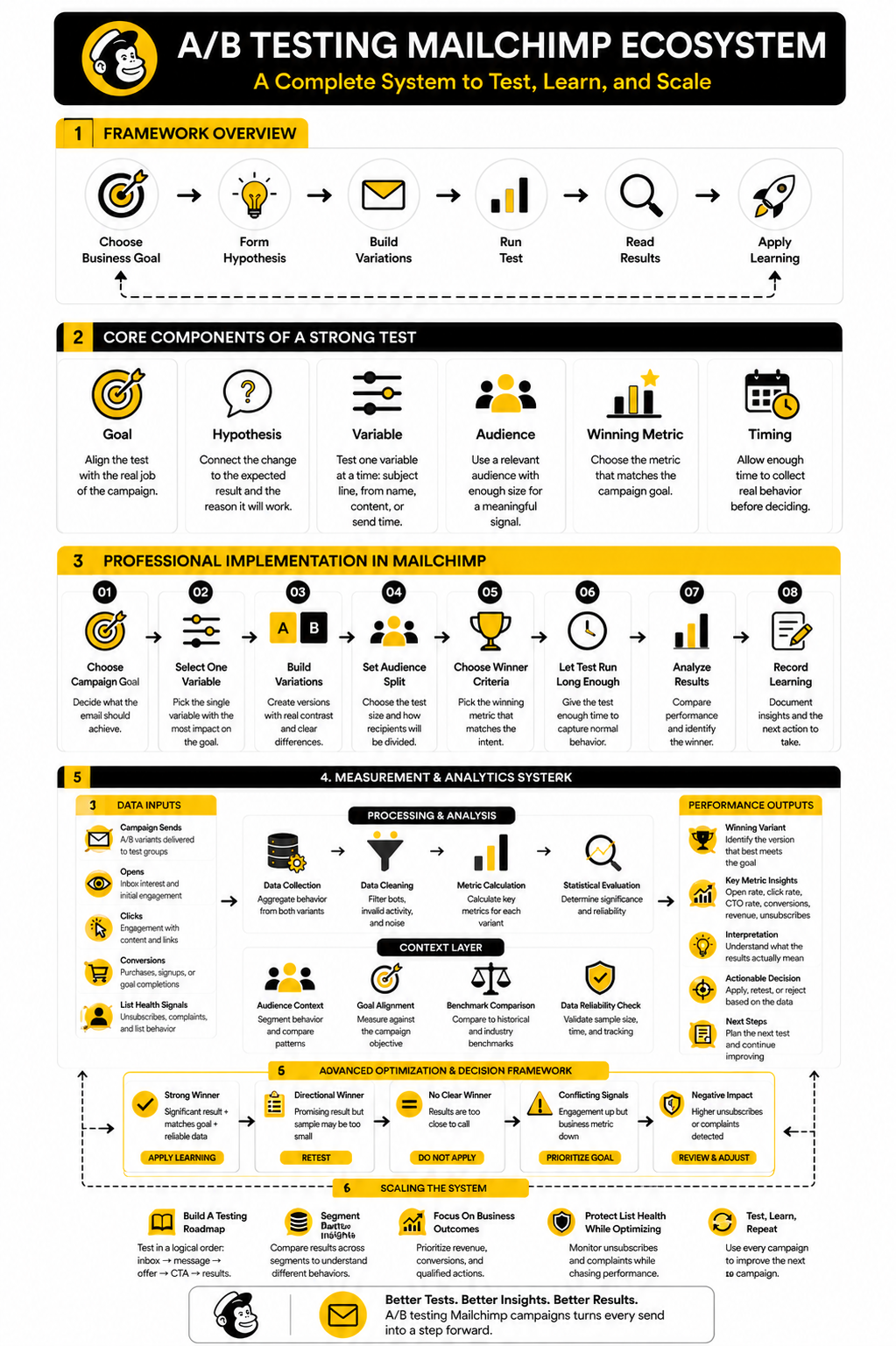

Think of the framework as a simple loop: choose the business goal, form the hypothesis, build the variations, run the test, read the result, then apply the learning to the next campaign. Mailchimp’s own setup flow follows a similar sequence by having you create the test, choose recipients, select the variable, configure the setup, add content, and confirm the campaign before sending. The professional difference is that you do not treat those steps as admin work; you treat them as the guardrails that keep your test honest.

Core Components Of A Strong Test

A good Mailchimp A/B test is built around one clean decision. You are not trying to redesign the entire campaign, rewrite the whole offer, change the audience, and test a new send time at once. That creates noise, and noise is where bad marketing decisions hide.

Start with the question you actually need answered. For example, “Does a benefit-led subject line get more qualified clicks than a curiosity-led subject line?” is much stronger than “Which email is better?” The first question gives you a practical next step after the test ends, while the second leaves you staring at numbers without knowing what to do next.

The Goal

Your goal decides the test. If the campaign is meant to drive traffic, clicks matter more than opens. If the campaign is meant to sell, revenue or downstream conversion matters more than a subject line win.

This is where many marketers get Mailchimp A/B testing wrong. They pick the easiest metric instead of the most relevant one. Opens can still help when you are testing subject lines or From names, but they should not be treated as the final truth when the real business goal happens after the email is opened.

The Hypothesis

A hypothesis is a simple statement that connects the change you are making to the result you expect. It should sound practical, not academic. You might say, “A clearer discount subject line will increase clicks because subscribers will understand the offer before opening.”

That sentence forces discipline. It tells you what to change, what to measure, and why the change might work. Without that clarity, you are not really testing; you are just sending variations and hoping one performs better.

The Variable

Mailchimp A/B testing works best when you isolate one variable at a time. That variable might be the subject line, From name, email content, or send time. If you change more than one major thing, you may get a winner, but you will not know what caused the win.

This matters more than most people think. A subject line test should not also include a different offer inside the email. A content test should not quietly change the CTA, layout, and discount at the same time unless you are intentionally testing a completely different creative direction.

The Audience

Your test audience needs to be large enough and relevant enough to produce a useful signal. A tiny list can still teach you something, but you should treat the result as directional rather than final. The smaller the sample, the easier it is for timing, inbox behavior, or a few unusually active subscribers to distort the result.

Segment quality also matters. Testing a campaign on cold, inactive contacts and applying the result to your best buyers is a mistake. The closer your test group is to the audience you care about, the more useful the result becomes.
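
To put a rough number on "large enough," here is a minimal Python sketch that estimates how many recipients each variation would need to detect a given lift. It uses the standard normal-approximation formula for comparing two proportions; it is not something Mailchimp calculates for you. The function name, the 5% significance level, the 80% power default, and the example rates are all assumptions chosen for illustration.

```python
import math
from statistics import NormalDist


def sample_size_per_variation(baseline_rate, expected_rate, alpha=0.05, power=0.80):
    """Approximate recipients needed in EACH variation to detect the lift.

    Uses the normal-approximation formula for a two-sided comparison of
    two proportions. Rates are fractions, e.g. 0.03 for a 3% click rate.
    """
    for rate in (baseline_rate, expected_rate):
        if not 0 < rate < 1:
            raise ValueError("rates must be between 0 and 1 (exclusive)")
    if baseline_rate == expected_rate:
        raise ValueError("expected_rate must differ from baseline_rate")

    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha=0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for power=0.80
    average_rate = (baseline_rate + expected_rate) / 2

    # Variability assuming no real difference, and assuming the expected one.
    spread_if_no_difference = math.sqrt(2 * average_rate * (1 - average_rate))
    spread_if_real_difference = math.sqrt(
        baseline_rate * (1 - baseline_rate) + expected_rate * (1 - expected_rate)
    )
    numerator = (z_alpha * spread_if_no_difference
                 + z_beta * spread_if_real_difference) ** 2
    return math.ceil(numerator / (expected_rate - baseline_rate) ** 2)


if __name__ == "__main__":
    # Hypothetical scenario: 3% baseline click rate, hoping to reach 4%.
    print(sample_size_per_variation(0.03, 0.04))
```

With those hypothetical inputs, the estimate comes out at roughly five thousand recipients per variation, which is why results from small lists are better read as directional. Halving the expected lift pushes the required sample up sharply.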

The Winning Metric

The winning metric should match the job of the campaign. For a newsletter, click rate may be the cleanest measure of reader interest. For a sales campaign, revenue or conversion tracking gives you a stronger read than engagement alone.

Do not choose a winning metric because it makes the campaign look good. Choose it because it helps you make a better next decision. That is the whole point of a test.

Professional Implementation In Mailchimp

Once the strategy is clear, the execution should be boring in the best possible way. You are not looking for complexity. You are looking for a repeatable process that makes each Mailchimp A/B test easier to set up, easier to read, and easier to improve next time.

Mailchimp allows A/B tests to compare variables such as subject line, From name, content, and send time, while its A/B/n setup can test up to three variations of a single variable. That is enough for most email campaigns, especially when the goal is to learn one useful thing instead of creating a messy experiment that nobody can interpret later.

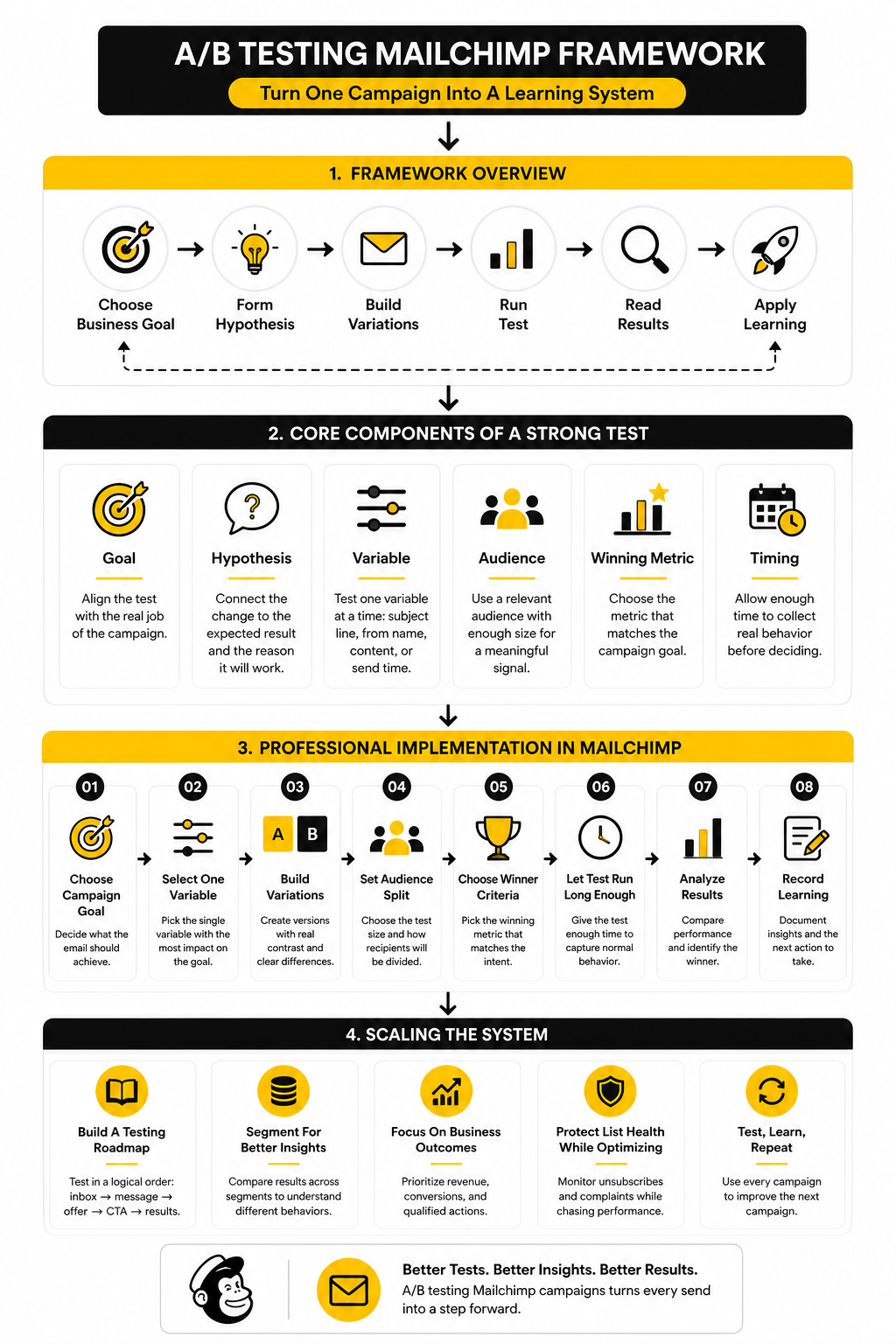

Step 1: Choose The Campaign Goal

Before touching the test settings, decide what the email is supposed to do. A product launch email, a newsletter, a webinar invite, and a reactivation campaign do not deserve the same success metric. The goal controls the entire setup.

For example, a newsletter may use clicks as the cleanest sign of reader interest, while a sales email should be judged closer to revenue or conversion when tracking is available. If you choose the wrong goal, the test can technically “win” while still pushing you toward the wrong marketing decision. That is why the campaign goal comes first.

Step 2: Select One Variable To Test

Next, choose the single variable that has the best chance of influencing that goal. If the issue is low visibility in the inbox, test the subject line or From name. If people open but do not act, test the body content, offer framing, or CTA section.

This is where discipline matters. Do not change the subject line, hero copy, layout, and CTA all in one standard A/B test unless you are intentionally comparing two complete creative directions. For normal optimization, keep the test narrow so the result gives you a usable lesson.

Step 3: Build Variations With Real Contrast

Weak variations create weak learning. “Save 10% today” versus “Today only: save 10%” may be too close to teach you anything meaningful. A stronger test compares different angles, such as urgency versus benefit, curiosity versus clarity, or personal sender versus brand sender.

That does not mean you should write gimmicky emails. It means each variation should represent a distinct strategic choice. If both versions are basically the same idea with slightly different wording, the test is not doing enough work.

Step 4: Set The Audience Split

Mailchimp requires A/B test campaigns to send to at least 10% of recipients, and send time tests are sent to 100% of the selected audience or segment. That gives you flexibility, but it also creates a decision: do you want to test on part of the audience and send the winner to the rest, or do you want every subscriber included in the test from the start?

For time-sensitive campaigns, sending the test to a small group and waiting too long can hurt momentum. For evergreen promotions or newsletters, a smaller test group can make sense because the winning version can still reach the remaining audience. The right split depends on the campaign’s urgency, list size, and how much confidence you need before declaring a winner.
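
The split arithmetic is simple enough to sanity-check before you commit to a percentage. The sketch below is a hypothetical helper, not a Mailchimp API call: it assumes the test group is divided evenly across variations and that everyone outside the test group receives the winner. Mailchimp's own rounding may differ slightly.

```python
def plan_split(audience_size, test_percent, variations=2):
    """Estimate group sizes for an A/B test split.

    test_percent is a whole number from 10 to 100, mirroring the minimum
    test size described above. variations is 2 or 3.
    """
    if audience_size <= 0:
        raise ValueError("audience_size must be positive")
    if not 10 <= test_percent <= 100:
        raise ValueError("test_percent must be between 10 and 100")
    if variations not in (2, 3):
        raise ValueError("variations must be 2 or 3")

    # Integer math avoids floating-point surprises on large lists.
    test_group = audience_size * test_percent // 100
    per_variation = test_group // variations
    tested_total = per_variation * variations
    return {
        "per_variation": per_variation,
        "tested_total": tested_total,
        "remaining_for_winner": audience_size - tested_total,
    }


if __name__ == "__main__":
    # Hypothetical list of 20,000 contacts with a 20% test group.
    print(plan_split(20_000, 20))
```

In this example each variation reaches 2,000 people and the winner goes to the remaining 16,000. If 2,000 per variation is too few to trust for your typical click rate, that is a signal to raise the test percentage or treat the result as directional.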

Step 5: Choose The Winner Criteria

Winner criteria should match the intent of the test. If you are testing subject lines, open rate may be useful, although privacy changes mean opens should be interpreted carefully. If you are testing email content, click rate usually tells you more because it measures action inside the message.

For ecommerce campaigns, revenue can be the stronger winner metric when tracking is configured properly. For lead generation, form submissions, booked calls, or qualified replies may matter more than raw clicks. The point is simple: do not let the default metric make the decision for you.

Step 6: Let The Test Run Long Enough

A test needs enough time to collect meaningful behavior. Some audiences click quickly, while others open emails throughout the day or even the next morning. Ending the test too early can reward the version that got fast reactions, not the version that produced the best overall outcome.

Mailchimp gives you timing controls during setup, but your business context still matters. A daily flash sale may require a shorter decision window. A normal newsletter or nurture email can usually afford more breathing room before you judge the result.

Step 7: Record The Learning

The most valuable part of an A/B test is not the winning version. It is the insight you can reuse. After every test, write down the audience, variable, hypothesis, result, and what you will do differently next time.

This does not need to be fancy. A simple spreadsheet or campaign notes document is enough. Over time, those notes become your internal playbook, and that is where A/B testing Mailchimp campaigns starts to create real leverage.
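
If you prefer a script to a hand-edited spreadsheet, the log can be as small as the sketch below. The field names follow the items listed above (audience, variable, hypothesis, result, next action), plus a campaign name; the class, the function, and the sample entry are hypothetical and only illustrate one way to structure the record.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ExperimentRecord:
    """One row in the testing log."""
    campaign: str
    audience: str
    variable: str
    hypothesis: str
    result: str
    next_action: str


def records_to_csv(records):
    """Render records as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer,
        fieldnames=[field.name for field in fields(ExperimentRecord)],
        lineterminator="\n",
    )
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


if __name__ == "__main__":
    # Hypothetical entry, for illustration only.
    entry = ExperimentRecord(
        campaign="Spring newsletter",
        audience="Engaged subscribers",
        variable="Subject line",
        hypothesis="A benefit-led subject line will increase clicks",
        result="Benefit-led version won on click rate",
        next_action="Reuse the benefit-led angle in the next newsletter",
    )
    print(records_to_csv([entry]))
```

The output can be pasted into any spreadsheet tool or saved to a `.csv` file. The important part is that the columns never change, so results stay comparable from one test to the next.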

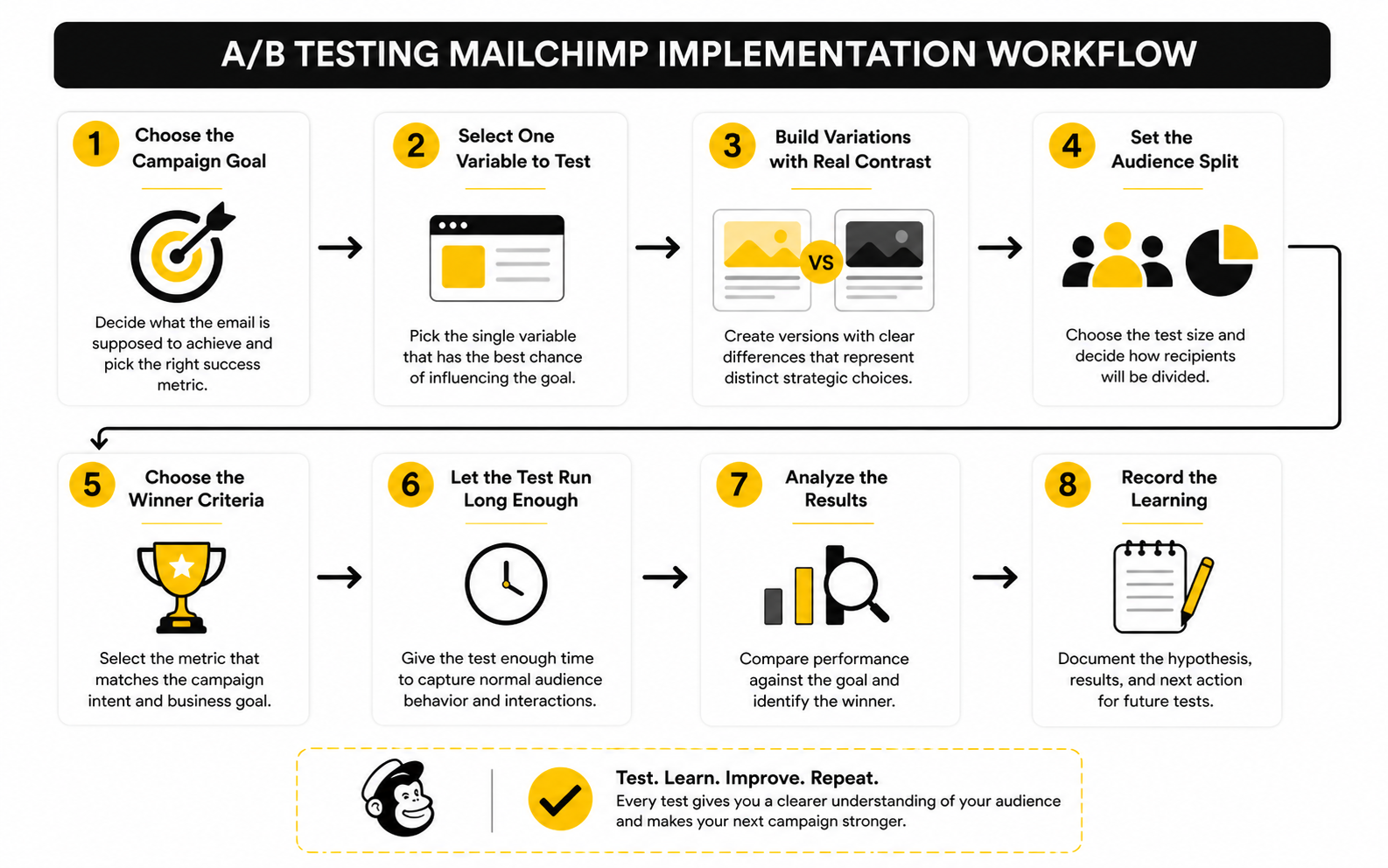

Statistics And Data

A/B testing Mailchimp campaigns becomes useful when you stop treating the report as a scoreboard and start treating it as a decision system. The numbers are not there to make you feel good or bad about a campaign. They are there to show which part of the email journey needs work next.

The main performance signals are opens, clicks, click-to-open rate, conversions, revenue, unsubscribes, and complaints. Each one answers a different question. If you mix them together without context, you can easily optimize the wrong thing.

Open Rate Shows Inbox Interest, Not Full Campaign Quality

Open rate can help when you are testing subject lines, preview text, or From names. It tells you whether the email created enough interest for someone to open it. That is useful, but it is not the whole story.

The problem is that open tracking has become less reliable because privacy features can inflate or obscure opens. Mailchimp notes that Apple Mail Privacy Protection affects opens and open-related metrics, while click activity is still reported for subscribers using Apple Mail. So if an A/B test wins on opens but fails to produce clicks, treat that win carefully.

Click Rate Shows Message Strength

Click rate is usually a stronger signal when the email’s job is to move people toward an action. It tells you whether the content, offer, CTA, and link placement gave readers enough reason to leave the inbox. For most practical campaigns, this is where the test starts becoming commercially useful.

When you are testing content inside the email, click rate often matters more than open rate. A subject line can win attention, but the body has to earn action. If version A gets more opens and version B gets more clicks, the better choice depends on the campaign goal, not the vanity metric.

Click-To-Open Rate Helps Diagnose The Gap

Click-to-open rate compares clicks against opens, which makes it useful for diagnosing what happens after the email is opened. If open rate is strong but click-to-open rate is weak, the inbox promise may not match the message. If both open rate and click-to-open rate are weak, the campaign may need a stronger angle from the beginning.

This metric is especially helpful when testing email content rather than subject lines. It narrows the focus to people who had at least some measured exposure to the message. That does not make it perfect, but it can make the analysis sharper than looking at clicks alone.
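
As a quick worked example, the sketch below computes the three engagement rates from raw counts. It assumes open rate and click rate are measured against delivered emails and that click-to-open rate divides unique clicks by unique opens; check how your own reports define each figure before comparing. The function name and the sample numbers are hypothetical.

```python
def engagement_rates(delivered, unique_opens, unique_clicks):
    """Return open rate, click rate, and click-to-open rate as fractions."""
    if delivered <= 0:
        raise ValueError("delivered must be positive")
    if unique_opens < 0 or unique_clicks < 0:
        raise ValueError("counts cannot be negative")

    open_rate = unique_opens / delivered
    click_rate = unique_clicks / delivered
    # Guard against division by zero when nobody opened.
    click_to_open_rate = unique_clicks / unique_opens if unique_opens else 0.0
    return {
        "open_rate": open_rate,
        "click_rate": click_rate,
        "click_to_open_rate": click_to_open_rate,
    }


if __name__ == "__main__":
    # Hypothetical variation: 5,000 delivered, 1,500 opens, 150 clicks.
    for name, value in engagement_rates(5_000, 1_500, 150).items():
        print(f"{name}: {value:.1%}")
```

Here a 30% open rate and a 3% click rate produce a 10% click-to-open rate. If a competing variation had the same open rate but a noticeably higher click-to-open rate, the difference would point at the body content rather than the subject line.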

Revenue And Conversions Should Settle Commercial Tests

If the campaign is built to sell, revenue matters. If the campaign is built to generate leads, booked calls, demo requests, form submissions, or qualified replies matter. Engagement is useful, but business outcomes should carry more weight when the test is tied to money.

This is where A/B testing Mailchimp campaigns can expose a common trap. A “friendly” version may get more clicks, while a more direct version may produce more buyers. In that case, the better email is not the one with the prettiest engagement chart; it is the one that moves the business goal.

Benchmarks Are Context, Not Targets

Benchmarks help you understand whether your campaign is in a reasonable range, but they should not become your main goal. Mailchimp’s benchmark data is useful because it compares campaigns by industry and only includes campaigns sent to at least 1,000 subscribers. That gives you a broad reference point, not a personalized performance standard.

Your best benchmark is your own historical performance by audience, campaign type, and offer. A weekly newsletter, a launch email, and an abandoned cart message should not be judged by the same standard. The real question is not “Did we beat the internet average?” The better question is “Did we improve against our own baseline in a way we can repeat?”

What The Data Should Make You Do Next

Every test result should lead to one of three actions. You either apply the winner, run a follow-up test, or reject the result because the data is too weak to trust. Anything else usually turns into overthinking.

Use this simple decision filter after each test:

- If the winner matches the goal and the sample is strong, apply the learning to the next relevant campaign.

- If the winner is directionally better but the sample is small, treat it as a hypothesis for another test.

- If the engagement metric and business metric disagree, prioritize the metric closest to revenue or the campaign’s real objective.

- If the result is basically tied, do not invent a lesson just to feel productive.

- If unsubscribes or complaints rise sharply, review the promise, targeting, and frequency before scaling the winning version.

This is the difference between testing and tinkering. Tinkering changes things because the dashboard moved. Testing turns the dashboard into a smarter next move.
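
The decision filter above can also be written as a small function, which is handy if you review tests as a team and want everyone to apply the same rules. This is only a sketch: the flag names are hypothetical, you still have to judge each flag yourself, and the order in which the rules are checked (list health first, ties second) is an assumption rather than something the filter prescribes.

```python
def next_action(*, winner_matches_goal, sample_is_strong, is_tie=False,
                metrics_disagree=False, complaints_spiked=False):
    """Map a test outcome to a recommended next step."""
    if complaints_spiked:
        # Protect list health before scaling anything.
        return "review promise, targeting, and frequency before scaling"
    if is_tie:
        return "record a tie and do not invent a lesson"
    if metrics_disagree:
        return "prioritize the metric closest to revenue or the real objective"
    if winner_matches_goal and sample_is_strong:
        return "apply the learning to the next relevant campaign"
    if not sample_is_strong:
        return "treat the result as a hypothesis for another test"
    # Strong sample, but the winning metric does not match the goal.
    return "reject the result and retest with a metric that fits the goal"


if __name__ == "__main__":
    print(next_action(winner_matches_goal=True, sample_is_strong=False))
```

Keeping the rules in one place makes it harder to quietly bend them when a favorite variation loses.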

Reading Results Without Fooling Yourself

The dangerous part of A/B testing Mailchimp campaigns is not the setup. It is the interpretation. A clean-looking winner can still be a weak decision if the sample is small, the metric is mismatched, or the test was stopped too early.

This is where experienced marketers slow down. They do not just ask, “Which version won?” They ask, “Is this result strong enough to change how we send future campaigns?” That second question protects you from chasing noise.

Statistical Significance Is Not The Same As Business Significance

Statistical significance helps you judge whether the result is likely to be real rather than random. Business significance asks whether the difference is large enough to matter. You need both, because a tiny lift can be statistically significant and still be commercially pointless.

For example, a subject line that improves clicks by a tiny amount may not deserve a full strategy change. But a content angle that produces more qualified buyers, even from a modest test, may deserve deeper follow-up. The smartest move is to treat the first result as evidence, not a final law.
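
One common way to check the statistical side is a two-proportion z-test, sketched below in Python. This is an illustrative calculation you would run yourself on exported counts; it is not a description of how Mailchimp picks its winner. The function name and the sample figures are hypothetical, and the normal approximation it relies on is less trustworthy when click counts are very low.

```python
import math
from statistics import NormalDist


def compare_variations(clicks_a, sent_a, clicks_b, sent_b):
    """Two-sided two-proportion z-test comparing variation B against A."""
    if sent_a <= 0 or sent_b <= 0:
        raise ValueError("sent counts must be positive")

    rate_a = clicks_a / sent_a
    rate_b = clicks_b / sent_b
    pooled = (clicks_a + clicks_b) / (sent_a + sent_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))

    if std_error == 0:
        # Both rates are 0% or 100%, so there is no difference to test.
        z_score, p_value = 0.0, 1.0
    else:
        z_score = (rate_b - rate_a) / std_error
        p_value = 2 * (1 - NormalDist().cdf(abs(z_score)))

    return {
        "absolute_lift": rate_b - rate_a,  # the business-significance input
        "z_score": z_score,
        "p_value": p_value,                # the statistical-significance input
    }


if __name__ == "__main__":
    # Hypothetical test: A gets 120 clicks of 4,000; B gets 156 of 4,000.
    print(compare_variations(120, 4_000, 156, 4_000))
```

In this hypothetical case the p-value falls below the conventional 0.05 threshold, so the difference is unlikely to be pure noise. Whether a lift of just under one percentage point justifies changing your approach is the separate business question.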

Small Lists Need A Different Testing Mindset

If your list is small, do not pretend every test can produce a perfect answer. A small audience can still reveal useful patterns, but you need to be more careful with big conclusions. In that situation, A/B testing Mailchimp campaigns should be used to collect directional signals across several campaigns, not to crown one eternal winner after one send.

For smaller lists, test bigger differences. A bold positioning angle versus a soft educational angle will usually teach you more than two nearly identical subject lines. The smaller the sample, the more important it is that the variations are meaningfully different.

Avoid The Early Winner Trap

Early results can be seductive. One version pulls ahead after the first hour, the dashboard looks obvious, and the temptation is to call the race. That is exactly how teams end up scaling the wrong message.

Subscriber behavior does not arrive evenly. Some people open immediately, some check email after work, and some click the next day. Unless the campaign is genuinely urgent, give the test enough time to capture a fairer picture before deciding what won.

Watch For Segment Distortion

A test result is only as useful as the audience behind it. If your test group contains more loyal buyers, newer subscribers, or inactive contacts than the rest of the list, the result may not transfer cleanly. This is why segmentation should be treated as part of the test design, not as an afterthought.

When possible, compare results by meaningful segments after the campaign runs. New subscribers may respond to clarity, while long-time buyers may respond to exclusivity. That does not mean you need a complicated setup every time, but it does mean one average result can hide several different audience behaviors.

Do Not Optimize Yourself Into A Worse Brand

A/B testing is powerful, but it can reward short-term behavior if you let it. Aggressive subject lines may lift opens. Heavy urgency may lift clicks. But if those tactics also increase unsubscribes, complaints, or discount dependency, the “winner” may be quietly weakening your list.

This is especially important for brands that send often. You are not just optimizing one campaign; you are training your audience on what to expect from you. The best test result is not the one that gets the loudest reaction today, but the one that improves performance without damaging trust.

Know When To Move Beyond Mailchimp’s Built-In Test

Mailchimp’s built-in A/B testing is a strong fit for campaign-level decisions. It is practical for testing subject lines, sender names, content blocks, and send times. For many businesses, that is enough to make better email decisions every week.

But once you start testing full funnels, multi-step automations, landing pages, ads, and sales follow-up, the test may need to extend beyond the email itself. That is where a broader CRM or funnel system can help connect email behavior to pipeline, booked calls, and revenue. If your business has moved into that stage, tools like GoHighLevel, ClickFunnels, or Systeme.io may be worth comparing alongside your Mailchimp workflow.

Build A Testing Roadmap Instead Of Random Tests

Random tests create random learning. A roadmap creates momentum. Instead of asking, “What should we test this week?” decide which customer decision points matter most and test them in order.

A simple roadmap might start with inbox visibility, then move to offer clarity, then CTA strength, then landing page alignment. That order makes sense because each step depends on the previous one. When you plan tests this way, every campaign becomes part of a bigger learning system instead of a one-off experiment.

FAQ And Final Optimization Checklist

A strong email testing system is not built from one big win. It is built from small, clean decisions that keep improving how you understand your audience. Once you have the process in place, A/B testing Mailchimp campaigns becomes less about guessing and more about building a repeatable marketing advantage.

The final system is simple: test one meaningful variable, choose the right metric, read the result in context, and apply the learning carefully. Then do it again. That is where the compounding starts.

Frequently Asked Questions

What Is A/B Testing In Mailchimp?

A/B testing in Mailchimp is a way to compare different versions of an email campaign to see which one performs better. You can test variables such as subject lines, From names, content, and send times. The goal is to make future campaigns better by using real audience behavior instead of opinions.

What Should I Test First In Mailchimp?

Start with the variable closest to your biggest problem. If people are not opening, test the subject line or sender name. If people open but do not click, test the email content, offer framing, CTA, or layout.

How Many Variables Should I Test At Once?

For a normal A/B test, test one variable at a time. That keeps the result clean and easier to understand. If you change several things at once, you may see a winner, but you will not know what actually caused the result.

Is Open Rate Still Useful?

Open rate is useful, but it should be handled carefully. Privacy changes can affect open tracking, so opens are best used as a directional signal rather than the final measure of campaign quality. Clicks, conversions, purchases, and replies usually tell you more about real intent.

What Is The Best Winning Metric For A Mailchimp A/B Test?

The best winning metric depends on the campaign goal. Use open rate for inbox-level tests, click rate for content and CTA tests, and revenue or conversions for sales-focused campaigns. Do not let a vanity metric decide a business question.

How Long Should A Mailchimp A/B Test Run?

Let the test run long enough to capture normal audience behavior. A short flash sale may need a faster decision, while a standard newsletter or promotional campaign can usually wait longer. The goal is to avoid calling a winner before enough subscribers have had a fair chance to engage.

Can Small Lists Run Useful A/B Tests?

Yes, but small lists need realistic expectations. The result may be directional rather than definitive. If your list is small, test bigger strategic differences and look for patterns across multiple campaigns instead of overreacting to one result.

What Is A Good A/B Test Hypothesis?

A good hypothesis connects a specific change to a specific expected result. For example, “A clearer benefit-led subject line will increase clicks because subscribers will understand the value faster.” That gives the test a purpose and makes the result easier to interpret.

Should I Use Mailchimp A/B Testing For Automations?

A/B testing can be useful for automations, but you need to think beyond one campaign send. Automated emails often run continuously, so you should judge performance over enough time and enough contacts. For high-value automations, prioritize conversion quality over quick engagement wins.

What Is The Biggest Mistake With A/B Testing Mailchimp Campaigns?

The biggest mistake is testing randomly. Random tests create random learning. The better approach is to build a testing roadmap around inbox performance, message clarity, offer strength, CTA performance, and revenue impact.

When Should I Ignore A Winning Result?

Ignore or downgrade a winning result when the sample is too small, the difference is tiny, the metric does not match the goal, or the win comes with higher unsubscribes and complaints. A test result should help you make a better decision, not force you into a bad one. If the data is weak, treat it as a clue for the next test.

How Do I Turn A/B Testing Into A Repeatable System?

Keep a simple testing log. Record the campaign, audience, hypothesis, variable, winner, key metrics, and next action. Over time, this becomes your internal playbook for better email decisions.
