AI copy stopped being a niche experiment the moment teams realized it could remove the slowest part of writing: getting from a blank page to a usable draft. What changed next was more important. The winners were not the people who let a model write everything. They were the people who learned how to direct it, shape it, fact-check it, and turn rough output into copy that actually sells.

That is the real conversation around ai copy now. It is not whether the tools can produce words. They obviously can. The real question is how to use them in a way that improves speed, keeps your standards high, protects trust, and still sounds like a real brand talking to a real customer. Google’s public guidance makes that line clear: useful content can use AI, but scaled, low-value content can still fail hard in search.

Organizations are moving in this direction fast, which is why the gap between “using AI” and “using it well” matters so much. McKinsey found that 65% of respondents said their organizations regularly use generative AI, and marketing and sales remain one of the most common functions for adoption. At the same time, trust is fragile: Adobe reported that a third of customers say they would disengage after discovering content is AI-generated, which means ai copy only works when the output still feels useful, credible, and human.

Article Outline

- Why AI Copy Matters Now

- A Practical Framework for AI Copy

- The Core Building Blocks of High-Performing AI Copy

- How Professionals Turn Drafts Into Revenue Assets

- Where AI Copy Breaks Down and How to Fix It

- The Future of AI Copy and the Questions That Matter Most

Why AI Copy Matters Now

The biggest advantage of ai copy is not magic. It is leverage. A good system lets one strategist, founder, marketer, or operator produce more landing page variations, email angles, ad hooks, follow-up sequences, and content drafts than they could manually create in the same amount of time.

That matters because content volume alone is no longer enough. Search, social, paid media, and lifecycle channels are all crowded, and the generic middle is getting crushed. HubSpot’s recent marketing data shows how broad adoption has become: over 80% of marketers report using AI for content creation. Basic usage is no longer a differentiator, and simply publishing faster will not separate you from competitors.

What separates strong ai copy from forgettable output is judgment. The model can give you speed, structure, and options. You still need positioning, proof, voice, and editorial discipline. That is why the best workflows look less like “press a button and publish” and more like “brief, generate, select, refine, verify, and deploy.”

There is also a practical reason this topic matters right now: the tools themselves are getting better at following instructions. OpenAI’s GPT-4.1 release notes and prompting guidance both highlight gains in instruction following and long-context use, which makes it easier to feed a model brand voice notes, offer details, customer objections, and existing assets in one workflow. Better models do not remove the need for strategy, but they do make a disciplined ai copy process far more useful than it was even a year ago.

A Practical Framework for AI Copy

The easiest way to get weak ai copy is to start with the prompt instead of the problem. Professionals do the opposite. They begin by defining the job the copy needs to do, who it needs to move, what resistance it must overcome, and what action should happen next. Once that is clear, AI becomes a drafting engine instead of a guessing machine.

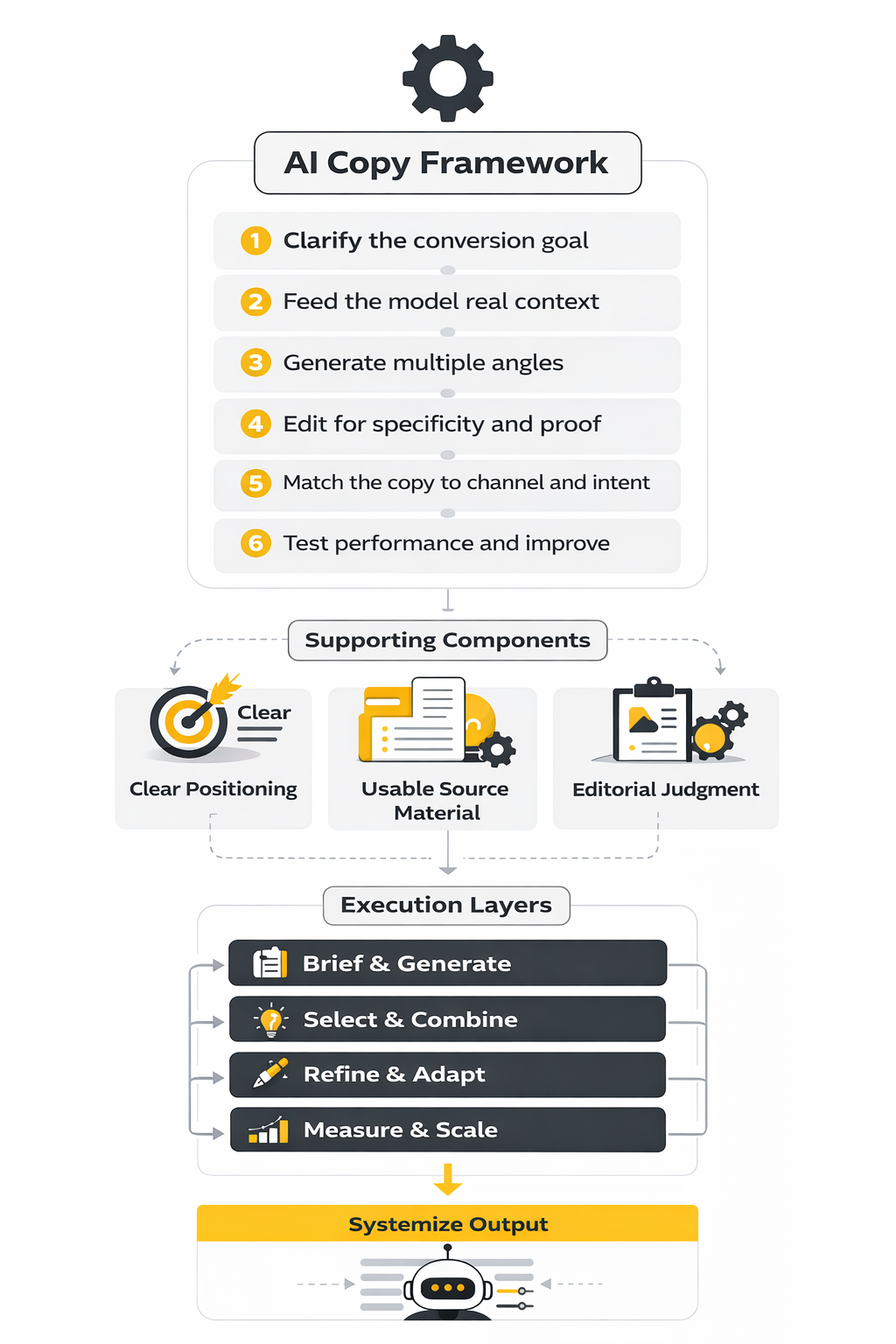

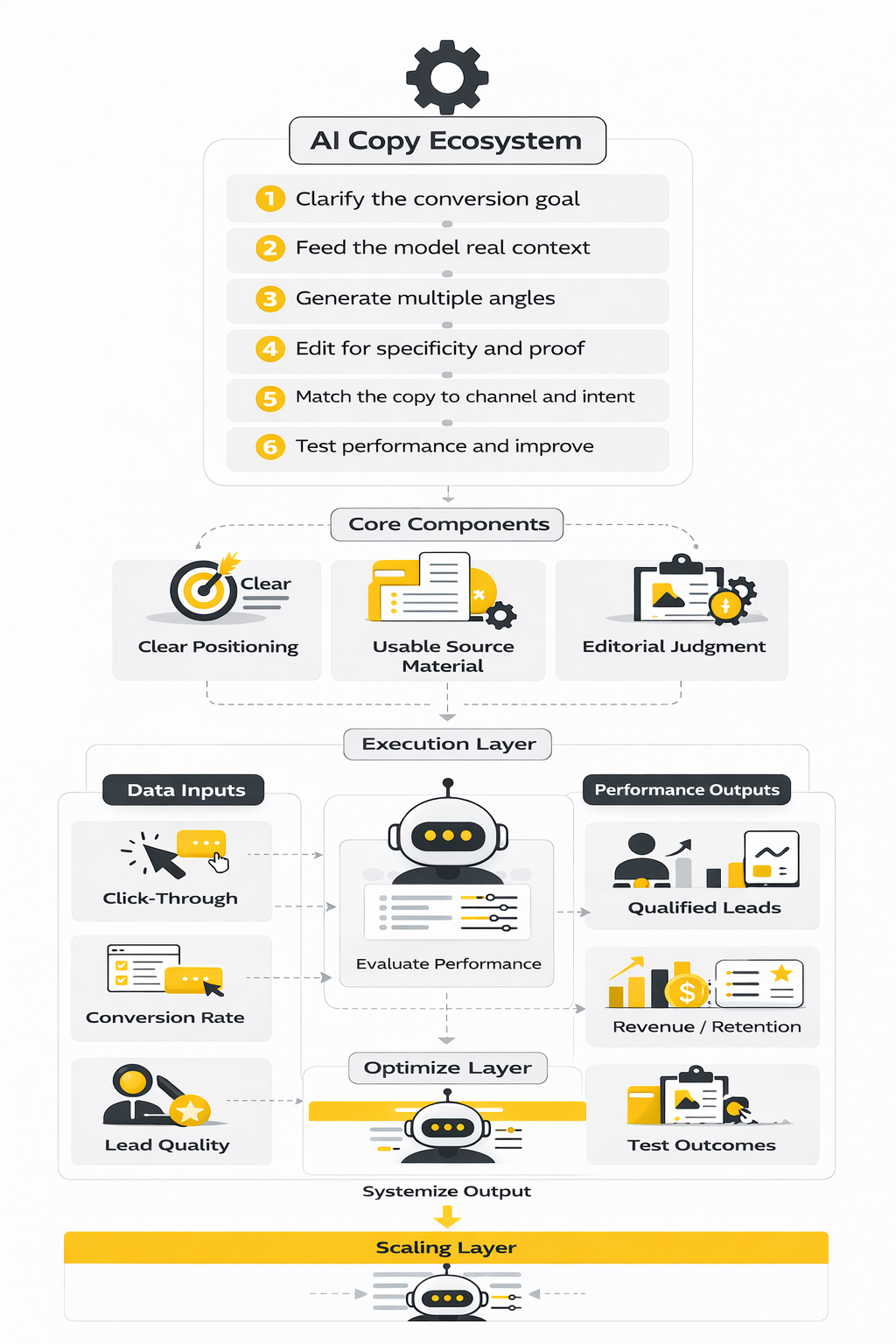

A simple framework works well here:

- Clarify the conversion goal

- Feed the model real context

- Generate multiple angles

- Edit for specificity and proof

- Match the copy to channel and intent

- Test performance and improve

This framework matters because ai copy usually fails in predictable ways. It becomes too polished, too broad, too repetitive, or too eager to sound smart. The fix is not “better vibes.” The fix is better inputs, stronger constraints, and a tighter review process.

In practice, that often means pairing a writing model with the rest of your operating stack. If the goal is lead capture and follow-up, a platform like GoHighLevel AI can connect copy, CRM actions, and downstream automation in one workflow. If the goal is conversational support or on-site qualification, Chatbase can turn the same messaging logic into a customer-facing assistant. The key is not the tool itself. The key is making sure the copy is tied to a real business outcome instead of sitting in a document waiting to be used.

That is where the rest of this article goes next. We will move from the high-level idea of ai copy into the specific building blocks that make it persuasive, controlled, and worth publishing.

The Core Building Blocks of High-Performing AI Copy

Strong ai copy is not built from one clever prompt. It comes from a handful of inputs that dramatically change the quality of the output. When those inputs are weak, the result usually sounds polished on the surface but empty underneath. When they are strong, the copy starts sounding like it actually belongs to a real business with a real point of view.

The first building block is clear positioning. A model cannot invent a sharp market position if the business itself has not defined one. That matters even more now because AI is flooding every channel with similar-sounding content, and HubSpot’s latest marketing reporting keeps pointing back to the same practical truth: distinct brand perspective is becoming more valuable, not less, in an AI-heavy market. (hubspot.com)

The second building block is usable source material. AI copy gets better when you feed it customer interviews, call transcripts, product docs, sales notes, reviews, founder language, support tickets, and existing high-converting assets. OpenAI’s prompt guidance keeps reinforcing the same pattern: better instructions and better context produce better outputs. That sounds obvious, but it is still where most teams cut corners. (openai.com)

The third building block is editorial judgment. This is the layer that decides what is too vague, what feels inflated, what needs proof, and what does not sound like the brand. Google’s search documentation is useful here because it frames the standard the right way: content has to be helpful, reliable, and built for people rather than churned out at scale with no added value. That is as true for conversion copy as it is for search content. (developers.google.com)

Positioning Comes Before Prompting

A lot of people start their ai copy workflow by asking the model to write a landing page, email, or ad. That is backwards. The smarter move is to define the offer, the audience, the problem, the stakes, the desired action, and the reason someone should trust you before the model writes a single line.

This is where weak brands usually get exposed. If five competitors can all say the same sentence, it is not positioning. It is filler. AI tends to amplify that problem because it naturally reaches for the most statistically common phrasing unless you force it toward sharper language and more specific claims.

That is why ai copy improves fast when you hand the model structured inputs such as:

- who the offer is for

- who it is not for

- what outcome matters most

- what objections show up first

- what proof already exists

- what tone the brand should avoid

Once that foundation is clear, the model can help you explore angles instead of manufacturing identity. That is a much better use of the tool.

Voice Is a System, Not a Vibe

Brand voice gets talked about like it is some mystical creative trait. In practice, it is much more operational than that. Voice is the repeatable set of choices a brand makes around tone, vocabulary, pacing, sentence length, emotional intensity, and how directly it speaks.

That matters because ai copy will default to median internet language unless you interrupt that pattern. The model is good at sounding acceptable. It is not automatically good at sounding like you. If your brand voice is “clear, blunt, practical, no hype, no clichés, no fake urgency,” that has to be stated directly and reinforced with examples.

A useful way to manage this is to create a voice brief with:

- words and phrases the brand uses often

- words and phrases the brand avoids

- examples of great past copy

- examples of copy that sounds wrong

- rules for sentence rhythm, formatting, and calls to action
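Part of a voice brief can be checked mechanically before a human reads the draft. The Python sketch below assumes a hypothetical `VoiceBrief` structure holding the phrases the brand uses and the phrases it avoids. It flags every avoided phrase that appears in a draft so an editor can decide what to change. It does not judge rhythm, tone, or emotional intensity, which still need a human pass.

```python
import re
from dataclasses import dataclass, field


@dataclass
class VoiceBrief:
    """Hypothetical voice brief: phrases the brand uses and phrases it avoids."""
    preferred: list[str] = field(default_factory=list)
    avoided: list[str] = field(default_factory=list)


def flag_avoided_phrases(draft: str, brief: VoiceBrief) -> list[str]:
    """Return each avoided phrase found in the draft (case-insensitive, whole words only)."""
    hits = []
    for phrase in brief.avoided:
        # \b boundaries stop "hype" from matching inside "hyperlink"
        pattern = r"\b" + re.escape(phrase) + r"\b"
        if re.search(pattern, draft, flags=re.IGNORECASE):
            hits.append(phrase)
    return hits


brief = VoiceBrief(
    preferred=["booked calls", "plain answer"],
    avoided=["unlock growth", "streamline your workflow", "game-changing"],
)
draft = "Unlock growth with a game-changing platform built for busy teams."
print(flag_avoided_phrases(draft, brief))  # → ['unlock growth', 'game-changing']
```

A flagged phrase is a prompt for the editor, not an automatic rewrite. Sometimes the fix is a more specific claim rather than a synonym.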

This is one place where an operational tool stack can help. If you are building pages fast and want the final output to stay closer to one conversion style, Replo is useful for turning controlled messaging into landing page execution without constantly rebuilding from scratch. The tool does not solve voice by itself, but it gives a cleaner environment for preserving it once you have done the hard strategic work.

Specificity Is What Makes Copy Believable

Most bad ai copy has the same disease: it says things that are technically fine but emotionally weightless. Phrases like “streamline your workflow,” “unlock growth,” and “save time and money” are not always false. They are just too generic to do any serious persuasive work on their own.

Specificity fixes that. Specificity means naming the audience more clearly, describing the problem in concrete terms, using sharper verbs, and attaching claims to real mechanisms or proof. Instead of saying a tool “improves communication,” strong ai copy explains what actually changes, for whom, and at what moment in the workflow.

This is also where professional review matters most. Google’s guidance on generative AI content warns against producing lots of pages without adding value, and that is exactly what generic copy does. It adds volume without adding clarity. (developers.google.com)

A simple editing question helps here: could a competitor copy-paste this paragraph onto their site without changing much? If the answer is yes, the copy is still too broad. Keep pushing until the language feels anchored to a distinct problem, offer, and user.

Proof Beats Fluency

One reason ai copy can be dangerous in the hands of inexperienced teams is that it sounds finished before it is trustworthy. The sentences come out smooth. The rhythm feels polished. That creates false confidence, and false confidence is expensive when the copy is making claims about results, performance, or outcomes.

This is where proof has to enter the process. The strongest copy is usually doing one or more of these things:

- linking claims to product features people can verify

- using customer language pulled from real conversations

- grounding performance claims in actual internal data

- referencing public standards, documentation, or policies when relevant

McKinsey’s 2025 workplace research is useful context because it shows the scaling problem clearly: nearly all companies are investing in AI, yet just 1% describe themselves as being at maturity. That gap exists partly because output alone is easy, but reliable operational use is harder. The same thing applies to ai copy. Anyone can generate drafts. Fewer teams can turn those drafts into trustworthy assets that hold up under scrutiny. (mckinsey.com)

Channel Fit Changes Everything

The same ai copy should not be pushed unchanged into every format. A homepage headline, a product page explanation, a sales email, a LinkedIn post, and an SMS follow-up all operate under different attention conditions. The copy has to respect that or it starts feeling off even when the core message is sound.

This is why a strong system separates message architecture from channel execution. First, define the main promise, proof, objections, and CTA. Then adapt the structure for the channel. Long-form pages need narrative flow and proof stacking. Ads need fast contrast. Email often needs curiosity plus clarity. Chat and DMs need speed and relevance.
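The split between message architecture and channel execution can be made explicit in how the material is stored. The core promise, proof, objection handling, and CTA are defined once, and each channel gets its own job and length budget. The Python sketch below uses hypothetical field names and character limits chosen only for illustration; they are not platform requirements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MessageArchitecture:
    """The channel-independent core: defined once, reused everywhere."""
    promise: str
    proof: str
    objection: str
    cta: str


@dataclass(frozen=True)
class ChannelSpec:
    """Hypothetical per-channel job and length budget (illustrative numbers)."""
    name: str
    job: str
    max_chars: int


def draft_for_channel(core: MessageArchitecture, spec: ChannelSpec) -> str:
    """Assemble a first-pass draft for one channel and check it against the budget."""
    if spec.max_chars < 200:
        # Short formats carry only the promise and the CTA
        text = f"{core.promise} {core.cta}"
    else:
        # Long formats stack promise, proof, objection handling, and CTA
        text = f"{core.promise}\n\n{core.proof}\n\n{core.objection}\n\n{core.cta}"
    if len(text) > spec.max_chars:
        raise ValueError(f"{spec.name} draft is {len(text)} chars; budget is {spec.max_chars}")
    return text


core = MessageArchitecture(
    promise="Book more qualified calls from the traffic you already have.",
    proof="Built from the objections your sales team hears every week.",
    objection="No new ad spend required to start.",
    cta="Book a 20-minute walkthrough.",
)
sms = ChannelSpec("sms", "move the lead to the next step", 160)
page = ChannelSpec("landing_page", "explain and prove", 2000)
print(draft_for_channel(core, sms))
```

The assembled text is only a skeleton for a writer or model to adapt. The point is that every channel draws on the same approved core, so the sales logic cannot drift between assets.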

This is also where automation platforms become more than a nice extra. If you want ai copy to move from messaging into triggered follow-up, segmentation, and conversion workflows, ManyChat and Brevo are useful examples of where copy and distribution start working together instead of living in separate silos. Good ai copy gets stronger when it is built for the system it will actually run inside.

How Professionals Turn Drafts Into Revenue Assets

The professional move is not publishing the first decent draft. It is treating the first draft as raw material. That mindset changes everything because it turns ai copy from a shortcut into a compounding production process.

A practical professional workflow usually looks like this:

- collect source material from sales, support, product, and customer research

- define the conversion goal and audience segment

- prompt for multiple angles instead of one final answer

- select the strongest ideas and combine them

- edit aggressively for specificity, proof, and voice

- adapt the message to each channel

- test performance and keep only what earns its place

That workflow sounds simple, but it is where the real edge lives. Most people stop after prompting for angles. Professionals make their money in what comes next: selecting, combining, editing, adapting, and testing.

The reason this matters is that AI is very good at helping teams expand the option set. It can generate five hooks instead of one, three email structures instead of one, or ten CTA variants instead of one. That has real value. But the financial upside only appears when someone knows how to evaluate those options against actual business goals.

That is the shift that matters next: moving from the building blocks of ai copy into the implementation layer, where strong teams use these drafts in campaigns, pages, sequences, and workflows that are meant to perform, not just read nicely.

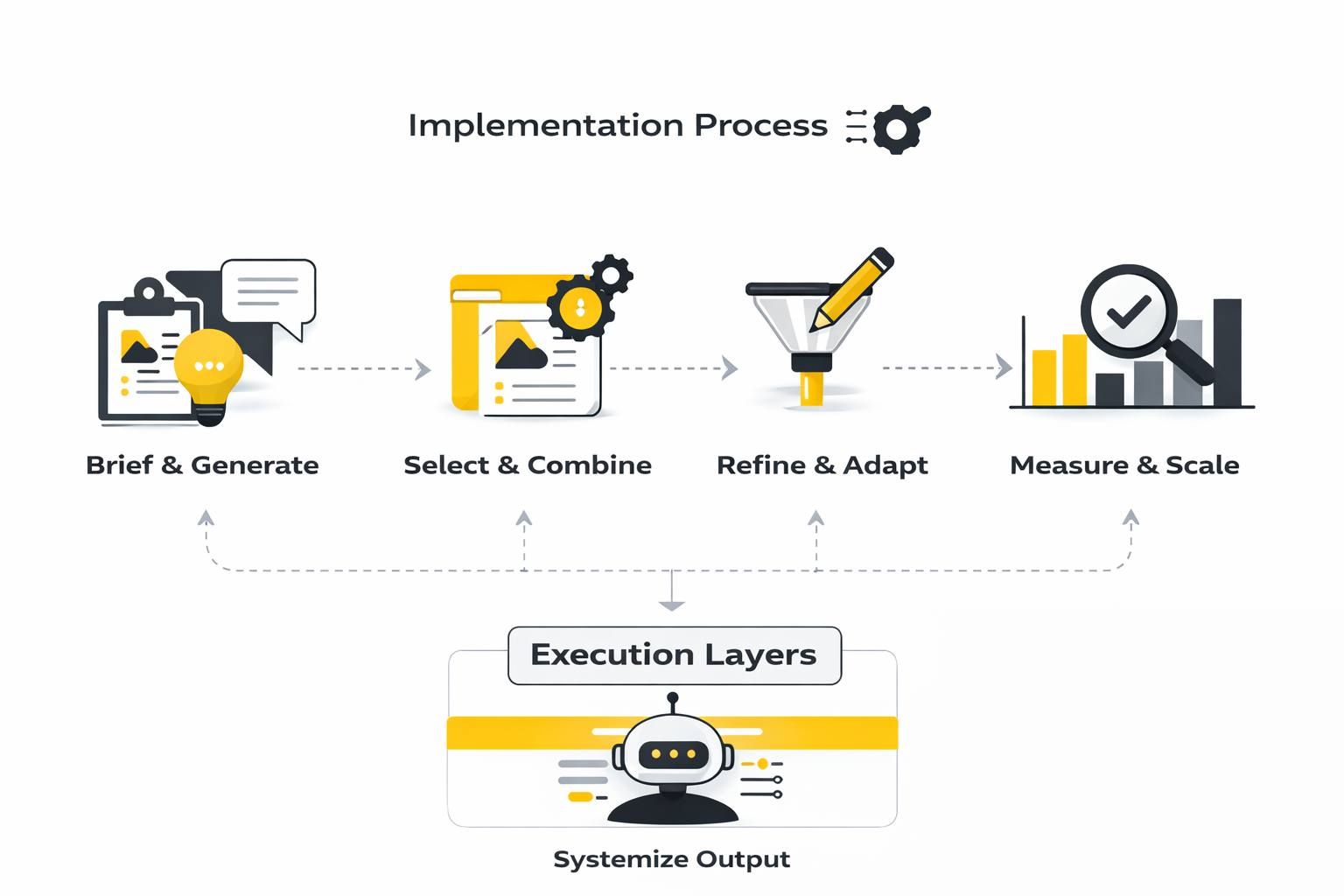

The jump from decent ai copy to useful ai copy happens in execution. This is the stage where strategy turns into assets, assets turn into workflows, and workflows start producing pipeline, sales conversations, booked calls, or support deflection instead of just looking good in a doc. A lot of teams never make this leap because they treat AI like a writing toy instead of a production layer.

That is the mistake to avoid. The best operators do not ask ai copy to replace thinking. They use it to compress the path from message strategy to live execution, then they tighten the system with review, testing, and feedback loops.

Start With One Conversion Path, Not Ten

The cleanest way to implement ai copy is to begin with one path that matters. That could be a lead-gen landing page tied to an email sequence, a product page tied to retargeting ads, or a chatbot flow tied to qualification and handoff. One focused path is easier to measure, easier to improve, and much harder to fake.

This matters because complexity hides bad copy. If you launch five channels, twelve offers, and three audiences at the same time, you will never know whether the messaging failed, the targeting failed, or the workflow failed. A single conversion path gives you a controlled environment where ai copy can actually be judged on performance.

It also forces clarity. You have to define the promise, the audience, the CTA, and the handoff point. That alone improves the writing before the model even starts generating drafts.

Build a Source Pack Before You Generate Anything

Professionals do not improvise their inputs. They assemble a source pack that gives the model something real to work with, because ai copy is only as good as the material underneath it. This is where most weak implementations fall apart.

A useful source pack usually includes:

- product or service description

- audience segment details

- customer objections

- customer language from calls, reviews, or support tickets

- proof points, case study notes, or performance data

- brand voice rules

- examples of copy that has already worked

Once you have that pack, prompting gets dramatically easier. You are no longer asking a model to invent a message from thin air. You are asking it to organize, sharpen, and expand material that already reflects the business.
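In practice, a source pack can be a structured record that is rendered into one labeled context block before any generation step. The Python sketch below mirrors the list above with hypothetical section names. It only builds the text you would pass to whichever model you use. It also refuses to build a pack that has no proof points, so unsupported drafting is caught before it starts.

```python
from dataclasses import dataclass, field


@dataclass
class SourcePack:
    """Hypothetical container for the source-pack inputs listed above."""
    offer: str
    audience: str
    objections: list[str] = field(default_factory=list)
    customer_language: list[str] = field(default_factory=list)
    proof_points: list[str] = field(default_factory=list)
    voice_rules: list[str] = field(default_factory=list)
    proven_examples: list[str] = field(default_factory=list)


def render_context(pack: SourcePack) -> str:
    """Render the pack as labeled sections for a model prompt."""
    if not pack.proof_points:
        raise ValueError("Source pack has no proof points; add them before drafting.")
    sections = [
        ("OFFER", [pack.offer]),
        ("AUDIENCE", [pack.audience]),
        ("OBJECTIONS", pack.objections),
        ("CUSTOMER LANGUAGE (verbatim)", pack.customer_language),
        ("PROOF (only claim what is listed here)", pack.proof_points),
        ("VOICE RULES", pack.voice_rules),
        ("COPY THAT HAS WORKED", pack.proven_examples),
    ]
    lines = []
    for title, items in sections:
        if not items:
            continue  # skip empty sections rather than sending blank headers
        lines.append(f"## {title}")
        lines.extend(f"- {item}" for item in items)
        lines.append("")
    return "\n".join(lines).strip()


# Hypothetical example business
pack = SourcePack(
    offer="Done-for-you bookkeeping for independent clinics",
    audience="Clinic owners with 2-10 staff",
    objections=["We already have an accountant"],
    customer_language=["I spend Sundays on receipts"],
    proof_points=["Onboarding takes one call"],
    voice_rules=["No fake urgency"],
)
print(render_context(pack))
```

Keeping the pack as data, rather than a pasted paragraph, makes it easy to update one section (say, new objections from sales calls) without rewriting the whole prompt.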

Turn One Message Into a System of Assets

This is the point where implementation becomes tangible. Instead of writing one isolated page, you break the message into reusable pieces that can travel across the funnel. That is how ai copy stops being a draft generator and starts acting like an operating system for marketing execution.

A practical rollout looks like this:

- write the core offer statement

- generate headline and hook variations

- build the landing page structure

- create the lead magnet or booking CTA copy

- draft the follow-up email sequence

- write retargeting ad angles

- adapt the message for chat, SMS, or DMs

- collect performance feedback and revise

This process works because it keeps the message consistent while letting the format change. The page can be more detailed, the ad can be more contrast-driven, and the follow-up can be more direct, but the underlying sales logic stays intact. That consistency is what makes implementation feel professional instead of scattered.

Use AI Copy Inside the Stack It Will Actually Run In

A lot of copy gets weaker the moment it leaves the writing document. Headings break in the page builder, follow-ups lose context inside the CRM, and chat flows become robotic because nobody adapted the message to the tool. Good implementation solves this early.

If your main job is building conversion pages fast, Replo makes it easier to move from approved messaging to page execution without rebuilding the structure every time. If the bigger need is capturing, nurturing, and routing leads, GoHighLevel is useful because the ai copy can be tied directly to forms, automations, pipelines, and follow-up sequences.

That connection matters more than people think. AI copy that lives in a document is just potential. AI copy connected to the delivery system is where actual business results start to show up.

Give Each Channel Its Own Job

One of the fastest ways to ruin implementation is to force the same ai copy into every channel unchanged. The landing page should carry explanation and proof. The email should create movement. The ad should earn the click. The chat flow should reduce friction and move the person to the next step.

That means your process has to assign jobs clearly. A paid ad does not need to explain the whole offer. A nurture email does not need to sound like a homepage. A chatbot does not need a grand brand manifesto. Each asset should do its own part of the work and then hand off cleanly to the next one.

This is where specialized tools can help the system stay tight. For example, ManyChat is useful when the message needs to keep moving inside chat-based journeys, while Brevo fits better when email, segmentation, and automation are part of the core execution path. The copy gets stronger when the channel logic is clear from the start.

Review Like an Operator, Not a Fan

Implementation is where discipline starts paying for itself. The first generated draft is not the finish line. It is just the fastest way to find out what is missing.

A strong review pass checks for:

- vague claims with no proof

- headlines that sound impressive but say little

- repeated phrases across assets

- channel mismatch

- brand voice drift

- weak calls to action

- awkward transitions between assets in the funnel

This is the part many people skip because the draft already sounds polished. That is exactly why the review matters. Smooth language can hide weak logic, and weak logic kills conversion faster than clunky phrasing ever will.
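Of the review checks above, repeated phrasing across assets is the easiest to automate. The Python sketch below assumes each asset is a plain string keyed by a name you choose. It reports every three-word phrase that appears in more than one asset, which is a rough signal that copy was reused instead of adapted. The three-word window is an arbitrary illustrative choice.

```python
import re
from collections import defaultdict


def shared_phrases(assets: dict[str, str], n: int = 3) -> dict[str, set[str]]:
    """Map each n-word phrase found in two or more assets to the assets that contain it."""
    seen: dict[str, set[str]] = defaultdict(set)
    for name, text in assets.items():
        words = re.findall(r"[a-z0-9']+", text.lower())
        for i in range(len(words) - n + 1):
            seen[" ".join(words[i : i + n])].add(name)
    return {phrase: names for phrase, names in seen.items() if len(names) > 1}


assets = {
    "landing_page": "Stop losing leads after hours. Our assistant answers every inquiry.",
    "email_1": "Stop losing leads after hours with one simple change.",
    "ad": "Every missed call is a lost sale.",
}
for phrase, names in sorted(shared_phrases(assets).items()):
    print(phrase, sorted(names))
```

Some overlap is intentional, such as a core promise repeated on purpose. The output is a list for the reviewer to judge, not a list of errors.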

Build Feedback Loops Into the Process

The smartest implementation teams do not treat ai copy as a one-way output. They feed performance data back into the system. Winning subject lines, stronger objection handling, better-performing offers, and cleaner CTA phrasing all become new source material for future drafts.

That creates compounding gains. The copy gets sharper because the inputs get sharper. Over time, you stop relying on generic prompting and start working from a growing body of real evidence about what your audience responds to.

This is also where a simple operational habit pays off: store your best lines, best hooks, strongest objections, and best-performing CTA patterns in one place. Then use them in future prompts. AI copy improves very quickly when you train the workflow around your own wins instead of asking the model to keep guessing from zero.
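A swipe file of winners only helps if the best entries are easy to pull back into the next prompt. The Python sketch below assumes a hypothetical record format: an asset type, the line itself, a volume count, and an action count. It ignores tests with too little volume to trust and returns the top performers for one asset type. The 500-send minimum is an illustrative threshold, not a statistical rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Winner:
    """One tested line and its result. Field names are hypothetical."""
    asset_type: str  # e.g. "subject_line", "hook", "cta"
    text: str
    sends: int       # impressions, sends, or visits, depending on asset type
    actions: int     # clicks, replies, or conversions

    @property
    def rate(self) -> float:
        return self.actions / self.sends if self.sends else 0.0


def top_winners(library: list[Winner], asset_type: str, k: int = 3,
                min_sends: int = 500) -> list[Winner]:
    """Return the k best-performing lines of one type, ignoring low-volume tests."""
    eligible = [w for w in library if w.asset_type == asset_type and w.sends >= min_sends]
    return sorted(eligible, key=lambda w: w.rate, reverse=True)[:k]


library = [
    Winner("subject_line", "Your Sunday receipts problem, solved", 2000, 90),
    Winner("subject_line", "Quick question about your books", 1800, 126),
    Winner("subject_line", "A new way to do bookkeeping", 300, 30),  # too little volume
    Winner("cta", "Book a 20-minute walkthrough", 900, 54),
]
best = top_winners(library, "subject_line", k=2)
print([w.text for w in best])
# → ['Quick question about your books', 'Your Sunday receipts problem, solved']
```

The third subject line has the highest raw rate (10%), but it is excluded because 300 sends is below the threshold. That is the kind of small-sample "winner" that misleads a team if it is fed back into prompts unchecked.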

Where AI Copy Breaks Down and How to Fix It

Once ai copy is live, the weaknesses become easier to spot. Some assets sound too polished to trust. Some drift into blandness. Some create clicks but not conversions because the promise is louder than the actual value. None of that means the system failed. It means the next job is diagnosis.

The good news is that the failure patterns are usually predictable, which makes them fixable. What follows breaks down the most common ways ai copy goes wrong in real-world use and the practical adjustments that bring it back under control.

The hardest part of working with ai copy is not generation. It is interpretation. A draft can look clean, a campaign can launch fast, and the dashboard can still tell you the message is underperforming because the copy is attracting the wrong click, creating weak intent, or losing trust before the action happens.

That is why measurement matters so much. Without it, teams often keep editing sentences when the real problem is offer-market fit, page structure, or audience mismatch. With it, you can tell whether ai copy needs a sharper hook, stronger proof, a different CTA, or a completely different angle.

What the Numbers Should Actually Tell You

A lot of performance reporting around ai copy is shallow. People celebrate speed, lower production costs, or higher content output, then act surprised when conversion barely moves. Volume is useful, but it is not the same as effectiveness.

The numbers that matter are the ones that reveal message quality at each stage of the journey. A headline is doing one job. A landing page is doing another. A nurture email, an ad, and a chatbot each have their own threshold for success. If you judge all of them by one blunt metric, you will make bad decisions fast.

That is also why generic benchmarks have to be handled carefully. For example, Unbounce’s latest landing page data puts the median conversion rate at about 6.6% across industries, while the top quartile in many industries starts around 11.4% or higher. That does not mean every page below those numbers is bad. It means you need context. A cold B2B offer with a higher-friction CTA will behave very differently from a warm ecommerce offer with a discount-led entry point.

The Performance Signals That Matter Most

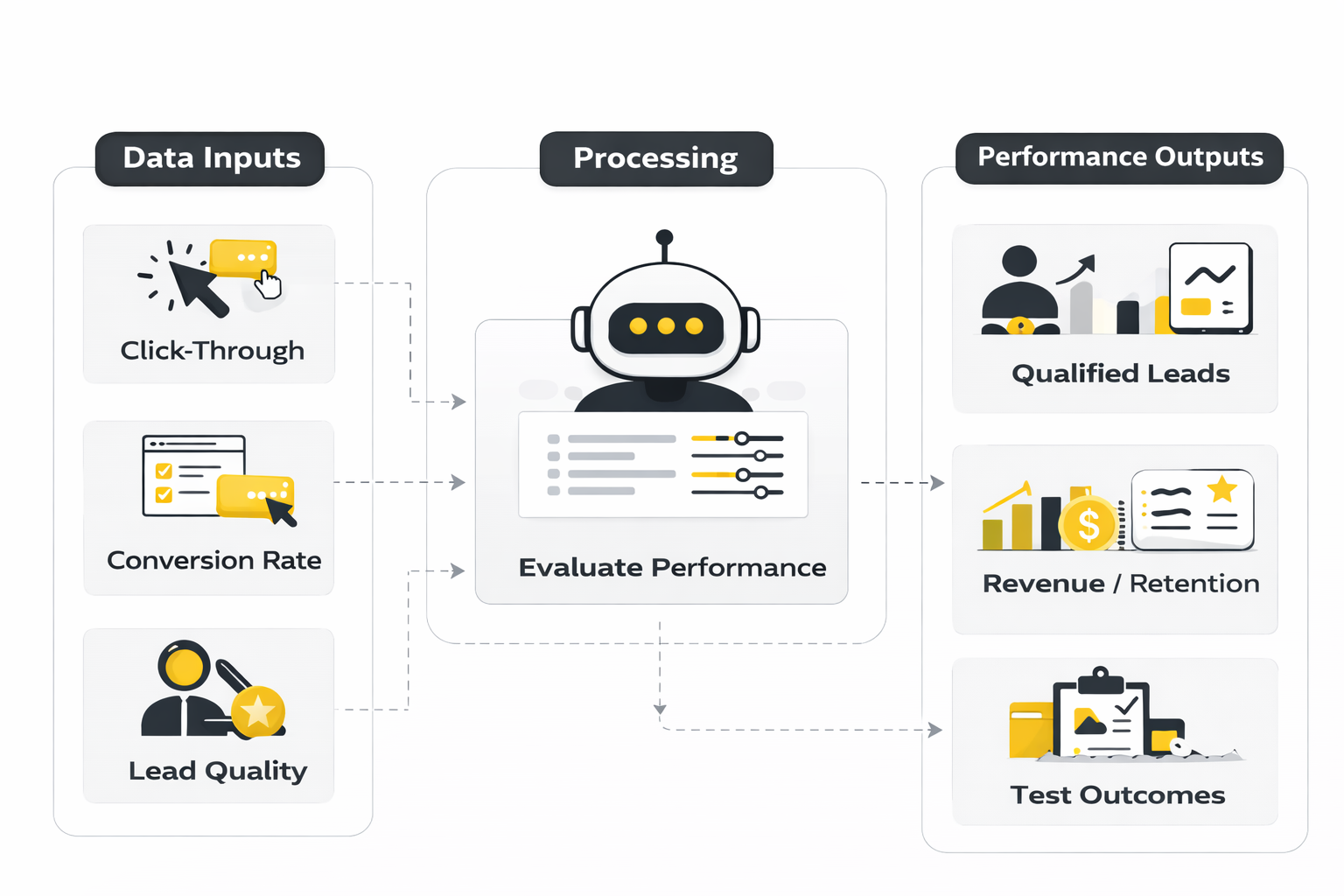

The best way to evaluate ai copy is to treat it like a chain of decisions. Each metric should tell you whether the next step in the chain is working. That keeps you from overreacting to one isolated number.

A strong measurement set usually includes:

- click-through rate

- landing page conversion rate

- form completion rate

- reply rate or booked-call rate

- sales-qualified lead rate

- revenue per visitor or pipeline per lead

- retention or downstream quality when relevant

These metrics matter because they answer different questions. Click-through rate tells you whether the message earned attention. Conversion rate tells you whether the promise, proof, and CTA held together. Lead quality tells you whether the copy attracted the right people in the first place.

Clicks Are a Signal, Not a Verdict

AI copy often improves click-through rate before it improves revenue. That is normal. Better hooks and cleaner offers can lift curiosity quickly, but curiosity by itself does not pay the bills.

Recent benchmark data is useful here as a rough frame. LocaliQ’s 2025 search advertising benchmark puts average search ad CTR at 6.66%, and WordStream’s 2025 Google Ads benchmark reports an average conversion rate of 7.52%. Those numbers matter because they remind you that attention and action are not the same event.

If ai copy lifts clicks but conversion stays flat, the likely problem is one of three things. The hook is stronger than the page. The audience is wrong. Or the copy creates expectation that the offer does not fully match. In all three cases, the lesson is the same: do not celebrate top-of-funnel gains until downstream behavior confirms them.

Landing Page Conversion Is Where the Copy Gets Exposed

Landing page conversion rate is where ai copy usually faces its first serious test. This is where the headline, subhead, proof, structure, and CTA all have to work together. If one piece is off, the whole page starts leaking attention.

That is why median and top-quartile benchmarks are useful when handled properly. Unbounce’s benchmark dataset covers 41,000 landing pages, 464 million visits, and 57 million conversions, which makes it a practical reference point for reality checking your page. Not because their number is your target, but because it helps you spot when performance is clearly outside a normal range and needs diagnosis.

If your page gets healthy traffic but weak conversion, read that as a copy problem only after ruling out friction. A long form, poor visual hierarchy, weak mobile experience, or low-trust offer can suppress performance even when the ai copy itself is directionally solid. That is why the best operators review the whole conversion environment, not just the wording.

Email and Follow-Up Metrics Reveal Message Quality Over Time

AI copy is often strongest at first touch and weaker in follow-up, because follow-up requires more nuance. You are no longer just earning interest. You are trying to maintain momentum, answer resistance, and move a prospect closer to a decision without sounding repetitive or desperate.

Email benchmarks make that clear. HubSpot’s 2025 benchmark reporting shows an average email open rate of 42.35%, while their 2026 marketing statistics page lists average email CTR at 2.5%. That gap matters. It tells you that subject lines can succeed while body copy and CTA logic still fail.

So if ai copy delivers strong opens but weak clicks or replies, the issue is not usually curiosity. It is relevance, clarity, or next-step friction. In plain English, the message got attention but did not create enough desire or confidence to move the reader.
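One way to separate subject-line performance from body-copy performance is to compute click-to-open rate (clicks divided by opens) next to open rate and click rate. The Python sketch below works from your own campaign counts; the numbers in the example are hypothetical. Mail privacy features can inflate open counts, so treat any opens-based rate as approximate.

```python
def email_rates(delivered: int, opens: int, clicks: int) -> dict[str, float]:
    """Open rate, click rate, and click-to-open rate from raw campaign counts."""
    return {
        "open_rate": opens / delivered,
        "click_rate": clicks / delivered,
        # Clicks among people who opened: isolates body copy and CTA from the subject line
        "click_to_open": clicks / opens if opens else 0.0,
    }


rates = email_rates(delivered=10_000, opens=4_200, clicks=150)
for name, value in rates.items():
    print(f"{name}: {value:.1%}")
```

In this example the subject line earned a 42% open rate, but only about 3.6% of openers clicked. That pattern points at the body copy and the next step, not the subject line.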

Lead Quality Matters More Than Cheap Conversion

This is where many teams get fooled. AI copy can absolutely help lower cost per lead or increase raw form fills. But if those leads are weak, misaligned, or never convert downstream, you have optimized the wrong outcome.

That is why serious measurement has to go past the page. HubSpot’s 2025 CPL and CAC benchmark research is useful not because it gives a universal cost target, but because it reinforces the idea that different channels produce very different economics and lead quality patterns. AI copy should be judged partly on the quality of demand it creates, not just the quantity.

For a service business, that often means looking at booked-call quality, show rate, close rate, and pipeline created. For SaaS, it may mean product-qualified leads, activation, and trial-to-paid rate. For ecommerce, it could be add-to-cart rate, checkout completion, and repeat purchase behavior. The right metric depends on the model, but the principle does not change: cheap conversion is not the same as good conversion.
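A quick way to see why cheap conversion can mislead is to compare copy variants on cost per qualified lead and pipeline per lead, not just cost per lead. The Python sketch below uses invented numbers for two hypothetical variants with the same spend.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VariantResult:
    """Hypothetical results for one copy variant over the same spend period."""
    name: str
    spend: float
    leads: int
    qualified_leads: int
    pipeline: float  # value of opportunities created downstream

    @property
    def cost_per_lead(self) -> float:
        return self.spend / self.leads

    @property
    def cost_per_qualified_lead(self) -> float:
        return self.spend / self.qualified_leads

    @property
    def pipeline_per_lead(self) -> float:
        return self.pipeline / self.leads


a = VariantResult("A: broad hook", spend=5000, leads=250, qualified_leads=20, pipeline=40000)
b = VariantResult("B: specific hook", spend=5000, leads=125, qualified_leads=25, pipeline=60000)
for v in (a, b):
    print(f"{v.name}: CPL ${v.cost_per_lead:.0f}, "
          f"cost per qualified lead ${v.cost_per_qualified_lead:.0f}, "
          f"pipeline per lead ${v.pipeline_per_lead:.0f}")
```

Variant A wins on cost per lead ($20 vs $40). It loses on cost per qualified lead ($250 vs $200) and on pipeline per lead ($160 vs $480). Judged on form fills alone, the team would keep the weaker copy.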

Build a Simple Analytics System Around the Funnel

A clean measurement system makes ai copy easier to improve because it turns vague opinions into specific decisions. You stop arguing about whether the copy “feels strong” and start asking which stage is leaking, why, and what test should come next.

A practical analytics system for ai copy looks like this:

- track impression-to-click performance for hooks, ads, or subject lines

- track click-to-conversion performance on the landing page

- track conversion-to-qualified-lead performance in the CRM

- track qualified-lead-to-revenue performance downstream

- compare winners by angle, promise, proof type, and CTA structure

- feed the best-performing patterns back into future prompts

This matters because the copy rarely fails everywhere at once. Usually one stage is responsible for most of the loss. Maybe the ad is weak. Maybe the page is overexplaining. Maybe the CTA is asking for too much too soon. A simple stage-by-stage view makes those problems visible.
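The stage-by-stage view can be computed directly from counts. The Python sketch below assumes you can export one count per funnel stage, in order, plus a baseline rate for each step from your own history; the stage names and numbers are hypothetical. It compares each step against its own baseline rather than against other steps, because some steps, like impression to click, are always low.

```python
def step_rates(counts: list[tuple[str, int]]) -> dict[str, float]:
    """Conversion rate for each adjacent pair of funnel stages."""
    rates = {}
    for (prev_name, prev_n), (name, n) in zip(counts, counts[1:]):
        rates[f"{prev_name} -> {name}"] = n / prev_n if prev_n else 0.0
    return rates


def biggest_leak(current: dict[str, float], baseline: dict[str, float]) -> tuple[str, float]:
    """Return the step whose rate fell furthest below its baseline, as a relative change."""
    changes = {
        step: (current[step] - baseline[step]) / baseline[step]
        for step in current
        if baseline.get(step)  # skip steps with no (or zero) baseline
    }
    step = min(changes, key=changes.get)
    return step, changes[step]


this_month = [("impressions", 50_000), ("clicks", 1_500), ("leads", 120),
              ("qualified", 30), ("customers", 6)]
baseline = {"impressions -> clicks": 0.03, "clicks -> leads": 0.10,
            "leads -> qualified": 0.25, "qualified -> customers": 0.20}

step, change = biggest_leak(step_rates(this_month), baseline)
print(f"{step}: {change:.0%}")  # → clicks -> leads: -20%
```

Here every step is on baseline except click to lead, which is down 20%. That points the next test at the landing page, not the ad or the sales follow-up.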

If you want the operational side of that system to stay tight, GoHighLevel is useful when you need one place for pages, forms, follow-up, and pipeline tracking. If the main challenge is email and segmentation, Brevo gives a cleaner environment for seeing how copy performs across campaigns and lists. The exact stack matters less than making sure the message and the measurement live close enough together to inform each other.

Benchmarks Are Starting Points, Not Commands

Benchmarks are helpful when they stop you from guessing. They are harmful when they replace judgment. If a page converts below average but sells a high-ticket service with strong close rates, the copy may still be doing its job. If a campaign beats average CTR but brings in weak-fit leads, the copy may need to be rebuilt.

This is why interpretation matters more than the stat itself. Numbers should drive action, not ego. A low CTR might push you to test stronger hooks. A healthy CTR with weak conversion might push you to rewrite the promise and proof. A good conversion rate with poor downstream quality might mean the copy is attracting the wrong segment altogether.

That is the real job of analytics in ai copy. Not to decorate a report. To tell you what to change next.
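The three interpretations above can be written down as explicit rules, so a team applies them the same way every time. The Python sketch below assumes you supply your own baseline for each rate. "Healthy" here simply means at or above that baseline, which is a deliberate simplification. The checks run in funnel order, so an upstream problem is addressed before a downstream one.

```python
def next_test(ctr: float, conversion: float, qualified_share: float,
              baselines: dict[str, float]) -> str:
    """Suggest what to change next, checking the funnel from the top down."""
    if ctr < baselines["ctr"]:
        return "Test stronger hooks."
    if conversion < baselines["conversion"]:
        return "Rewrite the promise and proof on the page."
    if qualified_share < baselines["qualified_share"]:
        return "Check the audience: the copy may be attracting the wrong segment."
    return "No stage is below baseline; keep testing new angles."


# Hypothetical baselines from your own past campaigns
baselines = {"ctr": 0.02, "conversion": 0.06, "qualified_share": 0.30}
print(next_test(ctr=0.03, conversion=0.04, qualified_share=0.40, baselines=baselines))
```

The rules are only as good as the baselines behind them. A high-ticket offer with a naturally low conversion rate needs its own baseline, not an industry median.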

The Future of AI Copy and the Questions That Matter Most

Once you start measuring ai copy properly, the conversation shifts. It stops being about whether AI can write and starts becoming about how teams keep output useful, differentiated, and trusted as the market gets noisier. That is where the final part of this article goes next.

We will look at what is changing, what is likely to get harder, and what smart operators should focus on if they want ai copy to stay valuable instead of becoming just another source of cheap words.

The next phase of ai copy is not about whether teams can generate more words. That part is already solved. The real shift is that more brands now have access to the same baseline writing power, which means the durable advantage is moving toward judgment, source quality, distribution discipline, and the ability to turn raw output into something distinctive and trustworthy.

That change is already visible in how organizations are using AI. McKinsey’s 2025 State of AI survey shows generative AI use rising sharply, with marketing and sales still among the functions where companies most often report revenue impact. That is encouraging, but it also means ai copy is becoming a normal capability rather than a novelty. Once everyone has access to the tool, the edge comes from how well the system is run.

Scale Creates Sameness Faster Than Most Teams Expect

One of the biggest strategic tradeoffs with ai copy is that scale is both the benefit and the danger. You can produce more pages, more variants, more emails, more ads, and more support content far faster than before. But if the source material is weak or the review layer is lazy, you do not get scale with quality. You get scale with sameness.

Google has been unusually direct about this. Its guidance on creating helpful, reliable, people-first content and using generative AI content on your site makes the standard clear: AI can absolutely support content creation, but scaled pages without real value can still become a quality problem. That matters because ai copy often fails quietly. It does not always look broken. It just starts sounding interchangeable, and then performance softens over time.

For experienced operators, this creates a simple rule. Do not scale output until you have a proven editorial system for protecting originality, specificity, and usefulness. Otherwise the workflow becomes a machine for manufacturing average content faster.

The Winning Teams Will Treat AI Copy Like a Managed System

The most mature use of ai copy will look less like individual prompting and more like operational design. That means shared voice rules, approved source packs, legal and compliance review where needed, human QA checkpoints, and structured testing loops. It is not glamorous, but this is exactly where value compounds.

McKinsey’s 2025 survey data shows a wide spread in how organizations review AI-generated outputs before use: a meaningful share of respondents say their organizations review all outputs, while many others review only part of what they publish. That gap matters. The difference between high-trust ai copy and risky ai copy is often not the model. It is the governance around the model.

NIST’s Generative AI Profile for the AI Risk Management Framework pushes the same idea from a different angle. The guidance is broader than writing, but the lesson applies directly: generative AI systems need documented controls around risk, oversight, and evaluation. For copy teams, that translates into practical habits such as source validation, claim review, disclosure decisions, escalation rules, and clear ownership over what gets published.
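As a rough illustration of what documented controls can look like for a copy team, here is a minimal Python sketch of a pre-publish gate. It is an assumption-based example, not something taken from the NIST profile: the `Draft` fields, the `HIGH_RISK_TOPICS` set, and the `review` function are hypothetical names that cover three of the habits above (claim review, escalation rules, and clear ownership).

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical topics where a human must approve before anything goes live.
HIGH_RISK_TOPICS = {"finance", "health", "legal"}


@dataclass
class Draft:
    """A piece of AI-assisted copy awaiting review (illustrative fields only)."""
    text: str
    topic: str
    claims: list[str]                       # factual claims the copy makes
    verified_claims: set[str] = field(default_factory=set)
    owner: Optional[str] = None             # person accountable for publishing
    human_approved: bool = False            # sign-off for escalated topics


def review(draft: Draft) -> list[str]:
    """Return blocking issues; an empty list means the draft may go live."""
    issues: list[str] = []
    if draft.owner is None:
        issues.append("no named owner")
    for claim in draft.claims:
        if claim not in draft.verified_claims:
            issues.append(f"unverified claim: {claim}")
    if draft.topic in HIGH_RISK_TOPICS and not draft.human_approved:
        issues.append("escalate: human review required for high-risk topic")
    return issues


if __name__ == "__main__":
    draft = Draft(
        text="Cut onboarding time in half.",
        topic="saas",
        claims=["cuts onboarding time in half"],
    )
    print(review(draft))  # two issues: no owner, and one unverified claim
```

The gate only reports problems; it does not decide anything on its own. That keeps the final call with a named person, which is the point of clear ownership.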

Prompting Skill Will Matter Less Than Context Engineering

Right now a lot of the conversation still revolves around prompts. That makes sense, because prompting is the visible part of the workflow. But over time, the bigger competitive advantage is likely to come from context engineering: the ability to feed the model the right customer language, historical winners, objection data, offer logic, product constraints, and brand rules in a structured way.

OpenAI’s official prompt engineering guide and best practices article both reinforce the same pattern. Better outputs come from clearer instructions, stronger context, and iterative refinement rather than one magical command. That matters because it changes what “good at AI copy” really means. It is less about writing clever prompts and more about designing a repeatable information flow.

This is good news for serious teams. It means the advantage is still very human. The people who understand their market, their product, and their buyers most deeply will usually get better results from the same model than the people who only know how to ask for “10 persuasive headline variations.”
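The repeatable information flow described above can be sketched as a fixed context pack that is rendered the same way for every request. The Python below only assembles text; it does not call any model API. The `ContextPack` fields, the `build_prompt` function, and the section titles are hypothetical choices that mirror the inputs named in this section.

```python
from dataclasses import dataclass, field


@dataclass
class ContextPack:
    """Hypothetical structured inputs a team might maintain for AI copy work."""
    offer: str
    voice_rules: list[str] = field(default_factory=list)
    customer_quotes: list[str] = field(default_factory=list)
    objections: list[str] = field(default_factory=list)
    proof_points: list[str] = field(default_factory=list)
    past_winners: list[str] = field(default_factory=list)


def section(title: str, items: list[str]) -> str:
    """Render one labeled block as a bullet list; empty sections are skipped."""
    if not items:
        return ""
    lines = "\n".join(f"- {item}" for item in items)
    return f"## {title}\n{lines}\n"


def build_prompt(pack: ContextPack, task: str) -> str:
    """Assemble the same structured context, in a fixed order, for every task."""
    parts = [
        f"## Offer\n{pack.offer}\n",
        section("Voice rules", pack.voice_rules),
        section("Customer language (verbatim)", pack.customer_quotes),
        section("Objections to address", pack.objections),
        section("Approved proof points (do not invent others)", pack.proof_points),
        section("Past winning copy", pack.past_winners),
        f"## Task\n{task}\n",
    ]
    return "\n".join(p for p in parts if p)


if __name__ == "__main__":
    pack = ContextPack(
        offer="Onboarding software for small clinics",
        customer_quotes=["I just want setup done before Monday."],
        proof_points=["Used by 40 clinics in our pilot"],
    )
    print(build_prompt(pack, "Write 3 headline options."))
```

Keeping the order and labels fixed makes outputs easier to compare across runs, and the explicit "do not invent others" label on proof points is one simple way to push claim discipline into the context itself.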

Trust Will Become a Bigger Constraint Than Production Speed

There is a reason this topic keeps coming back to trust. AI copy is now good enough to sound polished before it is fully accurate, well-calibrated, or aligned with the brand. That creates a risk profile that older content systems did not have at the same scale. The output can look finished while still being strategically wrong.

NIST’s AI risk management guidance is relevant here because it frames trustworthiness as something that has to be actively managed, not assumed. In marketing terms, that means teams need to think about more than grammar and persuasion. They need to think about factual integrity, misleading claims, disclosure decisions, security around inputs, and whether the system is quietly drifting away from the brand’s real standards.

This is also where the market may get harsher. Adobe’s 2026 AI and Digital Trends consumer report points to growing consumer exposure to AI across search, shopping, and support. As audiences become more familiar with machine-generated interactions, they are likely to become more sensitive to generic wording, fake confidence, and copy that feels optimized but not genuinely helpful. In other words, the bar for believable ai copy is going up, not down.

Search, AI Interfaces, and Discovery Are Changing the Job of Copy

Another advanced shift is that ai copy is no longer being written only for a blue-link search result or a human reading a static page from top to bottom. Content now lives inside AI Overviews, AI-assisted search experiences, chat interfaces, recommendation systems, and multi-step discovery journeys. That changes what strong copy needs to do.

Google’s guidance on AI features in Search and its advice on succeeding in AI search experiences both point in the same direction: unique, non-commodity content is more valuable in environments where users ask detailed questions and expect direct answers. For ai copy, that means generic filler becomes even less useful. If your content does not add something concrete, it has fewer places to hide.

The strategic implication is simple but important. Copy teams need to think beyond page-level optimization. They need to think in terms of extractable insights, quotable specificity, crisp answers, and content structures that remain useful when parts of the message are surfaced out of context by AI systems.

The Real Risk Is Letting AI Flatten Your Thinking

This is the part experts worry about for good reason. If ai copy becomes the first draft, the second draft, and the final draft, teams can slowly lose the muscle that made the messaging sharp in the first place. The danger is not just factual error. It is strategic drift.

There is early evidence that the human side of the workflow still matters enormously. Harvard Business Review’s coverage of research on generative AI and work keeps returning to the same tension: AI can boost productivity, but human skills like framing, judgment, and creativity become more important, not less, as adoption deepens. That is exactly the right way to think about ai copy. The tool can speed up expression, but it should not replace the hard work of deciding what is worth saying and why anyone should believe it.

So the expert-level rule is this: use ai copy to expand options, reduce blank-page friction, and accelerate production. Do not use it to outsource your positioning, your standards, or your understanding of the customer. That is where the real asset still lives.

What Smart Teams Should Do Next

The most practical next step is not chasing every new AI writing feature. It is building a tighter operating model around the basics that already matter:

- collect better customer language

- define stronger offer positioning

- create reusable voice and proof libraries

- connect copy performance to downstream business results

- set review standards before scaling output

- keep human judgment at the center of final decisions

That approach may sound less exciting than “fully automated content.” Good. It is also far more likely to produce work that converts, holds up under scrutiny, and still sounds like it came from a business with a brain.

The final part of this article brings everything together by answering the most common questions people still have about ai copy: when it works best, where it goes wrong, and how to use it without weakening the brand.

The practical future of ai copy is not one perfect model or one perfect prompt. It is an ecosystem where strategy, source material, editing, automation, analytics, and governance all work together. The teams that win will be the ones that treat ai copy as part of a managed system instead of a shortcut for publishing more words faster.

That matters because the market is moving in two directions at once. Adoption keeps rising, and McKinsey’s 2025 State of AI survey continues to show strong revenue impact in marketing and sales, but Google’s guidance on helpful content and generative AI content still makes one thing clear: scale without value is not a strategy. So the endgame is not “more AI.” It is better systems, sharper judgment, and stronger execution.

FAQ

What is ai copy?

AI copy is written content created with the help of artificial intelligence tools, usually language models that can draft, rewrite, expand, summarize, or adapt text for different channels. In practice, that can include ads, emails, landing pages, product descriptions, sales scripts, chatbot responses, and blog content. The important detail is that the tool generates language, but the business still needs to supply the positioning, proof, judgment, and final approval.

Is ai copy good for SEO?

AI copy can support SEO when it helps you publish useful, original, people-first content with real value. It becomes a problem when teams use it to mass-produce thin pages, generic rewrites, or scaled content that adds nothing new, which is exactly the risk Google warns about in its guidance on generative AI content. The smart move is to use AI for structure, research organization, and first drafts, then add expertise, specificity, and editorial review before publishing.

Can ai copy replace human copywriters?

No, not if the goal is strong work. AI copy can remove repetitive drafting work and speed up production, but it still struggles with positioning, emotional calibration, fact discipline, and the kind of strategic sharpness that separates average messaging from persuasive messaging. The real shift is not replacement. It is that strong copywriters who know how to direct AI can usually produce more and test more without sacrificing standards.

How do I make ai copy sound more human?

Start by feeding the model real human language instead of generic instructions. Customer interviews, call transcripts, support conversations, reviews, and past winning copy will do far more for quality than asking the tool to “sound natural.” Then tighten the output by removing clichés, flattening fake hype, and replacing vague benefits with more concrete language.

What is the biggest mistake people make with ai copy?

The biggest mistake is trusting fluent output too early. AI copy often sounds polished before it is specific, accurate, persuasive, or aligned with the brand, which creates false confidence. The fix is simple but not optional: treat the first draft as raw material, not as something ready to publish.

How should I measure whether ai copy is working?

Measure ai copy by the business result it is supposed to drive, not just by how quickly it was produced. That usually means tracking one stage at a time, from click-through rate to conversion rate to qualified lead rate to revenue or retention, depending on your business model. If the top of the funnel improves but downstream quality gets worse, the copy may be creating interest without attracting the right people.

Does ai copy work better for short-form or long-form content?

It can work for both, but the job is different. In short-form copy, AI is often useful for variations, hooks, and CTA testing because speed matters and options matter. In long-form copy, AI is more useful as a drafting and structuring assistant, while the human writer still needs to control argument flow, evidence, differentiation, and consistency.

What tools pair well with ai copy in a real workflow?

The best tools depend on where the copy needs to live after it is written. If you are turning messaging into conversion pages quickly, Replo can help with execution, while GoHighLevel is more useful when copy needs to connect to automations, pipelines, and follow-up. For chat journeys, ManyChat is a practical fit, and for email execution and segmentation, Brevo is often a cleaner match.

Is prompt engineering the main skill behind good ai copy?

It matters, but it is not the main thing. OpenAI’s own guidance on prompt engineering and best practices keeps pointing back to the same reality: better context and clearer instructions lead to better outputs. In real work, context engineering wins. The team with better customer language, better proof, and better voice rules usually beats the team with the flashier prompt.

How do I keep ai copy compliant and trustworthy?

Build a review layer around it. NIST’s Generative AI Profile exists for a reason: generative systems create risks around accuracy, trust, oversight, and evaluation that need to be managed deliberately. For copy teams, that means checking claims, validating sources, defining approval rules, and deciding where human review is mandatory before anything goes live.

Should I tell readers that copy was created with AI?

That depends on the context, the expectations of the audience, and the level of risk in the content. For routine marketing copy, the more important question is often whether the content is accurate, useful, and honest rather than how the draft was produced. But in sensitive areas such as finance, health, legal information, or high-trust brand communication, transparency and tighter oversight become much more important.

Can small teams get a real advantage from ai copy?

Yes, and this is one of the most practical upsides. A small team can use ai copy to test more angles, launch assets faster, and keep a larger content engine moving without hiring at the same pace. The advantage only holds if the team stays disciplined, though, because speed without standards just produces faster waste.

What should I do first if I want better ai copy this week?

Do not start with a new tool. Start by collecting better inputs: your best customer quotes, your best-performing copy, your main objections, your clearest proof points, and a simple voice guide. Once you have that, your prompts improve immediately, your edits get easier, and the output stops sounding like borrowed internet language.

Work With Professionals

Explore 10K+ Remote Marketing Contracts on MarkeWork.com

Most marketers spend too much time chasing clients, competing on crowded platforms, and losing a percentage of every project to middlemen. MarkeWork gives you a better way. Browse thousands of remote marketing contracts and connect directly with companies desperate to hire skilled marketers like you, without platform commissions and without unnecessary gatekeepers.

If you're serious about finding better opportunities and keeping 100% of what you earn, explore available contracts and create a profile for free at MarkeWork.com.