An AI copy writer is no longer a novelty tool sitting on the edge of a marketing stack. It has become part of the daily workflow for teams that need to publish faster, test more angles, and keep up with the sheer volume of content modern channels demand. That shift is easy to see in current market data: 80% of marketers use AI for content creation, while Adobe reports that 53% of organizations still say their content supply chain is too linear and resource-intensive. That combination explains why the pressure to use AI keeps rising.

The real story is not that AI suddenly writes better than skilled humans. It is that businesses now have a practical way to move from blank page to first draft, from one campaign angle to ten, and from one landing page to a structured testing program without multiplying headcount. At the same time, the risks are just as real, because Google still prioritizes helpful, reliable, people-first content, and McKinsey found that only 27% of organizations using gen AI say all AI-created content is reviewed before use, which leaves plenty of room for sloppy execution.

That is why the useful question is not whether an AI copy writer works. The useful question is how to structure it so the output is faster without becoming generic, cheaper without becoming risky, and scalable without losing the voice, judgment, and originality that make copy convert in the first place. This article is built around that exact problem.

Article Outline

The topic needs a structure that moves from definition to execution, because most readers are not struggling to find an AI writing tool. They are struggling to understand where it fits, what good implementation looks like, and how to avoid the trap of publishing polished-looking copy that says nothing new. So the article will follow a six-part path that starts with the big picture and ends with practical decisions, examples, and FAQs.

The section names below are the exact headings the rest of the article will continue using. They are ordered to help the reader go from understanding the category to evaluating tools, building a workflow, and using an AI copy writer professionally instead of casually.

- Why AI Copy Writers Matter Now
- The AI Copy Writer Framework
- Core Components of High-Performing AI Copy
- Where AI Copy Writers Work Best
- Professional Implementation and Workflow Design
- Choosing the Right AI Copy Writer and Final FAQ

Why AI Copy Writers Matter Now

The demand problem came first. Marketing teams are expected to produce more landing pages, more email variants, more ad angles, more nurture sequences, more product messaging, and more channel-specific rewrites than a traditional editorial workflow was ever built to handle. That pressure shows up clearly in current research: content creation remains one of the top AI use cases for marketers, and Adobe’s latest digital trends reporting shows that 53% of organizations still describe their content supply chain as linear and resource-intensive.

That is why the AI copy writer category keeps expanding. Businesses are not buying into the idea because they want robotic blog posts. They are buying into it because faster ideation, faster drafting, and faster variation creation solve a real operational bottleneck long before they solve a creative one.

There is also a hard productivity argument behind the trend. In Ahrefs’ 2025 survey work, 87% of content marketers said they use AI to help create content, and teams using AI reported publishing 42% more content each month. That does not automatically mean better content, but it absolutely means the economics of first-draft production have changed.

Speed Is Valuable, but Only If Quality Survives

This is where a lot of teams get sloppy. They assume that if an AI copy writer can generate text quickly, the main problem is solved, when in reality speed without editorial control just creates a larger pile of average content. Google’s current search guidance is still built around helpful, reliable, people-first content, which means fast output only matters if the result is genuinely useful.

That tension is now one of the defining realities of AI-assisted marketing. McKinsey’s 2025 global survey found that only 27% of respondents whose organizations use gen AI say all AI-created content is reviewed before use. So the upside is obvious, but so is the gap between experimentation and disciplined implementation.

This is the point most people miss. An AI copy writer is not just a writing shortcut. It is a leverage tool that magnifies the strength of your process if your process is good, and magnifies the weakness of your process if your standards are vague.

The Market Has Moved from Novelty to Infrastructure

A few years ago, AI writing tools were treated like clever sidekicks for headline ideas and awkward first drafts. That is not the situation now. The market has moved toward workflow integration, structured prompting, brand voice controls, multi-step campaign production, and connected systems that feed AI with product data, customer context, and channel constraints.

You can see that shift in the way platforms position themselves. Tools are no longer selling “write a paragraph in seconds” as the main promise. They are selling campaign orchestration, content systems, automated follow-up, CRM-connected messaging, and landing page production, which is why platforms like GoHighLevel, Replo, and ClickFunnels increasingly show up in the same operational conversations as AI copy tools.

That matters because it changes how you should evaluate an AI copy writer. The question is not just whether it can produce decent prose. The real question is whether it helps you ship stronger assets inside a real business workflow where approvals, brand voice, offers, segmentation, and conversion goals all matter.

The AI Copy Writer Framework

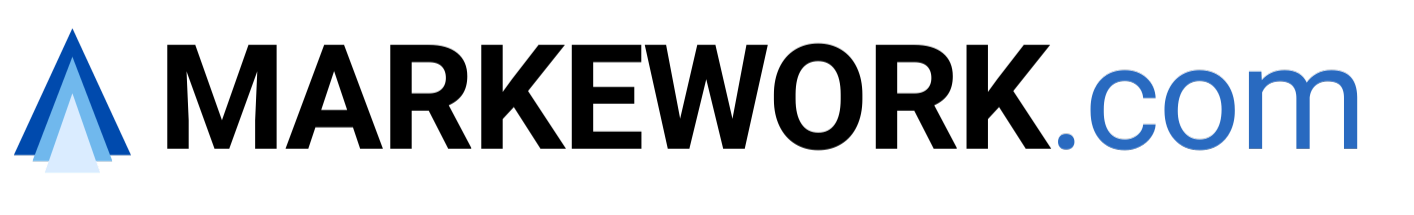

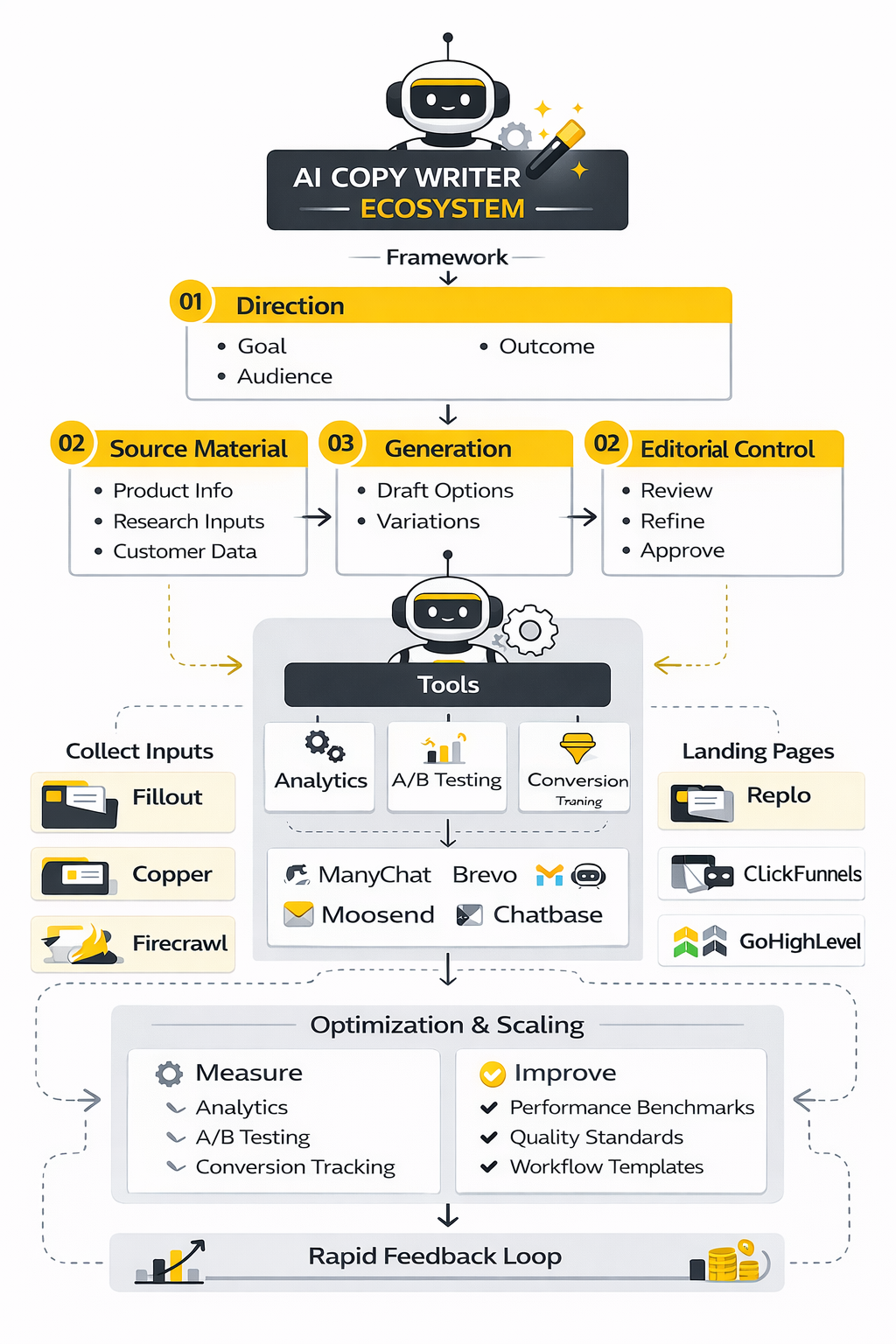

The cleanest way to think about an AI copy writer is as a four-layer system. First comes direction, because the model needs a goal, audience, and outcome. Then comes source material, because the model needs something real to work from. Then comes generation, where drafts and variations are produced. Finally comes editorial control, where human judgment turns plausible copy into publishable copy.

This framework matters because most disappointment with AI writing does not come from the model being incapable. It comes from users skipping the earlier layers and expecting the tool to invent strategy, research, positioning, and proof on its own. When that happens, the copy often sounds polished on the surface while staying empty underneath.

Layer 1: Strategy Before Prompting

A strong AI copy writer workflow starts before anyone types a prompt. You need clarity on the offer, the audience, the level of awareness, the objection landscape, and the action you want the reader to take. Without that, the model fills the gaps with generic patterns, and generic patterns are exactly what make AI copy feel interchangeable.

This is why good teams treat prompting as an extension of strategy, not a replacement for it. The better the strategic inputs, the more useful the draft becomes. The worse the inputs, the more time the team spends editing around vagueness that should never have been there in the first place.

In practice, this is also where businesses begin separating “toy use” from professional use. A freelancer playing with a blank prompt can still get decent ideas. A team trying to produce on-brand sales pages, outbound sequences, or lifecycle emails needs a repeatable briefing system.

Layer 2: Real Inputs Beat Clever Prompts

Prompt engineering gets too much credit when the real differentiator is usually input quality. An AI copy writer performs better when it is grounded in actual product information, customer interviews, sales call notes, review language, support tickets, and brand positioning documents. That is how the model stops sounding like the internet’s average opinion and starts sounding like your business.

This is also why connected workflows matter so much. If you can pull structured context from forms, CRMs, scheduling systems, or scraped product data, the model has something concrete to work with instead of being forced to improvise. Tools like Fillout, Copper, Cal.com, and Firecrawl become relevant here not because they are “writing tools,” but because they help feed the writing system with cleaner inputs.

This is where many weak AI workflows fail. They try to solve a context problem with more prompting. Usually, the fix is not a smarter instruction. It is better source material.

Layer 3: Generation Should Create Options, Not Final Truth

Once strategy and inputs are in place, generation becomes genuinely powerful. An AI copy writer can create multiple hooks, headline families, CTA variations, email versions, offer framings, and angle-based rewrites much faster than a human can draft them one by one. That gives the team something extremely valuable: more strong options before publishing, not just more words.

This framing matters because it keeps expectations realistic. AI is excellent at expansion, recombination, reformatting, and pattern-based variation. It is far less reliable when you ask it to act like a source of original evidence, lived expertise, or verified market truth without giving it those materials first.

The operational win is obvious. Instead of spending most of your time producing version one, you can spend more of your time comparing versions three through ten. That is a much better use of human judgment.
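
To make the idea of comparing versions three through ten concrete, here is a minimal sketch of generation as a variation grid: one drafting instruction per combination of angle and awareness level. The angle names, awareness levels, and the `build_variant_requests` helper are hypothetical placeholders, and no model is called. The resulting instructions would be passed to whatever drafting tool the team already uses.

```python
from itertools import product

# Hypothetical dimensions to vary; replace with your own angles and levels.
ANGLES = ["cost savings", "speed", "risk reduction"]
AWARENESS_LEVELS = ["problem-aware", "solution-aware"]


def build_variant_requests(offer, angles=ANGLES, levels=AWARENESS_LEVELS):
    """Return one drafting instruction per (angle, awareness level) pair."""
    requests = []
    for angle, level in product(angles, levels):
        requests.append(
            f"Write a headline and CTA for '{offer}' "
            f"aimed at a {level} reader, leading with {angle}."
        )
    return requests


variant_requests = build_variant_requests("14-day free trial")
print(len(variant_requests))  # 3 angles x 2 levels = 6 instructions
```

Keeping the grid explicit makes it easier to see which angles were actually tested and which were never drafted.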

Layer 4: Human Review Is the Multiplier

The final layer is where professional implementation either works or falls apart. Human review is not there just to catch grammar issues. It is there to check whether the copy is true, sharp, differentiated, strategically aligned, and persuasive without drifting into overclaiming or bland filler.

That review layer is becoming more important, not less. Google’s public guidance on AI-generated content makes it clear that automation itself is not the issue. What remains a problem, under its search quality systems and spam policies, is manipulative, low-value, search-first content created primarily for ranking rather than for helping people. So the real job of a human editor is not to “humanize” a draft with random stylistic tweaks. It is to make sure the draft deserves to exist.

That is the core framework in one line: strategy gives the AI copy writer direction, inputs give it substance, generation gives it scale, and human review gives it credibility. Skip any one of those, and the whole system gets weaker fast.

Core Components of High-Performing AI Copy

The difference between mediocre AI output and strong AI-assisted copy usually comes down to a few core components working together. Most weak drafts do not fail because the model cannot form sentences. They fail because the system behind the prompt is missing clarity, evidence, structure, and editorial pressure.

A good AI copy writer setup needs real inputs, not vague enthusiasm. It needs a clear offer, a clear audience, a real point of view, and enough source material to keep the copy grounded in something specific. Once those pieces are in place, the draft becomes much easier to shape into something persuasive.

A Clear Conversion Goal

Every piece of copy needs one job. That sounds obvious, but a lot of teams still ask an AI copy writer to produce email copy, landing page copy, and brand messaging without deciding what action the reader should take next. When the goal is fuzzy, the copy usually becomes broad, safe, and forgettable.

That is why the first filter should always be conversion intent. Is the copy trying to get a click, a reply, a booked call, a trial signup, a purchase, or a deeper step in the funnel? Once that is defined, the language gets tighter, the CTA gets sharper, and the draft stops trying to do five jobs at once.

Strong Source Material

An AI copy writer becomes more useful when it has something real to work with. Product pages, founder notes, customer research, review mining, sales call transcripts, onboarding answers, support tickets, and offer breakdowns all make the output more specific. Without that layer, the model falls back on patterns it has seen before, and that is exactly how bland copy gets produced at scale.

This is also where implementation gets more serious. If your workflow pulls form responses from Fillout, customer context from Copper, scheduling data from Cal.com, or site content through Firecrawl, the writing system starts with better raw material. Better raw material usually beats more clever prompting.

A Defined Voice and Message Hierarchy

Voice is not just tone. It is the combination of vocabulary, rhythm, depth, confidence level, and messaging priorities that make copy feel like it came from one company and not another. If those rules are not defined, an AI copy writer may produce clean sentences that still sound generic.

The fix is simple, but not optional. Give the system brand examples, banned phrases, preferred phrase patterns, proof points, objection handling language, and message hierarchy. That way the model is not guessing what matters most. It is following a useful editorial frame.

Proof, Specificity, and Constraint

AI is good at making copy sound finished. That is exactly why it needs constraints. A strong AI copy writer workflow should force the draft to use approved claims, real features, specific differentiators, and actual customer language wherever possible.

Constraint improves output because it removes the temptation to let the model improvise. When you define what the copy must include, what it must avoid, and what proof it can reference, the result becomes more credible and easier to approve. In other words, quality usually goes up when freedom goes down.
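
As an illustration of what defining "must include" and "must avoid" can look like in practice, the sketch below checks a draft against two phrase lists. The function name, the example phrases, and the simple case-insensitive substring matching are all assumptions made for the example. A real setup would likely need looser matching and a human reading the result.

```python
def check_constraints(draft, must_include, must_avoid):
    """Report required phrases that are missing and banned phrases that appear."""
    text = draft.lower()
    missing = [p for p in must_include if p.lower() not in text]
    banned_found = [p for p in must_avoid if p.lower() in text]
    return {"missing": missing, "banned_found": banned_found}


# Hypothetical draft and phrase lists for illustration only.
draft = "Our game-changing platform cuts onboarding time with a 14-day free trial."
report = check_constraints(
    draft,
    must_include=["14-day free trial", "no credit card required"],
    must_avoid=["game-changing", "revolutionary"],
)
print(report)
```

A check like this does not judge whether the copy is good. It only catches drafts that ignore the brief before a reviewer spends time on them.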

Where AI Copy Writers Work Best

AI writing is not equally useful across every format. Some jobs benefit massively from speed and variation, while others still depend heavily on original reporting, expert judgment, and nuanced narrative structure. The smart move is not to use an AI copy writer everywhere. The smart move is to use it where leverage is highest.

This matters because misuse creates false expectations. If a team expects AI to replace strategic thinking or produce deeply original insight from nowhere, disappointment is almost guaranteed. If the team uses it to accelerate structured tasks, the value becomes obvious fast.

Short-Form Conversion Copy

This is one of the strongest use cases. Headlines, subheads, CTA options, ad variants, subject lines, preview text, SMS copy, and retargeting angles all benefit from speed, contrast, and iteration. An AI copy writer can generate a much larger testing pool than a human usually would in the same amount of time.

That matters because performance copy often improves through comparison rather than first-draft brilliance. The more credible options you can generate and refine, the better your odds of finding the angle that actually converts. AI is extremely useful in that environment.

Email Sequences and Lifecycle Messaging

Email is another strong fit because the structure is usually clear. Welcome sequences, abandoned cart flows, lead nurture, webinar reminders, reactivation campaigns, and post-purchase follow-up all follow a strategic pattern that AI can help scale. The model is especially useful when the team already understands the sequence goal and just needs faster production.

This is where platforms matter too. If the execution layer lives inside tools like Brevo, Moosend, or ManyChat, the AI copy writer becomes part of a live messaging system instead of a disconnected drafting toy. That is where the time savings start compounding.

Landing Pages and Offer Pages

Landing pages are a great fit when there is already a real offer and real proof on the table. AI can help assemble structure, section drafts, headline families, objection handling blocks, FAQ candidates, and alternate positioning angles. It can also repurpose the same offer for different traffic sources or awareness levels without forcing the team to rebuild the page from scratch every time.

The key is that the page still needs strategic control. AI can help draft the bones, but the business still has to decide what promise leads, what proof matters most, and where the CTA should carry the weight. Used that way, an AI copy writer becomes a force multiplier for conversion pages rather than a shortcut to cookie-cutter templates.

That is also why builders like Replo, ClickFunnels, Systeme.io, and GoHighLevel naturally enter the conversation. Once copy production and page deployment are connected, testing becomes much easier to sustain.

Professional Implementation and Workflow Design

This is the point where an AI copy writer either becomes useful infrastructure or stays a novelty. Casual use is easy. Professional implementation is harder because it requires process, roles, templates, approvals, and a clear path from input to published asset.

The good news is that the workflow does not need to be complicated. It just needs to be consistent enough that the team can repeat it without reinventing the process every time. Once that happens, the quality becomes more stable and the speed gains start becoming real.

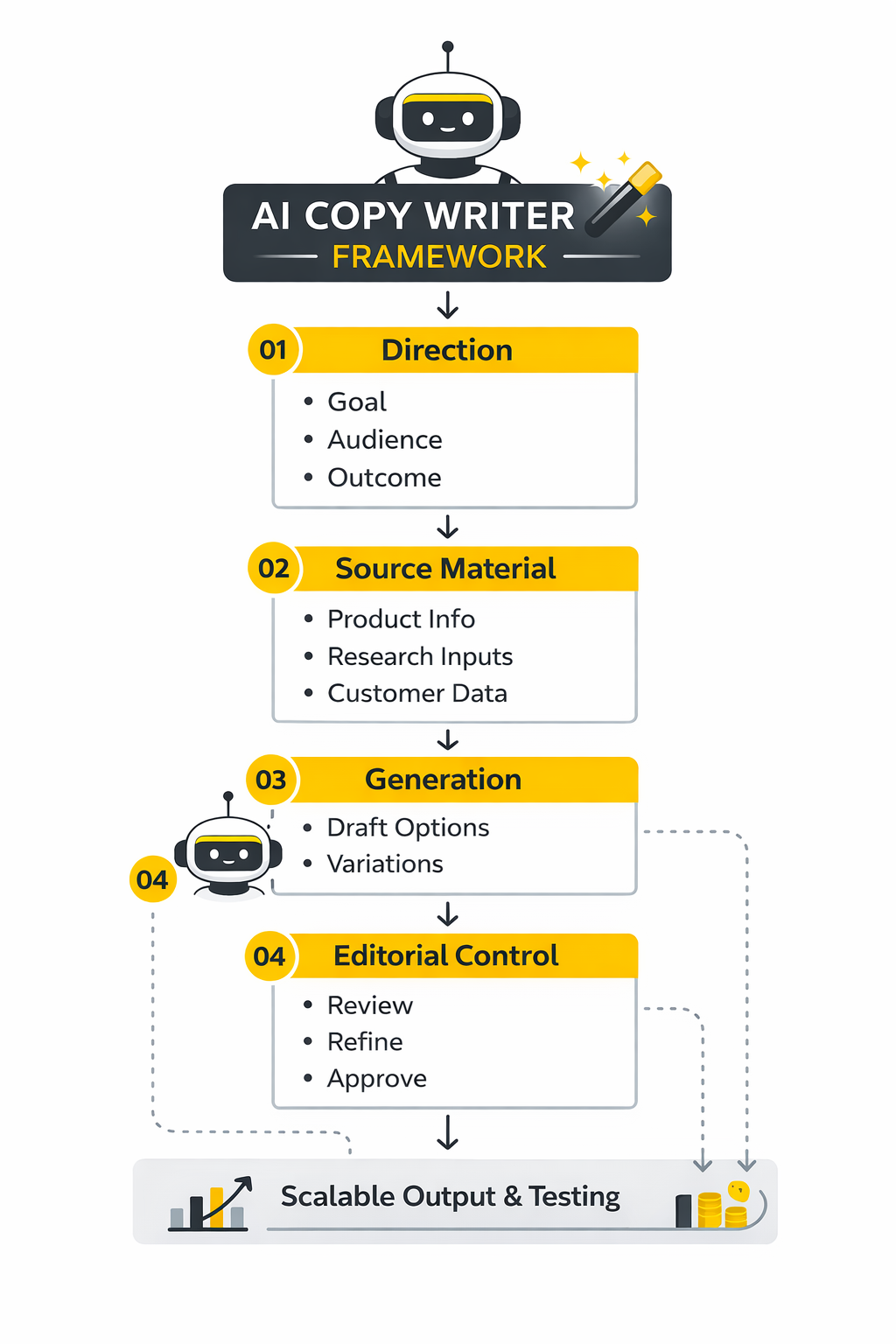

A Practical Step-by-Step Workflow

The cleanest implementation process is to move through the same sequence every time. First gather the source material. Then define the copy brief. Then generate multiple structured drafts. Then review, trim, fact-check, and adapt the winner to the final channel.

A working version of that process usually looks like this:

- Collect the inputs. Gather offer details, audience notes, objections, proof points, customer language, and any mandatory claims or constraints. This stage matters more than most people think, because weak input quality creates weak draft quality. If the foundation is vague, the AI copy writer will sound polished but hollow.
- Build a tight brief. Write down the channel, goal, audience awareness level, desired action, tone boundaries, and what the draft must include. This gives the system a frame. It also makes review much easier because the team can judge the copy against a real brief instead of against instinct alone.
- Generate multiple angles. Do not ask for one draft and hope it is perfect. Ask for several versions built around different mechanisms, objections, hooks, or levels of intensity. That makes the AI copy writer useful as a variation engine, which is one of its strongest jobs.
- Score the outputs. Review each draft for clarity, specificity, believability, brand fit, and conversion strength. Cut anything that sounds inflated, repetitive, or suspiciously generic. This stage is where human judgment earns its keep.
- Rewrite with intent. Take the best parts of the strongest draft and rewrite aggressively where needed. Add sharper transitions, stronger proof, better examples, and cleaner CTA logic. At this point, the copy should stop sounding like it was “generated” and start sounding like it was deliberately built.
- Deploy into the actual system. Move the approved copy into the final workflow, whether that means email automation, page builders, CRM sequences, chatbot flows, or social scheduling. Tools like Buffer, Flick Social, Chatbase, and GoHighLevel become useful here because the copy is no longer sitting in a doc. It is being used inside a live system.
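
The brief and generation steps above can be sketched as a small data structure that turns the brief fields into a drafting instruction. This is only one possible shape: `CopyBrief`, its field names, and the prompt wording are hypothetical, and the code assembles text without calling any model.

```python
from dataclasses import dataclass, field


@dataclass
class CopyBrief:
    """Hypothetical brief mirroring the fields named in the workflow."""
    channel: str
    goal: str
    awareness_level: str
    desired_action: str
    tone_boundaries: list = field(default_factory=list)
    must_include: list = field(default_factory=list)

    def to_prompt(self, source_notes, n_variants=3):
        """Assemble a drafting instruction from the brief and source material."""
        lines = [
            f"Channel: {self.channel}",
            f"Goal: {self.goal}",
            f"Audience awareness: {self.awareness_level}",
            f"Desired action: {self.desired_action}",
            "Tone boundaries: " + "; ".join(self.tone_boundaries),
            "Must include: " + "; ".join(self.must_include),
            f"Source material:\n{source_notes}",
            f"Produce {n_variants} distinct drafts, each built on a different angle.",
        ]
        return "\n".join(lines)


# Example values are invented for illustration.
brief = CopyBrief(
    channel="email",
    goal="book a demo call",
    awareness_level="solution-aware",
    desired_action="click the scheduling link",
    tone_boundaries=["plain language", "no hype"],
    must_include=["onboarding takes under a week"],
)
prompt = brief.to_prompt("Customer quote: 'We were live in four days.'")
print(prompt)
```

Because the same structure is filled in every time, reviewers can compare a draft against the brief that produced it rather than against memory.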

The Team Setup That Usually Works Best

Professional use does not require a massive content team. It usually works best when one person owns strategy, one person owns execution, and one person owns approval, even if those roles sit with the same operator in a smaller business. The point is not bureaucracy. The point is clarity.

Without role clarity, AI workflows create weird gaps. One person assumes another person checked the claims, someone else assumes the brand voice is fine, and the final asset goes live with avoidable weaknesses. Even a simple review chain makes a major difference.

What to Standardize First

Do not try to standardize everything on day one. Start with the pieces that create the biggest quality swings: the brief template, the source-material template, the prompt structure, the review checklist, and the final approval standard. Once those are consistent, the whole AI copy writer system gets more predictable.

This is also the stage where supporting tools can help. Guideless, Anything.com, Wispr Flow, and Dub can make execution smoother depending on how your workflow is set up. But the principle stays the same: system first, tool second.

The Goal Is Not More Content

This matters more than people admit. The point of an AI copy writer is not to flood channels with more words. The point is to increase the speed and consistency of producing copy that deserves to be published.

When the workflow is built well, the gains are obvious. You spend less time staring at a blank page, less time rebuilding standard assets from scratch, and more time improving message quality, testing angles, and tightening the path to conversion. That is what professional implementation is supposed to do.

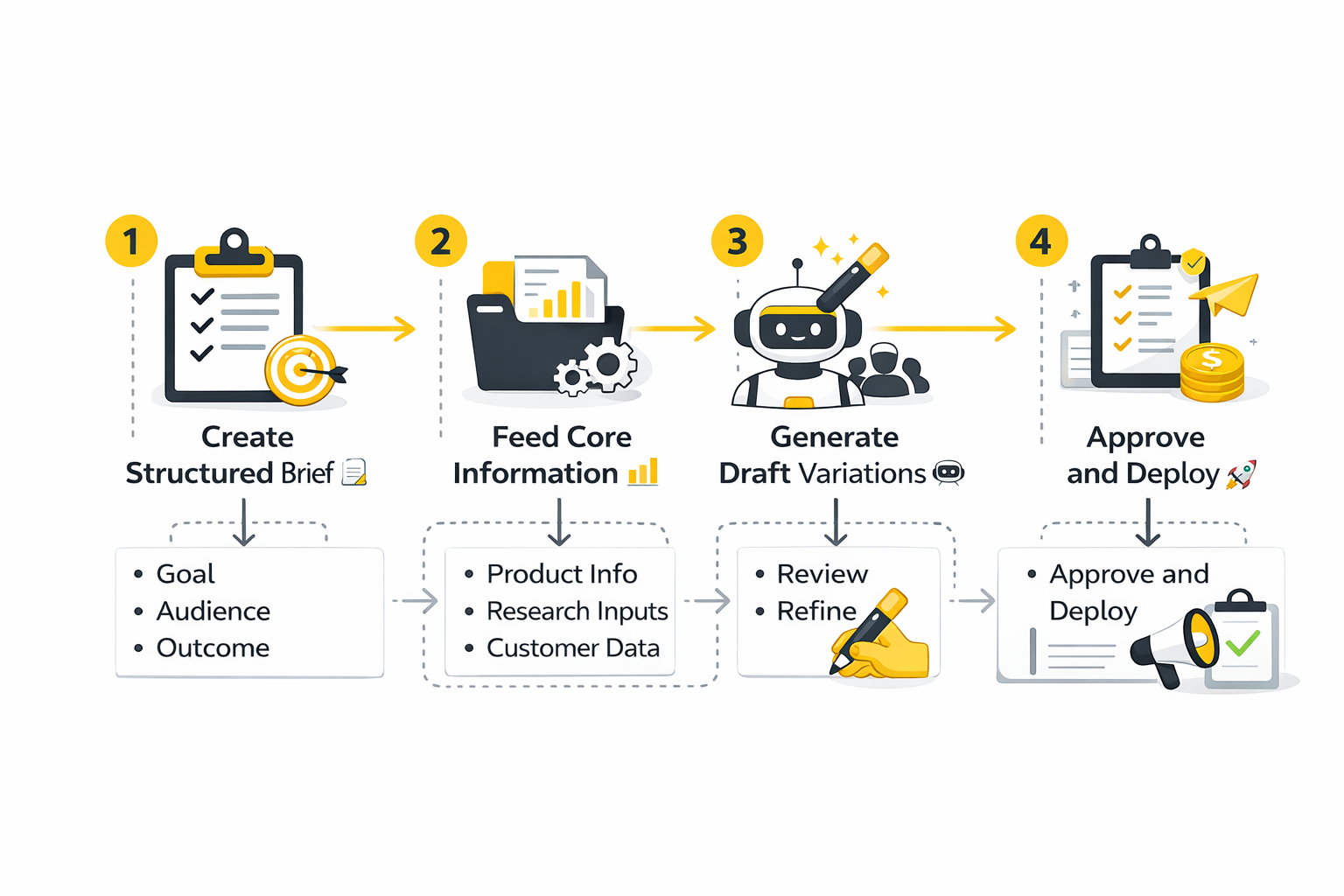

Measuring What an AI Copy Writer Is Actually Improving

Once an AI copy writer is part of the workflow, the next mistake is obvious: teams start measuring the wrong thing. They celebrate output volume, faster drafting, or the number of assets produced, even when those gains do not translate into stronger business results. That is not a measurement system. That is activity tracking dressed up as progress.

The right approach is to measure the workflow in layers. First check whether AI is making production faster. Then check whether it is making performance stronger. Then check whether quality control is holding up as volume increases. If you do not evaluate all three layers together, you can easily mistake more content for better content.

The broader market data makes that distinction important. Ahrefs found that 87% of content marketers use AI to help create content and teams using AI publish 42% more content each month. That is useful, but it is only a starting signal. More output matters only if that extra output improves conversion, engagement, pipeline, retention, or some other result that the business actually cares about.

The First Benchmark Is Speed, but Speed Is Not the Finish Line

Speed is the easiest metric to notice because it changes fast. Drafting time drops, ideation cycles shorten, and asset production becomes less painful. HubSpot’s current AI marketing reporting shows that content creation is the most common use case for AI in content marketing at 55%, which tells you exactly where teams expect efficiency gains first.

That metric matters because time saved can be reallocated toward better work. If an AI copy writer reduces time spent on blank-page drafting, the team can use that margin for research, editing, testing, and message refinement. But speed becomes dangerous when people treat it as proof of success on its own.

This is where interpretation matters. Faster copy production is a positive signal only when it creates room for more thoughtful decisions or more profitable experiments. If speed simply leads to more rushed publishing, then the metric is real but the outcome is backward.

Quality Control Is a Performance Metric, Not an Afterthought

A serious analytics system for AI copy has to include review discipline. This is not just a governance issue. It is a results issue, because weak review allows generic, inaccurate, or overclaimed copy to slip into production, which damages trust and usually hurts performance anyway.

Current survey data makes that gap impossible to ignore. McKinsey found that only 27% of organizations using gen AI say all AI-created content is reviewed before use, while a similar share say 20% or less is checked before publication. That means a lot of businesses are still treating output review as optional, even though the review step is one of the strongest predictors of whether AI helps or harms the final asset.

The practical takeaway is simple. If you use an AI copy writer, review rate should sit next to production rate on the same dashboard. A growing content engine with weak review discipline is not scaling well. It is scaling risk.
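
A minimal sketch of putting those two numbers side by side, assuming each asset is logged with `published` and `reviewed` flags. The record shape and the `review_coverage` name are hypothetical, not taken from any particular tool.

```python
def review_coverage(assets):
    """Share of published assets that were reviewed before going live."""
    published = [a for a in assets if a["published"]]
    if not published:
        return 0.0
    reviewed = sum(1 for a in published if a["reviewed"])
    return reviewed / len(published)


# Invented log entries for illustration.
assets = [
    {"id": "email-1", "published": True, "reviewed": True},
    {"id": "ad-1", "published": True, "reviewed": False},
    {"id": "lp-1", "published": True, "reviewed": True},
    {"id": "ad-2", "published": False, "reviewed": False},
]
published_count = sum(1 for a in assets if a["published"])
coverage = review_coverage(assets)
print(f"published: {published_count}, review coverage: {coverage:.0%}")
```

Tracking the ratio rather than the raw count of reviews keeps the number honest as volume grows.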

Editing Intensity Tells You Whether the Input System Is Working

One of the most useful signals is how much rewriting the drafts still need before they are ready. If your team is constantly doing deep rewrites, that usually means the system upstream is weak. The brief may be unclear, the source material may be thin, or the voice rules may be too vague for the model to produce usable output consistently.

That is why HubSpot’s 2025 survey data is so revealing. Only 7% of marketers say they use AI to create entire pieces without editing, while 56% significantly revise or rewrite AI-generated text and 38% make minor tweaks. Those numbers are not a problem in themselves. In fact, they are a healthy sign that professionals are still editing. What matters is how you interpret them.

If most of your drafts need heavy reconstruction, the answer is not necessarily “AI is bad.” The answer is often that the implementation is shallow. A better brief, stronger inputs, approved proof points, and tighter voice controls can reduce editing drag without lowering standards.
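
One rough way to put a number on editing drag is to compare the AI draft with the version that was finally published. The sketch below uses Python's standard `difflib.SequenceMatcher` on word lists. Treating one minus the similarity ratio as "edit intensity" is an assumption made for illustration, not an established metric, and it says nothing about whether the edits improved the copy.

```python
from difflib import SequenceMatcher


def edit_intensity(ai_draft, published):
    """Return 0.0 for an untouched draft, up to 1.0 for a complete rewrite."""
    draft_words = ai_draft.split()
    final_words = published.split()
    matcher = SequenceMatcher(None, draft_words, final_words, autojunk=False)
    return 1.0 - matcher.ratio()


# Invented example texts.
ai_draft = "Save time with our powerful platform built for modern teams"
published = "Save four hours a week with a platform built for support teams"
print(round(edit_intensity(ai_draft, published), 2))
```

Averaged per asset type over a month, a figure like this can show whether brief or input changes are actually reducing rewrite effort.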

Benchmarks That Actually Matter

A useful benchmark has to drive action. It should tell you whether to keep the workflow as it is, tighten it, or redesign it. That is why vanity metrics are such a trap in AI-assisted writing. They sound impressive but do not show whether the copy is doing its job.

The most valuable benchmarks are tied to operational leverage and commercial impact. In other words, you want to know whether the AI copy writer helps you ship more strong assets, test more meaningful variations, and improve the economics of content and campaign production. Everything else is secondary.

Production Benchmarks

Production benchmarks tell you whether the machine is moving faster. These usually include time to first draft, time to approved draft, number of variants produced per campaign, and number of assets published per month. Ahrefs’ finding that AI-assisted teams publish 42% more content each month is a useful benchmark because it shows the scale effect clearly.

But the action this should drive is not “publish 42% more content because other teams do.” The action should be to ask whether more variants are leading to better experiments and better coverage of key channels. Volume is valuable when it expands intelligent testing, not when it expands noise.

This is especially important for teams using builders and automation layers like Replo, ClickFunnels, Systeme.io, and GoHighLevel. Once content production and deployment are connected, production benchmarks become more meaningful because the system can actually act on the extra output.

Performance Benchmarks

Performance benchmarks tell you whether the extra output is better, not just faster. These include click-through rate, reply rate, conversion rate, lead quality, assisted revenue, trial starts, booked calls, or retained engagement depending on the channel. This is where an AI copy writer has to prove it is creating stronger messages, not just more messages.

This layer also explains why AI can improve ROI without guaranteeing excellence on every draft. Semrush reports that 68% of businesses say AI increases their content marketing ROI and 65% say it improves SEO results. Those are encouraging signals, but they should be read carefully. They do not mean every AI-assisted asset performs better. They mean the overall system can become more efficient and productive when AI is used well.

That should push teams toward controlled comparison. Measure AI-assisted assets against your previous manual baseline. Compare variant performance, approval time, and downstream conversion, then keep the parts of the workflow that improve the economics of output. If the data does not improve, the process needs work.
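
A controlled comparison can be as simple as computing the relative lift of an AI-assisted variant over the manual baseline, plus a standard two-proportion z-score as a rough check on whether the difference could be noise. The function name and the sample counts below are invented, and the sketch assumes both groups saw comparable traffic and that the baseline has at least one conversion.

```python
from math import sqrt


def compare_to_baseline(base_conv, base_n, test_conv, test_n):
    """Return (relative lift, z-score) for a test variant against a baseline."""
    p_base = base_conv / base_n
    p_test = test_conv / test_n
    lift = (p_test - p_base) / p_base
    # Pooled conversion rate under the assumption of no real difference.
    pooled = (base_conv + test_conv) / (base_n + test_n)
    std_err = sqrt(pooled * (1 - pooled) * (1 / base_n + 1 / test_n))
    z_score = (p_test - p_base) / std_err
    return lift, z_score


# Hypothetical counts: 40 of 1000 visitors converted on the manual page,
# 55 of 1000 on the AI-assisted variant.
lift, z_score = compare_to_baseline(40, 1000, 55, 1000)
print(f"relative lift: {lift:.1%}, z-score: {z_score:.2f}")
```

As a rule of thumb, an absolute z-score below about 1.96 means the difference is not significant at the conventional 95% level, so a large-looking lift on a small sample should be treated with caution.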

Trust and Quality Benchmarks

This layer is less glamorous, but it is where long-term value is protected. Trust and quality benchmarks include factual accuracy, policy compliance, brand consistency, uniqueness, and editorial approval rate. These are harder to summarize in one flashy number, but they are the guardrails that keep the system usable over time.

Content Marketing Institute’s latest statistics show that only 17% of B2B marketers rate AI-generated content quality as excellent or very good, while 44% rate it as good and 35% rate it as fair. That is a very useful benchmark because it tells you where market maturity really is. The average outcome is not magic. It is decent. That means the advantage comes from process discipline, not merely from using the tool.

The action here is straightforward. Build a scorecard for truthfulness, specificity, voice alignment, and usefulness before an asset goes live. If those scores stay flat or fall while production volume rises, the AI copy writer is creating operational strain rather than strategic advantage.
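
A scorecard along those lines could look like the sketch below. The 1 to 5 scale, the minimum passing score of 3, and the rule that any failing criterion blocks approval are assumptions chosen for the example. Teams would set their own scale and thresholds.

```python
CRITERIA = ("truthfulness", "specificity", "voice_alignment", "usefulness")


def score_asset(scores, minimum=3):
    """Average the reviewer ratings and flag any criterion below the minimum."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"missing criteria: {missing}")
    average = sum(scores[c] for c in CRITERIA) / len(CRITERIA)
    failing = [c for c in CRITERIA if scores[c] < minimum]
    return {"average": average, "failing": failing, "approved": not failing}


# Invented reviewer ratings on an assumed 1-5 scale.
result = score_asset(
    {"truthfulness": 5, "specificity": 2, "voice_alignment": 4, "usefulness": 4}
)
print(result)
```

Blocking on the weakest criterion, rather than on the average, prevents a well-written but inaccurate draft from passing on style alone.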

Building an Analytics System Around AI Copy

A strong analytics setup does not need to be complicated, but it does need to be intentional. Most teams only need a simple reporting layer that connects production metrics, editorial metrics, and business metrics in one place. Once those numbers sit together, it becomes much easier to tell whether the system is compounding value or quietly creating mess.

The goal is not to trap the team in reporting overhead. The goal is to make good decisions faster. When the signals are clear, the AI copy writer stops being a black box and starts becoming a measurable production system.

The Three-Dashboard Model

The cleanest setup is to use three dashboards. The first tracks workflow efficiency, including draft turnaround time, edit time, and publishing velocity. The second tracks copy performance, including CTR, conversion rate, reply rate, landing page completion, or pipeline influence depending on the asset type. The third tracks quality and compliance, including review coverage, factual error rate, approval rate, and consistency with brand standards.

This structure matters because it prevents false wins. If production is up, performance is flat, and quality is down, the AI copy writer is not improving the system in a meaningful way. If production is up, performance is up, and quality is stable, then the implementation is doing exactly what it should.
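
The two cases described here can be written down as a small lookup so the three dashboards are always read together. The labels `up`, `flat`, and `down`, the function name, and the catch-all "mixed signals" result for every other combination are assumptions added for the sketch.

```python
def dashboard_verdict(production, performance, quality):
    """Combine the three dashboard trends; each is 'up', 'flat', or 'down'."""
    valid = {"up", "flat", "down"}
    for value in (production, performance, quality):
        if value not in valid:
            raise ValueError(f"expected one of {sorted(valid)}, got {value!r}")
    if production == "up" and performance == "up" and quality != "down":
        return "working as intended"
    if production == "up" and performance != "up" and quality == "down":
        return "false win"
    # Any other combination needs a closer look (assumed label).
    return "mixed signals"


print(dashboard_verdict("up", "flat", "down"))  # false win
print(dashboard_verdict("up", "up", "flat"))    # working as intended
```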

Teams that already operate inside execution tools can make this even cleaner. Buffer, Brevo, ManyChat, and GoHighLevel can surface channel-level performance data directly where the copy is deployed. That makes measurement more practical, which usually means it actually gets done.

What the Data Should Drive Next

The best measurement systems do not just describe what happened. They decide what to do next. If edit intensity is too high, strengthen the brief and input materials. If output volume is up but conversion is flat, improve angle selection and testing discipline. If performance improves but review coverage drops, protect the quality layer before scaling further.
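
Those three rules translate directly into a short decision helper. The parameter names and the 0.5 threshold for "too high" edit intensity are assumptions for illustration. The action strings simply restate the guidance above.

```python
def next_actions(edit_intensity, volume_up, conversion_up,
                 review_coverage_down, edit_threshold=0.5):
    """Map measurement signals to the follow-up actions they suggest."""
    actions = []
    if edit_intensity > edit_threshold:
        actions.append("strengthen the brief and input materials")
    if volume_up and not conversion_up:
        actions.append("improve angle selection and testing discipline")
    if conversion_up and review_coverage_down:
        actions.append("protect the quality layer before scaling further")
    return actions


# Hypothetical monthly readings.
actions = next_actions(
    edit_intensity=0.7,
    volume_up=True,
    conversion_up=False,
    review_coverage_down=False,
)
print(actions)
```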

This is also where Google’s guidance stays relevant. Search systems are designed around helpful, reliable, people-first content, and Google has also made clear that using automation, including AI, is not inherently against its guidelines when the goal is producing helpful content rather than manipulating rankings. So if search traffic or organic conversions flatten after a big increase in AI-assisted production, the likely lesson is not “Google hates AI.” The likelier lesson is that quality signals are not keeping pace with output.

That is the real job of analytics in an AI copy writer workflow. It should tell you whether speed is turning into leverage, whether leverage is turning into results, and whether results are being achieved without eroding trust. If the numbers cannot answer those three questions, the measurement system still is not good enough.

Scaling an AI Copy Writer Without Losing Quality

Once the workflow works for one person or one team, the real challenge begins. A small operation can often get away with informal review, loose prompts, and memory-based brand control because the same people are touching every asset. As soon as more channels, more contributors, or more clients enter the system, that approach starts breaking down fast.

That is why scaling an ai copy writer is not mainly a writing problem. It is a systems problem. The bigger the operation gets, the more important it becomes to standardize briefs, source inputs, approval logic, and brand rules so quality does not drift while output rises.

Scale Exposes Weak Process Before It Creates Strong Leverage

This is one of the harsh truths in AI-assisted content. If the process is messy, scaling does not fix it. It makes the mess more efficient. McKinsey’s 2025 survey found that inaccuracy is the AI-related risk organizations report most often, which is exactly what you would expect when more teams produce more assets faster than their review systems can reliably handle.

That should change how teams think about growth. The goal is not simply to let an ai copy writer produce more landing pages, more campaigns, and more nurture sequences. The goal is to build a controlled operating model where more output does not mean more factual drift, more voice inconsistency, or more compliance exposure.

The practical implication is simple. If you cannot maintain standards across ten assets, you will not magically maintain them across one hundred. Fix the workflow before you scale the workflow.

Brand Consistency Gets Harder Before It Gets Easier

A lot of teams assume AI will automatically solve brand consistency because the model can be trained or instructed on tone. In practice, the opposite is often true at first. When multiple people use the same ai copy writer with slightly different briefs, source files, or prompt habits, the output starts sounding similar in sentence rhythm but inconsistent in message hierarchy, proof selection, and offer emphasis.

That is where content operations and brand systems start mattering more than clever prompts. Adobe’s enterprise content reporting frames generative AI as a way to deliver consistent personalized experiences at speed and scale, but that upside only appears when the organization already has strong content governance. Without that, AI tends to scale inconsistency just as easily as it scales production.

So the expert move is not to ask whether the ai copy writer can mimic your tone. The better question is whether your team has documented what your tone, messaging priorities, claims, proof standards, and channel rules actually are. If those pieces are vague, scale will expose the gap immediately.

The Strategic Tradeoffs Most Teams Underestimate

AI copy is full of real upside, but mature teams look at the tradeoffs just as hard as the benefits. That is where better decisions get made. An ai copy writer can save time, increase variation, and lower production friction, but it also introduces new dependencies, new review burdens, and new risks around sameness, accuracy, and over-automation.

None of that means the technology is a bad fit. It means the tool should be handled like infrastructure instead of hype. The businesses that win with it are usually the ones that stay clear-eyed about what it improves and what it can quietly weaken.

Efficiency Versus Originality

This is probably the most important tradeoff of all. An ai copy writer is excellent at patterns, which is precisely why it can help you move fast. But pattern fluency is not the same as original thinking, original reporting, or original experience. If the workflow leans on AI to supply the core ideas as well as the drafts, the brand starts sounding competent but interchangeable.

Google’s search guidance still centers on helpful, reliable, people-first content, and its guidance on AI-generated content makes clear that the issue is not the use of automation itself but whether the content is actually useful rather than produced mainly to manipulate rankings at scale. That matters because sameness is not just a branding problem. It is a discoverability problem too.

The action this should drive is not to avoid AI. It is to pair AI speed with human source material, point of view, and editorial sharpness. Let the model help build the asset, but do not let it define what is worth saying.

Speed Versus Oversight

The second tradeoff is more operational. Speed feels great in the moment because it reduces friction. But every time an ai copy writer makes production easier, it also creates pressure to approve more assets in less time. That can quietly erode the review layer that makes the whole system trustworthy.

McKinsey’s March 2025 survey found little change since early 2024 in the share of organizations reporting negative consequences from gen AI use, even as adoption keeps spreading. That is a useful warning sign. It suggests that many organizations are still expanding usage faster than they are maturing their controls.

So the smart move is to treat review capacity as part of scale capacity. If output doubles, oversight cannot stay flat forever. At some point, the ai copy writer becomes only as strong as the human system behind it.
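The arithmetic behind that point is worth seeing once. The sketch below estimates how many reviewers a given output level requires. Every number in it (assets per week, minutes per review, hours each reviewer can give) is a hypothetical input, not a benchmark.

```python
import math


def reviewers_needed(
    assets_per_week: int,
    review_minutes_per_asset: float,
    reviewer_hours_per_week: float,
) -> int:
    """Round up the reviewer count needed to keep review coverage at 100%."""
    total_review_hours = assets_per_week * review_minutes_per_asset / 60
    return math.ceil(total_review_hours / reviewer_hours_per_week)


# Hypothetical team: 30-minute reviews, each reviewer has 10 hours a week for review.
current = reviewers_needed(40, 30, 10)
doubled = reviewers_needed(80, 30, 10)
print(f"40 assets/week needs {current} reviewers; 80 assets/week needs {doubled}")
```

If output doubles and headcount does not, the only variables left are minutes per review and coverage. Cutting either one is how the review layer quietly erodes.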

Lower Production Cost Versus Higher System Complexity

There is also a less obvious tradeoff hiding under the surface. AI can reduce the cost of generating drafts, variants, and campaign assets, but the surrounding system often becomes more complex. Teams need better templates, better asset libraries, better approval flows, and sometimes better integrations between forms, CRMs, builders, and deployment tools.

That is not a reason to avoid the shift. It is just a reason to budget for the full system, not just the model. Once an ai copy writer becomes part of a live stack with GoHighLevel, Brevo, ManyChat, Replo, or Buffer, you are not just running a writing tool anymore. You are running a content production system.

That system can be incredibly powerful. It just deserves to be designed like a system.

Risk Management for Serious Teams

Once AI-assisted copy becomes part of actual revenue operations, risk management stops being a side topic. It becomes part of performance. Inaccurate claims, off-brand promises, regulatory mistakes, and weak sourcing can create direct commercial damage long before they create abstract “AI concerns.”

This is one reason enterprise adoption has become more sober. Deloitte’s enterprise gen AI reporting emphasizes that organizations are moving from experimentation into decisions about governance, controls, and measurable business outcomes rather than curiosity alone. That shift is healthy. It means the conversation is maturing.

The Main Risks Are Usually Boring, Not Futuristic

Most of the time, the risks around an ai copy writer are not dramatic science-fiction failures. They are very normal business failures happening faster. A pricing detail gets stated incorrectly. A brand claim gets stretched too far. A customer segment gets oversimplified. A regulated category gets copy that sounds persuasive but crosses a line.

That is why the best safeguards are usually boring too. Approved claims libraries, source-of-truth documents, review checklists, required proof links, and escalation rules matter more than theatrical “AI policies” that nobody follows. The teams that stay safe usually stay simple.

This also reinforces an earlier point from the data. If inaccuracy is the most commonly reported AI risk in current McKinsey research, then risk control for an ai copy writer should begin with truthfulness and source discipline, not abstract philosophical debates.

The Highest-Risk Moments Usually Happen at the Edges

An ai copy writer is often safest in the middle of a known workflow where the offer, audience, and guardrails are already established. Risk tends to rise at the edges: new markets, sensitive claims, regulated verticals, major pricing changes, crisis communications, or content that tries to sound more authoritative than the source material actually supports.

This is where senior judgment still matters enormously. AI can help draft, reorganize, and sharpen. It should not be the final authority on what the company can responsibly promise. Mature teams know the difference.

The simple rule is this: the further the copy moves from documented truth and into inference, the more human scrutiny it needs. That principle sounds basic, but it prevents a lot of expensive mistakes.
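One way to make that rule operational is to route each asset to a review level based on how much of it rests on documented truth. The sketch below is an illustration only. The three review levels, the `EDGE_CONTEXTS` labels, and the idea of checking claims against an approved claims library by exact match are all assumptions, and a real system would need fuzzier matching.

```python
# Hypothetical labels for the "edge" situations listed in the text.
EDGE_CONTEXTS = frozenset({
    "new market",
    "sensitive claim",
    "regulated vertical",
    "pricing change",
    "crisis communication",
})


def review_level(
    claims: list[str],
    approved_claims: set[str],
    contexts: list[str],
) -> tuple[str, list[str]]:
    """Return an assumed review level plus any claims missing from the approved library."""
    unsupported = [claim for claim in claims if claim not in approved_claims]
    at_edge = bool(EDGE_CONTEXTS & set(contexts))

    if at_edge:
        # Edge cases go to senior judgment regardless of claim coverage.
        return "senior sign-off", unsupported
    if unsupported:
        # Inference beyond documented truth gets a dedicated fact check.
        return "editor fact-check", unsupported
    return "standard review", unsupported


# Example with made-up claims: one documented, one inferred by the model.
approved = {"30-day free trial"}
level, flagged = review_level(
    claims=["30-day free trial", "fastest setup in the industry"],
    approved_claims=approved,
    contexts=[],
)
print(level, flagged)
```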

Expert-Level Guidance for Getting More From the System

By this stage, the biggest gains usually do not come from finding a “better prompt.” They come from improving the structure around the ai copy writer so the model consistently starts from stronger material and gets evaluated against sharper standards. That is what separates surface-level usage from real operational advantage.

This is also where experienced teams become less impressed by raw generation and more focused on orchestration. They care about how information enters the system, how drafts are routed, how approvals are handled, and how performance data loops back into the next iteration. That is a much better place to operate from.

Build Around Reusable Assets, Not One-Off Prompts

One-off prompting is fine for exploration. It is weak for scale. The better model is to create reusable components: brief templates, offer templates, voice guides, objection banks, CTA libraries, approved proof modules, and channel-specific frameworks. That way the ai copy writer is not asked to improvise its way through every new asset.

This approach also makes iteration smarter. When performance improves, you can trace which input structures helped. When quality falls, you can see which part of the system failed. In other words, reusable assets make the workflow more teachable and more debuggable.
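The reusable components described above can be sketched as structured data that gets assembled into a prompt, rather than prose retyped for each asset. This is a minimal illustration, not a recommended schema. The `Brief` fields, the `build_prompt` function, and the layout of the assembled text are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Brief:
    """A reusable brief template (hypothetical fields)."""
    audience: str
    offer: str
    goal: str
    channel: str


def build_prompt(brief: Brief, voice_guide: str, proof_modules: list[str], cta: str) -> str:
    """Assemble a prompt from stored components so every asset starts from the same inputs."""
    proof_lines = "\n".join(f"- {proof}" for proof in proof_modules)
    return (
        f"Channel: {brief.channel}\n"
        f"Audience: {brief.audience}\n"
        f"Offer: {brief.offer}\n"
        f"Goal: {brief.goal}\n"
        f"Voice: {voice_guide}\n"
        f"Use only these approved proof points:\n{proof_lines}\n"
        f"Call to action: {cta}"
    )


# Example with placeholder content; real values would come from the team's libraries.
prompt = build_prompt(
    Brief(
        audience="operations managers at mid-size agencies",
        offer="14-day pilot",
        goal="book a demo",
        channel="welcome email",
    ),
    voice_guide="plain, direct, no hype",
    proof_modules=["<approved proof point 1>", "<approved proof point 2>"],
    cta="Book a 20-minute walkthrough",
)
print(prompt)
```

Because each component is stored separately, a change in performance can be traced to the brief, the proof modules, or the voice guide instead of to an opaque block of prompt text.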

That is why supporting infrastructure matters. Tools like Fillout, Copper, Firecrawl, Wispr Flow, and Dub can matter far more than people expect. Better inputs and cleaner handoffs often create more value than another layer of prompt cleverness.

Treat Human Editors as Performance Multipliers

A common mistake is to think the human role shrinks as the ai copy writer gets better. In practice, the value of strong human editors often goes up. When the machine handles first-draft labor, human attention can move toward what actually drives quality: argument strength, proof selection, emotional precision, structural tightening, and conversion logic.

That is a better use of talent. It also produces a healthier operating model. The editor is no longer just cleaning up rough grammar. The editor is deciding what deserves to survive.

This is the mindset that usually produces the best long-term outcome. Use AI to reduce friction. Use people to raise standards. When those roles are clear, the system tends to get stronger with scale instead of weaker.

Know When Not to Use an AI Copy Writer

This might be the most advanced advice in the whole article. Sometimes the highest-leverage move is not using the tool at all. If the asset depends on first-hand expertise, sensitive nuance, legal precision, executive judgment, or genuinely original reporting, a human-first process is still the better starting point.

That is not a limitation to be embarrassed about. It is a sign of maturity. The best teams do not force an ai copy writer into every job just because it is available.

They use it where it creates leverage, avoid it where it creates distortion, and keep refining the system where the two overlap. That is the difference between using AI because it is fashionable and using it because it is genuinely useful.

FAQ for the Complete Guide

What is an ai copy writer, really?

An ai copy writer is software that helps generate, expand, rewrite, structure, or optimize marketing copy using large language models. In practice, that can mean headlines, ad copy, emails, landing page sections, product descriptions, follow-ups, chatbot scripts, or social captions. The reason it matters now is simple: AI has moved from a novelty to a mainstream workflow layer, with HubSpot reporting that 94% of marketers plan to use AI in content creation processes in 2026.

Can an ai copy writer replace a human copywriter?

Not in the way many people imagine. An ai copy writer can dramatically reduce blank-page time, speed up ideation, and generate more variants than most humans would produce manually in the same timeframe. But strategy, judgment, proof selection, and originality still matter, especially when Google continues to emphasize helpful, reliable, people-first content.

Is AI-written copy bad for SEO?

No, not automatically. Google has been very clear that the issue is not whether content was created with AI, but whether it is helpful and created for people rather than mainly to manipulate rankings, as explained in its guidance on AI-generated content in Search. The problem is not the tool. The problem is low-value output.

What types of copy benefit most from an ai copy writer?

The strongest use cases are usually structured, repeatable formats where speed and variation matter. That includes email sequences, paid ads, landing pages, nurture flows, retargeting messages, chatbot scripts, and social content repurposing. It tends to be less reliable as a first-pass replacement for highly original thought leadership, investigative content, or copy that depends heavily on first-hand expertise.

How do I get better output from an ai copy writer?

Better inputs usually beat better prompts. Give the system real customer language, real proof points, real offer details, and clear conversion goals. That is also why tools that strengthen the input layer, like Fillout, Copper, and Firecrawl, can make a bigger difference than endlessly tweaking prompt wording.

How much editing should AI-generated copy need?

Some editing is normal and healthy. In HubSpot’s 2025 reporting, only 7% of marketers said they use AI-generated text without editing, while 56% significantly revise or rewrite it and 38% make minor edits. That should not scare you. It should remind you that an ai copy writer works best as a drafting and acceleration tool, not as an unquestioned final author.

How should I measure whether an ai copy writer is working?

Track three things together: production speed, performance outcomes, and quality control. Faster drafts are nice, but they are not enough on their own. What matters is whether the ai copy writer helps you create stronger assets, test more meaningful variants, and maintain standards while scaling output.

What are the biggest risks of using an ai copy writer?

The biggest risks are usually boring ones: inaccurate claims, generic messaging, weak brand consistency, and under-reviewed content going live too fast. McKinsey’s 2025 research shows that inaccuracy is the AI-related risk organizations report most often. That is exactly why review discipline matters so much.

Should freelancers and small teams use an ai copy writer differently than larger companies?

Yes, mainly because the bottlenecks are different. Small teams often need AI to reduce production friction and ship faster without hiring immediately. Larger teams usually need AI to standardize workflows, manage approvals, and keep voice consistency across channels, regions, or client accounts.

What tools fit around an ai copy writer in a real workflow?

That depends on what you are building, but the pattern is consistent. You need a place to collect input, a place to generate and refine copy, and a place to deploy and measure it. In live workflows, that often means combining messaging and automation tools like ManyChat, Brevo, and GoHighLevel with page and funnel systems like Replo, ClickFunnels, or Systeme.io.

Can an ai copy writer help with personalization at scale?

Yes, and this is one of the strongest reasons the category keeps growing. AI makes it easier to adapt the same core offer for different audience segments, intent levels, channels, and campaign stages without rewriting everything manually. That becomes even more valuable as audience expectations are shaped by faster, more tailored digital experiences. HubSpot’s 2026 marketing statistics reflect the trend: 65% of marketers say consumers now expect more personalized experiences because of AI.

Is the market getting flooded with AI-generated content?

Yes, and that is exactly why quality matters more now, not less. HubSpot’s 2026 trend reporting found that 56% of marketers say the internet is now flooded with AI-generated content, and 65% say consumers are getting better at recognizing it. That means using an ai copy writer successfully is no longer about merely producing more. It is about producing more distinctive, more useful, and more trustworthy content than the average team does.

When should I avoid using an ai copy writer?

Avoid using it as the primary engine when the work depends on original reporting, highly sensitive claims, legal precision, executive positioning, or nuanced subject-matter expertise that is not well documented in source materials. In those cases, a human-first process is still the better starting point. AI can still help with structure or editing later, but it should not be asked to invent authority it does not have.

What is the smartest way to start if I am new to AI-assisted copy?

Start with one workflow, not ten. Pick something repeatable like welcome emails, landing page variants, outreach sequences, or ad creative angles. Build a simple process with a clear brief, solid inputs, structured generation, and human review, then improve it before expanding further.
