Benchmark email marketing the wrong way and you get fake comfort. Benchmark it the right way and you get a clear view of where your list, offer, creative, deliverability, and revenue tracking are actually leaking performance.

That distinction matters because email data is messy now. Open rates still help, but privacy changes mean they cannot carry the whole analysis. Clicks, conversions, unsubscribe rates, bounce rates, complaint rates, delivery rate, and revenue per recipient need to be read together, not as isolated dashboard numbers.

Recent industry data shows why this needs a disciplined approach. The DMA’s 2025 Email Benchmarking Report reported 98% delivery rates, 35.9% open rates, and 2.3% unique click rates across 2024 email activity. Meanwhile, Gmail’s sender rules now require stronger authentication and easier unsubscribes for high-volume senders, which makes deliverability a strategic benchmark rather than a technical afterthought: DMA email benchmarking data and Google sender requirements.

Article Outline

- Part 1: Why Benchmark Email Marketing Needs A Better Framework

- Part 2: The Core Email Marketing Benchmarks To Track

- Part 3: How To Compare Your Benchmarks By Industry, List Type, And Campaign Goal

- Part 4: Professional Implementation: Building A Benchmarking System

- Part 5: Turning Benchmark Data Into Better Campaigns

- Part 6: Common Benchmarking Mistakes, Tool Choices, And FAQ

Why Benchmark Email Marketing Needs A Better Framework

Most marketers do not suffer from a lack of email metrics. They suffer from too many numbers with no hierarchy. A campaign can show a strong open rate, a weak click rate, and decent revenue, and each number tells a different story.

That is why benchmark email marketing work should start with context before comparison. A newsletter, abandoned cart flow, product launch, webinar reminder, and reactivation campaign should not be judged by the same standard. The useful question is not “Is this number good?” but “Is this number good for this audience, this intent, this offer, and this stage of the customer journey?”

The biggest mistake is treating industry averages like targets. Benchmarks are reference points, not ceilings. Your real job is to compare against the right external range, your own historical baseline, and the commercial outcome the campaign was meant to create.

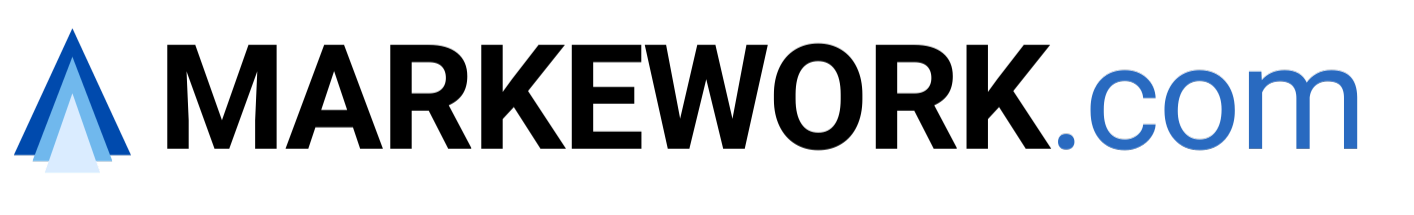

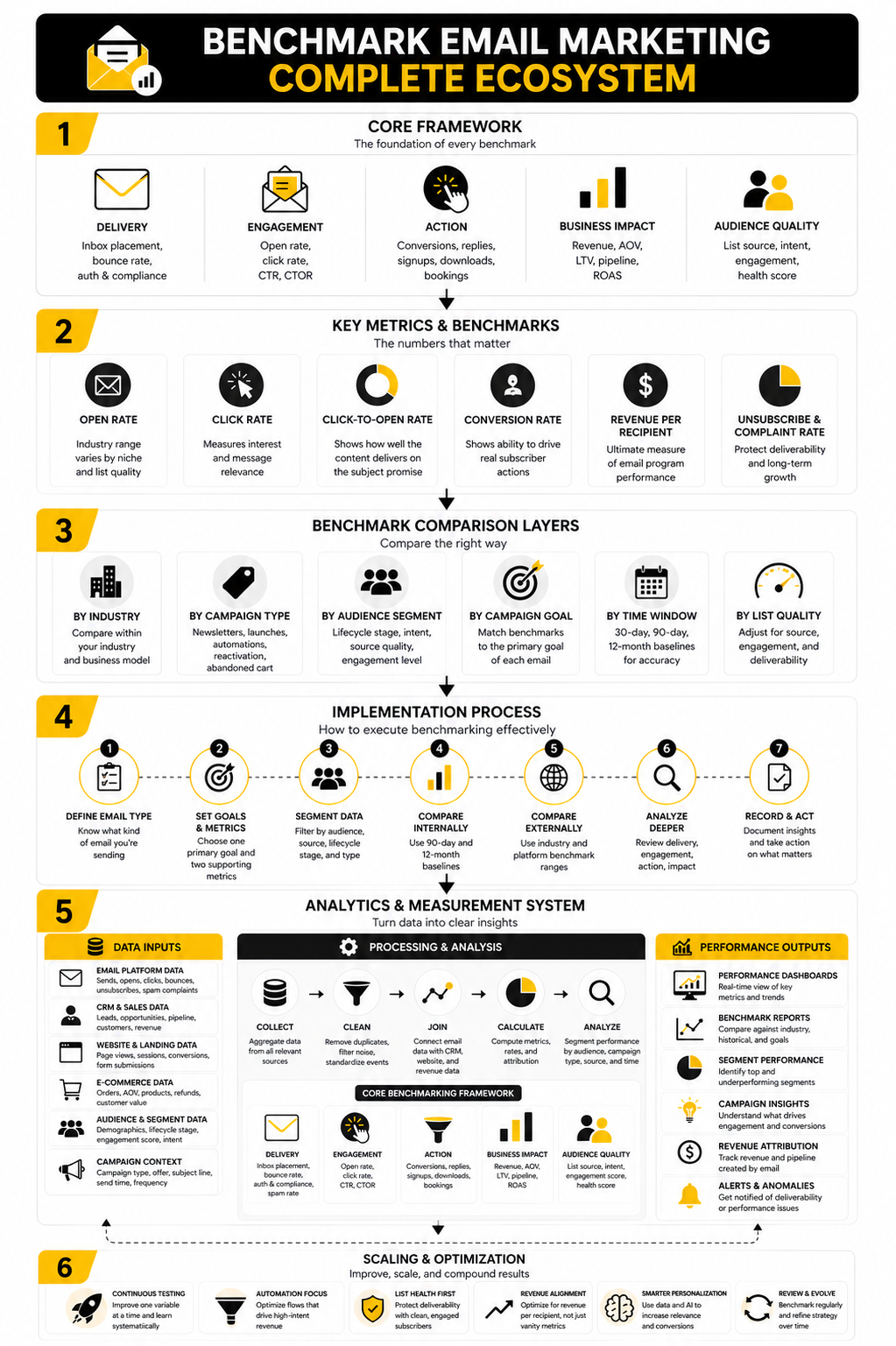

Framework Overview

A practical benchmark email marketing framework has four layers: delivery, engagement, action, and business impact. Delivery answers whether the email had a fair chance to be seen. Engagement shows whether the audience cared enough to open, read, and click, or simply ignored it.

Action benchmarks measure what happened after the click. That can include purchases, demo bookings, form fills, replies, upgrades, or trial starts. Business impact then connects the campaign to revenue, retention, margin, pipeline, or customer lifetime value.

This framework prevents shallow optimization. You do not rewrite subject lines when the real issue is list quality. You do not redesign the email when the real issue is a weak landing page. And you do not celebrate clicks when none of those clicks convert into meaningful business outcomes.

The Core Email Marketing Benchmarks To Track

Good benchmark email marketing starts with the metrics that show whether your emails can actually do their job. That means you should not begin with revenue, even though revenue is the outcome everyone wants. Start with delivery and list health, then move into engagement, conversion, and commercial performance.

The cleanest way to think about this is simple. Each benchmark answers one specific question. When a number moves, you should know what it is trying to tell you.

Delivery Rate

Delivery rate shows the percentage of sent emails that were accepted by receiving mail servers. The DMA’s 2025 benchmark data reported a 98% delivery rate across 2024 email activity, which makes this a useful high-level reference point for healthy permission-based programs: 2025 email benchmarking data. But delivery is not the same as inbox placement, and this is where many marketers get sloppy.

An email can be “delivered” and still land in spam, promotions, or another low-visibility area. That is why delivery rate should be paired with bounce rate, complaint rate, authentication status, and inbox placement data when available. If delivery is weak, do not obsess over copy yet. Fix the sender reputation, list hygiene, and technical setup first.

Bounce Rate

Bounce rate tells you how many emails could not be delivered. Hard bounces usually mean the address is invalid or no longer exists, while soft bounces are temporary issues like a full inbox or server problem. A high bounce rate is one of the fastest ways to damage sender reputation because it signals poor list quality.

For most professional programs, the target should be boring: low, stable, and monitored every campaign. If bounce rate jumps after a new lead source, partner campaign, giveaway, or imported list, that source needs to be reviewed immediately. Benchmark email marketing is not just about judging campaigns after they launch. It should also protect the list before bad data reaches your main sending infrastructure.
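As a minimal sketch of the two ratios above, the functions below compute bounce rate and delivery rate from raw counts. The function names and sample counts are hypothetical, and the sketch assumes every sent email is either accepted or bounced; your platform may count deferred or suppressed sends differently.

```python
def bounce_rate(sent: int, hard_bounces: int, soft_bounces: int) -> float:
    """Share of sent emails that bounced (hard plus soft)."""
    if sent <= 0:
        raise ValueError("sent must be a positive count")
    return (hard_bounces + soft_bounces) / sent


def delivery_rate(sent: int, hard_bounces: int, soft_bounces: int) -> float:
    """Share of sent emails accepted by receiving servers.

    Assumption: each sent email is either accepted or bounced.
    """
    return 1.0 - bounce_rate(sent, hard_bounces, soft_bounces)


# Hypothetical campaign: 10,000 sends, 120 hard bounces, 80 soft bounces.
campaign_bounce = bounce_rate(10_000, 120, 80)
campaign_delivery = delivery_rate(10_000, 120, 80)
print(f"bounce rate: {campaign_bounce:.2%}, delivery rate: {campaign_delivery:.2%}")
```

Keeping hard and soft bounces as separate inputs makes it easy to report them separately later, since a jump in hard bounces points at list quality while soft bounces are usually temporary.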

Spam Complaint Rate

Spam complaints are brutal because they come directly from recipients. Gmail’s sender guidance says bulk senders should keep spam rates below 0.10% and avoid reaching 0.30% or higher, while Yahoo also tells senders to keep complaint rates below 0.30%: Gmail sender guidelines and Yahoo sender best practices. This is one of the few benchmarks where “close enough” is not good enough.

A rising complaint rate usually means one of four things. The audience did not expect the email, the content did not match the signup promise, the frequency changed too aggressively, or the unsubscribe path was not clear enough. Treat complaints as a trust metric, not just a deliverability metric.
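The thresholds above can be turned into a simple status check. This is a sketch only: the function name and status labels are hypothetical, and dividing complaints by delivered emails is an assumption, because mailbox providers calculate their own spam rate from data you cannot fully see.

```python
GMAIL_TARGET = 0.0010   # 0.10%: the level Gmail says to stay below
GMAIL_CEILING = 0.0030  # 0.30%: the level Gmail says to avoid reaching


def complaint_status(complaints: int, delivered: int) -> str:
    """Classify a complaint rate against the two Gmail thresholds.

    Assumption: rate = complaints / delivered emails.
    """
    if delivered <= 0:
        raise ValueError("delivered must be a positive count")
    rate = complaints / delivered
    if rate >= GMAIL_CEILING:
        return "critical"
    if rate >= GMAIL_TARGET:
        return "warning"
    return "ok"


# Hypothetical sends of 10,000 delivered emails each.
for complaints in (5, 15, 40):
    print(complaints, complaint_status(complaints, 10_000))
```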

Unsubscribe Rate

Unsubscribe rate shows how many people actively chose to leave your list after receiving a message. A small number of unsubscribes is normal, especially when you send more frequently, clean inactive subscribers, or make stronger offers. Zero unsubscribes is not automatically a win because it can also mean people are ignoring you or marking you as spam instead.

This benchmark becomes useful when you segment it by campaign type. A hard sales email will usually behave differently from a newsletter, onboarding email, or transactional update. The goal is not to trap people on the list. The goal is to keep the right people engaged and make leaving easy for everyone else.

Open Rate

Open rate still has value, but it should not be treated like the scoreboard. Apple’s Mail Privacy Protection and similar privacy changes have made opens less precise, so open rate is better used as a directional signal than a hard measure of audience intent. The DMA’s 2025 report showed open rates reaching 35.9%, but that number should be read in the context of list type, sector, and measurement changes: DMA email benchmark report.

Use open rate to compare similar emails sent to similar audiences under similar conditions. It can help you evaluate sender name, subject line, timing, and audience familiarity. But never let open rate override click, conversion, or revenue data. A clever subject line that earns opens without action is not a business win.

Click Rate

Click rate is usually a stronger engagement benchmark than open rate because it reflects intentional action. The DMA reported 2.3% unique click rates for 2024 email activity, giving marketers a useful broad reference point: unique click benchmark data. Still, a “good” click rate depends heavily on the purpose of the email.

A newsletter with multiple articles, a flash sale, and a product education email are not playing the same game. For benchmark email marketing, click rate should be tied to the email’s primary job. If the email has one clear call to action, measure whether people took it. If the email has several links, separate curiosity clicks from revenue-driving clicks.

Click-To-Open Rate

Click-to-open rate compares clicks to opens, which makes it useful for judging whether the content delivered on the promise of the subject line. If open rate is strong but click-to-open rate is weak, the email may be creating interest without enough motivation to act. That often points to a mismatch between subject line, offer, body copy, layout, or call to action.

This metric is especially useful when you test creative angles. You can see whether a curiosity-driven subject line attracts the wrong attention or whether a direct benefit-led subject line brings in fewer opens but better clicks. That is the kind of nuance basic benchmark tables miss.
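To make the subject line comparison concrete, here is a small sketch that computes open rate, click rate, and click-to-open rate for two variants. The `Variant` class and all counts are hypothetical, and delivered emails are assumed as the denominator for open and click rate.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    name: str
    delivered: int
    unique_opens: int
    unique_clicks: int

    @property
    def open_rate(self) -> float:
        return self.unique_opens / self.delivered

    @property
    def click_rate(self) -> float:
        return self.unique_clicks / self.delivered

    @property
    def click_to_open_rate(self) -> float:
        # Clicks divided by opens; zero when nobody opened.
        return self.unique_clicks / self.unique_opens if self.unique_opens else 0.0


# Hypothetical test: curiosity wins the open, the direct line wins the click.
curiosity = Variant("curiosity subject line", 5_000, 2_000, 60)
direct = Variant("benefit-led subject line", 5_000, 1_500, 90)

for v in (curiosity, direct):
    print(f"{v.name}: open {v.open_rate:.1%}, click {v.click_rate:.1%}, "
          f"CTOR {v.click_to_open_rate:.1%}")
```

In this made-up case the curiosity variant has the higher open rate, but the direct variant has both the higher click rate and double the click-to-open rate.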

Conversion Rate

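Conversion rate measures the percentage of recipients or clickers who completed the intended action. Pick one denominator and keep it fixed, because a conversion rate per recipient and a conversion rate per click answer different questions and cannot be compared directly. The action could be a purchase, booking, form submission, reply, upgrade, trial activation, or another meaningful step. It is where email performance starts connecting to the business instead of staying inside the email platform.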

The important move is to define the conversion before the campaign goes out. Otherwise, you end up moving the goalposts after seeing the numbers. A campaign built to drive demo bookings should not be judged mainly by product page visits. A campaign built to recover abandoned carts should not be judged mainly by opens.

Revenue Per Recipient

Revenue per recipient is one of the most practical benchmarks for ecommerce and offer-driven email programs. It shows how much revenue each email generated on average, measured against either sent or delivered volume as long as you use the same base every time, which makes it easier to compare campaigns with different list sizes. Klaviyo’s benchmark reporting uses revenue per recipient alongside open rate, click rate, order rate, and conversion metrics because ecommerce teams need to see both engagement and money: Klaviyo benchmark methodology.

This benchmark is powerful because it prevents vanity reporting. A smaller segment with high purchase intent can outperform a massive list blast with weak relevance. That is the point. Benchmark email marketing should help you send better, not just bigger.
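A quick sketch shows how revenue per recipient puts a small segment and a large blast on the same scale. The function name and figures are hypothetical, and delivered emails are assumed as the recipient count.

```python
def revenue_per_recipient(revenue: float, recipients: int) -> float:
    """Average revenue per recipient (assumption: recipients = delivered emails)."""
    if recipients <= 0:
        raise ValueError("recipients must be a positive count")
    return revenue / recipients


# Hypothetical sends: a small high-intent segment versus a full-list blast.
vip_segment = revenue_per_recipient(3_000.0, 1_500)
full_list = revenue_per_recipient(16_000.0, 40_000)

print(f"VIP segment: {vip_segment:.2f} per recipient")
print(f"Full list:   {full_list:.2f} per recipient")
```

The blast earns more in total, but each email to the small segment is worth several times more, which is the comparison total revenue alone hides.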

How To Compare Your Benchmarks By Industry, List Type, And Campaign Goal

Once the core metrics are clear, the next step is comparison. This is where benchmark email marketing can either become useful or completely misleading. A single “average email benchmark” is too broad to guide real decisions because different industries, audiences, and campaign types behave differently.

Retail, SaaS, coaching, local services, media, B2B consulting, and ecommerce all have different buying cycles. A B2B lead nurture sequence may need weeks before it creates pipeline, while an abandoned cart email can drive revenue within hours. If those two campaigns are judged by the same click rate or conversion rate, the analysis will be shallow from the start.

Start With Your Industry Range

Industry benchmarks give you the first layer of context. MailerLite’s 2026 benchmark analysis reported a 43.46% average open rate, 2.09% click rate, 6.81% click-to-open rate, and 0.22% unsubscribe rate across 2025 activity, while the DMA reported 35.9% opens and 2.3% unique clicks across 2024 activity: MailerLite benchmark data and DMA benchmark data. Those numbers are useful, but only as a starting point.

The smarter move is to compare against companies that sell in a similar way. Ecommerce brands should pay close attention to revenue per recipient, order rate, and automation performance. B2B teams should care more about reply rate, booked meetings, qualified pipeline, and progression through the buying journey.

Separate Campaigns From Automations

Campaign emails and automated flows should never sit in the same benchmark bucket. A one-time newsletter goes to a broad audience at a chosen moment. An automation is triggered by behavior, timing, or lifecycle stage, which usually means the intent level is more specific.

This difference changes the numbers. A welcome flow, abandoned cart sequence, post-purchase email, renewal reminder, or reactivation flow has a different job from a weekly broadcast. Klaviyo’s benchmark approach separates campaign and flow performance because ecommerce teams need to see how automations perform against their own type of revenue behavior: Klaviyo email benchmark methodology.

Match The Benchmark To The Email’s Job

Every email should have one primary benchmark that matches its purpose. If the goal is education, the benchmark may be click depth, replies, or content consumption. If the goal is sales, the benchmark should move closer to conversion rate, order rate, revenue per recipient, or pipeline created.

This sounds obvious, but it is where many reports fall apart. Teams often judge every email by opens because opens are easy to see. That creates lazy optimization. A subject line can win the open and still lose the business.

Build A Clean Benchmarking Process

A strong benchmark email marketing process does not need to be complicated. It needs to be consistent. The goal is to create a repeatable system that lets you compare like with like and spot the real reason performance changed.

- Define the email type before launch.

- Assign one primary goal and two supporting metrics.

- Segment results by audience, source, lifecycle stage, and campaign type.

- Compare the campaign against your own 90-day and 12-month baseline.

- Compare the same campaign type against external benchmark ranges.

- Review delivery, engagement, action, and business impact in that order.

- Record the decision, not just the result.

The last step matters more than most people think. Benchmarking is not reporting. Reporting says what happened. Benchmarking should tell you what to change, what to protect, and what to test next.

Use Internal Benchmarks As Your Main Standard

External benchmarks are helpful, but your own data should become the main standard over time. A mature list with strong brand trust may outperform industry averages. A newer list built from paid traffic may need a different expectation while it warms up and proves intent.

Create internal baselines by campaign type, not just by month. Track newsletters against newsletters, launches against launches, and cart recovery emails against cart recovery emails. This keeps the analysis honest and prevents one strong campaign from hiding weak performance elsewhere.
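One way to build baselines by campaign type is to group past results and take a typical value per group. The sketch below uses the median, which is an assumption on my part, chosen because a single unusually strong campaign moves it less than it moves a mean. The campaign types and click rates are hypothetical.

```python
from collections import defaultdict
from statistics import median

# Hypothetical history: (campaign type, click rate) for past sends.
history = [
    ("newsletter", 0.021), ("newsletter", 0.025), ("newsletter", 0.019),
    ("launch", 0.041), ("launch", 0.035),
    ("cart_recovery", 0.082), ("cart_recovery", 0.090), ("cart_recovery", 0.074),
]


def baselines_by_type(rows):
    """Return the median click rate for each campaign type."""
    grouped = defaultdict(list)
    for campaign_type, click_rate in rows:
        grouped[campaign_type].append(click_rate)
    return {ctype: median(rates) for ctype, rates in grouped.items()}


baselines = baselines_by_type(history)
for ctype, rate in baselines.items():
    print(f"{ctype}: {rate:.2%}")
```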

Adjust For Audience Quality

Audience quality can make two identical emails perform very differently. A buyer segment, webinar attendee list, cold lead magnet list, inactive subscriber pool, and VIP customer group should not be compared as if they are equal. The same copy can look brilliant in one segment and broken in another.

This is why source-level tracking matters. If a giveaway list generates high unsubscribes, low clicks, and weak conversions, the problem may not be the email. The problem may be the acquisition channel. Good benchmark email marketing connects list performance back to how the subscriber entered the database.

Adjust For Deliverability And Compliance

Before judging creative, check whether the email had a fair chance to work. Gmail requires senders to authenticate mail, support easy unsubscribe, and keep spam rates low, with bulk sender rules applying to senders of roughly 5,000 or more messages per day to personal Gmail accounts: Gmail sender requirements and Gmail bulk sender FAQ. If authentication, complaint rate, or list quality is weak, campaign-level benchmarks become contaminated.

This is especially important for larger senders and agencies managing multiple client accounts. A drop in clicks may look like a copy issue when the real problem is inbox placement. Fix the foundation before redecorating the house.

Keep The Comparison Window Tight

Benchmark windows should be long enough to smooth out random swings but short enough to reflect current reality. A 90-day baseline is useful for recent performance, while a 12-month view helps account for seasonality, launches, holidays, and buying cycles. Looking only at last week creates noise. Looking only at last year can hide a recent decline.

The practical approach is to use both. Compare the campaign against the last 90 days for momentum and against the same period last year for context. That gives you a cleaner read on whether performance is improving, fading, or simply behaving normally for the season.
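The two windows can be computed from a dated history of results. This is a sketch under stated assumptions: the function names and the click-rate history are hypothetical, "same period last year" is read as the 90 days leading up to the same date one year (365 days) earlier, and a plain mean is used for each window.

```python
from datetime import date, timedelta
from statistics import mean


def window_mean(history, start, end):
    """Mean of values dated from start to end, inclusive; None if the window is empty."""
    values = [value for day, value in history if start <= day <= end]
    return mean(values) if values else None


def momentum_and_seasonal_baselines(history, as_of, window_days=90):
    """Return (trailing-window mean, same-window-last-year mean)."""
    recent = window_mean(
        history, as_of - timedelta(days=window_days), as_of - timedelta(days=1)
    )
    year_ago = as_of - timedelta(days=365)
    seasonal = window_mean(
        history, year_ago - timedelta(days=window_days), year_ago
    )
    return recent, seasonal


# Hypothetical click rates for one campaign type.
click_history = [
    (date(2024, 4, 10), 0.020),
    (date(2024, 5, 20), 0.024),
    (date(2025, 1, 5), 0.010),
    (date(2025, 4, 15), 0.030),
    (date(2025, 5, 20), 0.034),
]
recent, seasonal = momentum_and_seasonal_baselines(click_history, as_of=date(2025, 6, 1))
print(f"last 90 days: {recent:.2%}, same period last year: {seasonal:.2%}")
```

The January send falls outside both windows, so it affects neither baseline, which is the point of keeping the comparison window tight.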

Statistics And Data

Data only helps when it changes what you do next. That is the rule. Benchmark email marketing should not become a dashboard full of numbers that everyone reviews and nobody acts on.

The useful numbers explain where performance is strong, where it is weak, and which part of the email system needs attention. Delivery metrics protect access to the inbox. Engagement metrics show whether the message earned attention. Conversion and revenue metrics show whether the campaign created business value.

Read Benchmarks As Signals, Not Scores

A benchmark is not a grade. It is a signal that tells you where to look. If your open rate is below the market range but click rate is healthy, you may have a subject line, sender name, timing, or inbox visibility issue.

If open rate is strong but click rate is weak, the problem is usually inside the email. The message may be too vague, the offer may not be compelling, or the call to action may not be obvious enough. If clicks are strong but conversions are weak, stop blaming the email first. Look at the landing page, checkout flow, offer clarity, pricing, load speed, and trust signals.
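The reading rules above can be written as a small decision function. This is a rough sketch, not a complete diagnostic: the function names, layer labels, and the 10% tolerance below baseline are all hypothetical choices, and a real review would check delivery metrics first.

```python
def is_healthy(value: float, baseline: float, tolerance: float = 0.10) -> bool:
    """Treat a metric as healthy if it is within `tolerance` of its baseline."""
    return value >= baseline * (1 - tolerance)


def diagnose(metrics: dict, baseline: dict) -> str:
    """Point at the layer to inspect first, following the rules in the text."""
    ok = {
        name: is_healthy(metrics[name], baseline[name])
        for name in ("open_rate", "click_rate", "conversion_rate")
    }
    if not ok["open_rate"] and ok["click_rate"]:
        return "pre_open"    # subject line, sender name, timing, inbox visibility
    if ok["open_rate"] and not ok["click_rate"]:
        return "in_email"    # message clarity, offer, call to action
    if ok["click_rate"] and not ok["conversion_rate"]:
        return "post_click"  # landing page, checkout, pricing, load speed, trust
    return "no_single_layer"


# Hypothetical baseline and campaign result.
baseline = {"open_rate": 0.35, "click_rate": 0.023, "conversion_rate": 0.02}
campaign = {"open_rate": 0.36, "click_rate": 0.030, "conversion_rate": 0.005}
print(diagnose(campaign, baseline))
```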

Use Current Market Ranges Carefully

Recent benchmark reports show why context matters. MailerLite’s 2026 benchmark analysis reported a 43.46% average open rate, 2.09% click rate, 6.81% click-to-open rate, and 0.22% unsubscribe rate across 2025 campaigns: MailerLite benchmark report. Brevo’s 2025 benchmark analysis, based on more than 44 billion emails, showed an overall 21% open rate and 3.96% click-through rate across its dataset: Brevo email marketing benchmarks.

Both sets of numbers are useful, and they are clearly different. That does not mean one is wrong. It means each platform has a different customer base, measurement method, industry mix, geography, and sending behavior. Use external benchmarks to build a reasonable range, then let your own list history become the standard that matters most.
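As an illustration of building a range rather than chasing one number, the sketch below takes the open and click figures quoted in this article and checks where a campaign falls. The function names and the sample campaign values are hypothetical. The reports do not measure clicks the same way (unique clicks versus click-through rate), so treat the resulting range as loose context only.

```python
# Figures as quoted in this article, expressed as fractions.
REPORTED_OPEN_RATES = {"DMA": 0.359, "MailerLite": 0.4346, "Brevo": 0.21}
REPORTED_CLICK_RATES = {"DMA": 0.023, "MailerLite": 0.0209, "Brevo": 0.0396}


def external_range(reported: dict) -> tuple:
    """Lowest and highest reported value across sources."""
    return min(reported.values()), max(reported.values())


def position(value: float, low: float, high: float) -> str:
    """Say where a value sits relative to the external range."""
    if value < low:
        return "below range"
    if value > high:
        return "above range"
    return "within range"


# Hypothetical campaign: 30% open rate, 1.5% click rate.
open_low, open_high = external_range(REPORTED_OPEN_RATES)
click_low, click_high = external_range(REPORTED_CLICK_RATES)
print("open rate:", position(0.30, open_low, open_high))
print("click rate:", position(0.015, click_low, click_high))
```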

Watch Deliverability Before Engagement

Deliverability is the first measurement layer because weak deliverability distorts every other metric. Gmail tells bulk senders to keep spam rates below 0.10% and avoid reaching 0.30% or higher, while Yahoo’s sender guidance also sets 0.30% as the complaint-rate ceiling: Gmail sender requirements and Yahoo sender best practices. Once complaints rise, the campaign is no longer just underperforming. It is training inbox providers to trust you less.

This is why complaint rate, bounce rate, authentication, and unsubscribe friction should sit above creative testing in your analytics review. If the list is dirty or the audience did not clearly opt in, better copy will not save the system. Fix the source of the problem before you optimize the message.

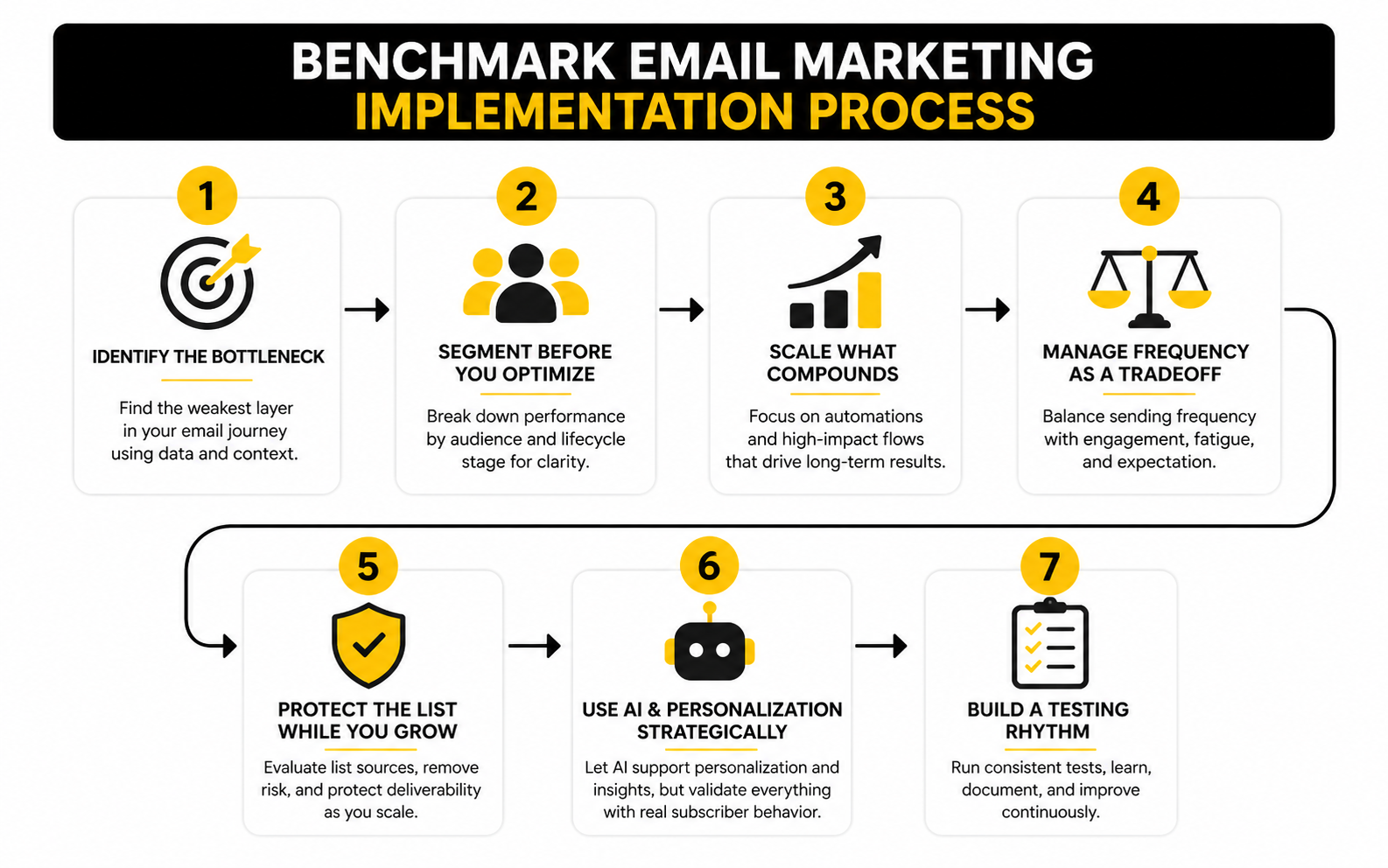

Build A Measurement System That Explains Cause

A clean analytics system should show the email journey from send to sale. You want to see how many emails were sent, delivered, opened, clicked, converted, unsubscribed, bounced, and complained. Then you want to connect those events to list source, segment, campaign type, offer, and revenue.

The most practical setup is simple:

- Track delivery, bounce rate, complaint rate, and unsubscribe rate for list health.

- Track open rate, click rate, and click-to-open rate for message engagement.

- Track conversion rate, order rate, booked calls, replies, or form completions for action.

- Track revenue per recipient, revenue per click, customer value, or pipeline for business impact.

- Review the numbers by segment, not just as one blended average.

This structure keeps the team honest. If a campaign wins attention but loses money, you know where to investigate. If an automation drives revenue from a tiny share of total sends, you know it deserves more attention, not less.
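The last item in that list, reviewing by segment rather than as one blended average, is easy to demonstrate. In this sketch the segment names, counts, and function names are hypothetical, and delivered emails are assumed as the denominator.

```python
# Hypothetical per-segment counts for one campaign.
segments = {
    "buyers": {"delivered": 2_000, "clicks": 120, "revenue": 3_000.0},
    "cold_leads": {"delivered": 8_000, "clicks": 80, "revenue": 400.0},
}


def summarize(counts: dict) -> dict:
    """Click rate and revenue per recipient for one set of counts."""
    return {
        "click_rate": counts["clicks"] / counts["delivered"],
        "revenue_per_recipient": counts["revenue"] / counts["delivered"],
    }


def blend(rows) -> dict:
    """Sum the raw counts across segments, then compute the same metrics."""
    totals = {
        key: sum(row[key] for row in rows)
        for key in ("delivered", "clicks", "revenue")
    }
    return summarize(totals)


by_segment = {name: summarize(counts) for name, counts in segments.items()}
blended = blend(list(segments.values()))

for name, stats in {**by_segment, "blended": blended}.items():
    print(f"{name}: click {stats['click_rate']:.1%}, "
          f"RPR {stats['revenue_per_recipient']:.2f}")
```

The blended click rate lands between the two segments and describes neither of them, which is why the segment view should drive the decision.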

Give Automations Their Own Data View

Automations often behave differently from scheduled campaigns because they respond to intent. Omnisend’s 2025 ecommerce report found that automated emails generated 37% of email sales from just 2% of email volume, and that abandoned cart, welcome, and browse abandonment messages drove most automated orders: Omnisend ecommerce marketing report. That is exactly why automation benchmarks should not be buried inside general campaign averages.

A welcome email, cart recovery email, renewal reminder, and win-back sequence should each have its own baseline. These emails are closer to customer behavior, so they often reveal the biggest profit leaks. If one of them drops, the issue may be timing, segmentation, offer strength, product availability, or customer intent changing.

Turn The Numbers Into Decisions

The final step is translating data into action. A benchmark report should end with a decision, not just a summary. If the problem is delivery, clean the list, check authentication, reduce risky sources, and monitor complaints. If the problem is engagement, improve segmentation, subject line relevance, email structure, and offer framing.

If the problem is conversion, move outside the email platform. Audit the landing page, sales page, checkout, booking form, or follow-up process. Tools like GoHighLevel, ClickFunnels, Systeme.io, and Brevo can help when you need email, funnels, CRM, automations, or campaign tracking in one operational workflow. But the tool is not the strategy. The strategy is knowing which signal matters and what you will change because of it.

Turning Benchmark Data Into Better Campaigns

At this stage, benchmark email marketing stops being a reporting exercise and becomes a growth system. The point is not to admire your dashboard. The point is to decide what gets tested, what gets fixed, what gets scaled, and what gets cut.

This is where advanced teams separate themselves. They do not chase every metric at once. They identify the constraint in the email system, improve that constraint, and then move to the next one.

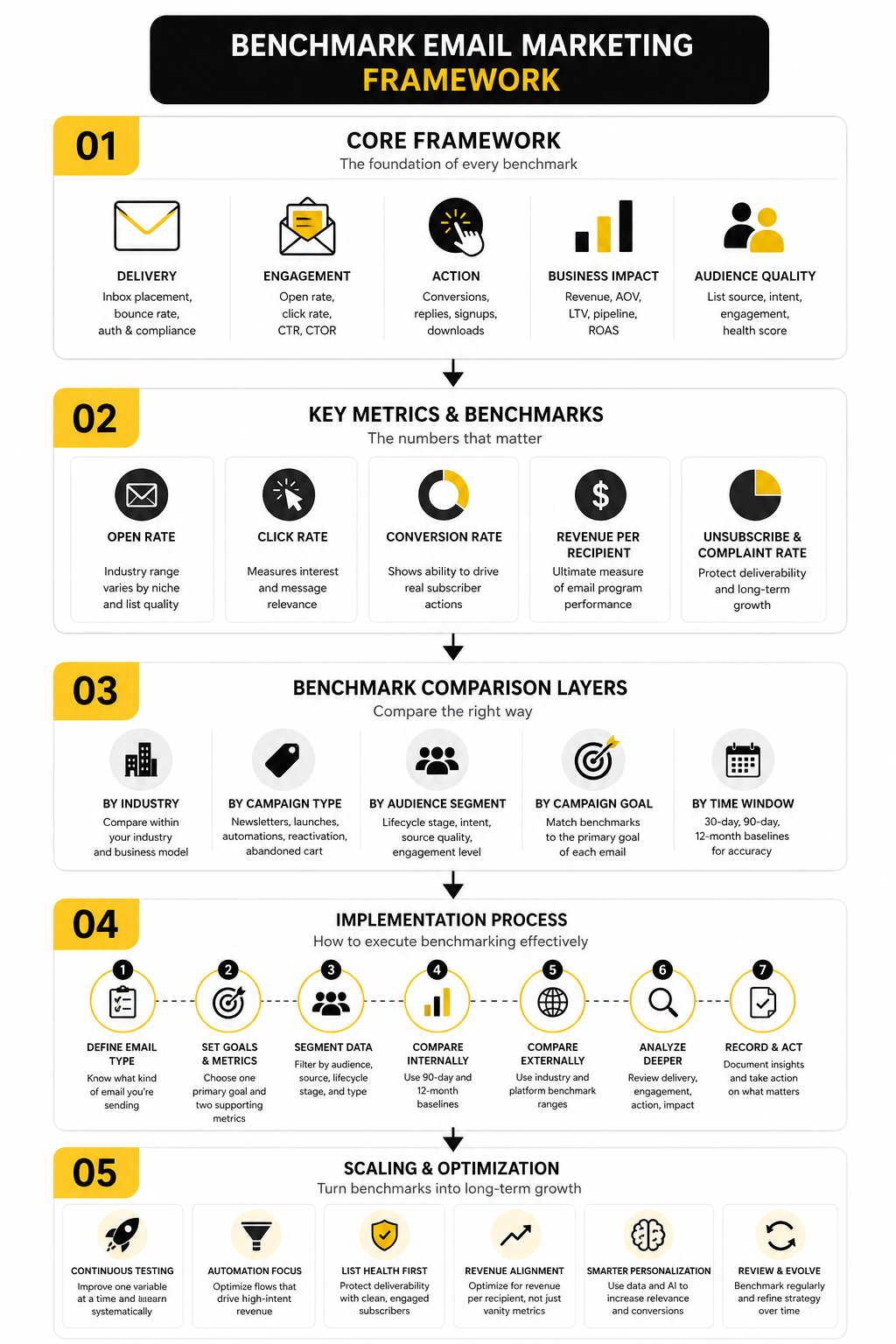

Prioritize The Bottleneck

Every campaign has a bottleneck. Sometimes the bottleneck is reach because emails are not landing well. Sometimes it is attention because the subject line or sender relationship is weak. Sometimes it is action because the email creates interest but not enough urgency or clarity.

The mistake is improving the wrong layer. If spam complaints are climbing, do not start by testing button copy. If clicks are strong but purchases are weak, do not assume the subject line failed. Fix the part of the journey where the data shows friction.

Segment Before You Optimize

Segmentation changes what “good” looks like. A buyer list, warm lead list, inactive subscriber group, cold lead magnet audience, and abandoned cart segment should not be judged by one blended benchmark. Blended averages hide the truth.

A strong benchmark email marketing system breaks performance down by audience quality, lifecycle stage, acquisition source, and purchase intent. This makes your next move obvious. You may find that one segment needs a better offer, another needs more education, and another should be suppressed because it is hurting deliverability.

Scale What Compounds

Not every email improvement deserves the same attention. A one-time campaign lift is useful, but an automation lift can compound every day. That is why automated flows deserve serious focus, especially when they are tied to clear intent.

Omnisend’s 2025 ecommerce report found that automated emails generated 37% of email sales from just 2% of email volume, which shows why welcome, cart recovery, browse abandonment, and post-purchase flows deserve their own optimization roadmap: Omnisend ecommerce marketing report. If an automation touches high-intent subscribers repeatedly, even a small improvement can create meaningful long-term revenue.

Treat Frequency As A Strategic Tradeoff

Sending more often can increase revenue, but it can also increase fatigue. Sending less often can protect the list, but it can also reduce momentum and leave money on the table. There is no universal answer because frequency depends on expectation, offer strength, audience relationship, and message quality.

The practical move is to benchmark frequency by segment. Buyers may tolerate more product updates than cold subscribers. Engaged readers may welcome a weekly newsletter, while inactive contacts may need a slower reactivation path. Watch revenue, clicks, unsubscribes, and complaints together because frequency decisions always involve tradeoffs.

Protect The List While You Grow

Growth creates pressure. More paid traffic, more lead magnets, more giveaways, more partners, and more imported contacts can make the list look bigger while making the email program weaker. Bigger is not better if the new audience does not engage, convert, or trust you.

This is where list source benchmarking matters. Track each acquisition source by bounce rate, complaint rate, unsubscribe rate, click rate, conversion rate, and revenue quality. If a source brings cheap leads but damages deliverability, it is not cheap. It is expensive in a way your ad dashboard may not show.
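A source-level review can be sketched as a table of counts per acquisition source with a few flags. The source names, counts, and the 2% bounce limit are hypothetical; only the 0.30% complaint ceiling comes from the Gmail and Yahoo guidance cited earlier, and the complaint denominator (delivered emails) is an assumption.

```python
COMPLAINT_CEILING = 0.0030  # 0.30%, from the Gmail and Yahoo guidance cited earlier
BOUNCE_LIMIT = 0.02         # hypothetical internal limit, not an industry rule

# Hypothetical counts per acquisition source.
sources = {
    "organic_signup": {"sent": 10_000, "bounced": 50, "complaints": 4, "revenue": 6_000.0},
    "giveaway": {"sent": 10_000, "bounced": 450, "complaints": 35, "revenue": 300.0},
}


def review_source(counts: dict) -> dict:
    """Compute list-health and revenue metrics for one source and flag risks."""
    delivered = counts["sent"] - counts["bounced"]
    bounce = counts["bounced"] / counts["sent"]
    complaint = counts["complaints"] / delivered
    flags = []
    if bounce > BOUNCE_LIMIT:
        flags.append("bounce rate above internal limit")
    if complaint >= COMPLAINT_CEILING:
        flags.append("complaint rate at or above ceiling")
    return {
        "bounce_rate": bounce,
        "complaint_rate": complaint,
        "revenue_per_send": counts["revenue"] / counts["sent"],
        "flags": flags,
    }


reviews = {name: review_source(counts) for name, counts in sources.items()}
for name, review in reviews.items():
    print(name, review["flags"] or "no flags")
```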

Be Careful With AI And Personalization

AI can help with subject line variations, segmentation ideas, content drafts, customer journey analysis, and reporting summaries. But it can also create generic messaging at scale, and generic messaging at scale is still generic. The benchmark that matters is not whether AI helped you send faster. It is whether the campaign became more relevant and more profitable.

Use AI to support judgment, not replace it. Let it help find patterns, draft testing angles, and summarize performance shifts. Then validate the output against real subscriber behavior. Tools such as Brevo, GoHighLevel, and ManyChat can support automation and personalization, but the strategic filter still has to come from you.

Know When A Benchmark Should Be Ignored

Sometimes a campaign should underperform a normal benchmark. A list-cleaning campaign may produce weaker clicks but improve long-term deliverability. A price increase announcement may trigger more unsubscribes but protect customer quality. A reactivation campaign may look ugly on engagement but still identify a profitable group worth saving.

This is why intent matters so much. Benchmarks should serve the campaign goal, not bully every campaign into looking average. If the strategic purpose is clear and the risk is controlled, a temporary dip in one metric can be acceptable.

Build A Testing Rhythm

A testing rhythm keeps benchmark email marketing from becoming random. Test one meaningful variable at a time when possible, and prioritize changes tied to the bottleneck. Subject lines, sender names, offer framing, segmentation, send time, email length, landing page alignment, and automation timing can all matter, but they do not matter equally in every situation.

A practical testing rhythm looks like this:

- Identify the weakest layer in the journey.

- Pick one test that directly addresses that weakness.

- Define the success metric before launch.

- Run the test on a meaningful audience size.

- Keep the winner only if it improves the metric that matters.

- Document the learning so the next campaign starts smarter.

This is how benchmarks become institutional knowledge. You stop relearning the same lesson every quarter. Your email program gets sharper because every test leaves behind a decision, not just a chart.
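One common way to judge whether a test ran on a meaningful audience size is a two-proportion z-test. The article does not prescribe a specific statistical method, so treat this as one option among several; the function name and the counts are hypothetical.

```python
from math import erf, sqrt


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference between two rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Standard normal CDF via the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)


# Same 2% versus 3% rates, tested on a small and a large audience.
z_small, p_small = two_proportion_z_test(2, 100, 3, 100)
z_large, p_large = two_proportion_z_test(100, 5_000, 150, 5_000)

print(f"small audience: z={z_small:.2f}, p={p_small:.3f}")
print(f"large audience: z={z_large:.2f}, p={p_large:.4f}")
```

Both tests show the same rates, but only the larger audience gives a difference that is unlikely to be random noise, which is why the audience size belongs in the test plan before launch.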

Common Benchmarking Mistakes

The most expensive benchmark email marketing mistakes are not usually technical. They are interpretation mistakes. The team sees a number, reacts too fast, and changes the wrong part of the system.

A weak campaign does not always mean weak copy. A strong open rate does not always mean strong demand. A high unsubscribe rate does not always mean the email failed, especially if the campaign intentionally filtered unqualified subscribers out of the list.

Treating Opens Like The Main Truth

Open rate is still useful, but it is not the main truth anymore. Privacy changes and inbox behavior have made opens less reliable as a direct measure of human attention. They can help you spot directional changes, but they should not control the whole strategy.

A better approach is to use open rate as an early signal, then validate it with clicks, replies, conversions, revenue, complaints, and unsubscribes. If opens rise but clicks and revenue do not, the campaign did not really improve. It only became better at earning curiosity.

Comparing Every Campaign To The Same Average

A launch email, newsletter, abandoned cart email, webinar reminder, reactivation campaign, and customer onboarding message are different machines. They should not be compared to the same average. When you blend them together, you lose the ability to diagnose what is really happening.

Benchmark email marketing works best when each campaign type has its own expectation. That means one baseline for newsletters, another for sales emails, another for automations, and another for lifecycle messages. This makes the data more honest and the decisions much easier.

Ignoring The Quality Of The List

Bad lists create fake problems. They make subject lines look weak, offers look uninteresting, and campaigns look broken. In reality, the audience may simply be low intent, outdated, poorly sourced, or no longer aligned with the offer.

This is why acquisition source should always be part of the benchmark review. A lead from a serious buyer-intent page is not the same as a lead from a casual giveaway. If the list source is weak, the campaign is forced to fight an uphill battle before anyone reads a word.

Optimizing Before The System Is Stable

Do not over-optimize a messy system. If tracking is inconsistent, segments are unclear, attribution is broken, or deliverability is unstable, your tests will create noise instead of learning. This is where marketers waste months.

Stabilize the basics first. Confirm that events are tracked correctly, campaign types are labeled consistently, and conversions are tied to the right source. Once the system is clean, optimization becomes far more reliable.

Tool Choices For A Better Email Benchmarking System

The right tool depends on the kind of business you are running. A solo creator, ecommerce brand, SaaS company, agency, coach, local service business, and B2B sales team all need different levels of automation, CRM depth, segmentation, and reporting. Do not buy complexity just to feel sophisticated.

If you need email and automation in a practical marketing stack, Brevo and Moosend are natural options to evaluate. If your benchmark email marketing system needs CRM, pipelines, funnels, and follow-up automation in one place, GoHighLevel fits that kind of workflow better.

If the main gap is the post-click experience, email software alone will not fix it. A campaign can drive strong clicks and still lose money on a weak page. In that case, ClickFunnels, Systeme.io, or Replo may be more relevant because the benchmark problem is happening after the click.

FAQ

What Is Benchmark Email Marketing?

Benchmark email marketing is the process of comparing your email performance against useful reference points. Those reference points can include industry averages, platform benchmarks, your own historical performance, and campaign-specific goals. The goal is not to copy the average, but to understand whether your email system is improving or leaking performance.

What Is A Good Email Open Rate?

A good open rate depends on your industry, audience, list quality, and campaign type. Recent benchmark sources show wide ranges, with the DMA reporting 35.9% opens across 2024 email activity and MailerLite reporting a 43.46% average open rate across 2025 campaigns: DMA benchmark data and MailerLite benchmark data. Use those numbers as context, not as a universal target.

What Is A Good Email Click Rate?

A good click rate is one that reflects real interest in the offer or next step. The DMA reported 2.3% unique click rates, while MailerLite reported a 2.09% average click rate, which gives a practical external range for broad comparison: DMA email benchmarks and MailerLite email benchmarks. Your internal benchmark matters more once you have enough campaign history.

Which Email Metric Matters Most?

The most important metric depends on the campaign goal. For deliverability, complaint rate and bounce rate matter most. For engagement, click rate is usually more useful than open rate. For sales, revenue per recipient, conversion rate, order rate, or pipeline created should carry more weight.
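One way to keep that hierarchy visible is to calculate the metrics in groups that match the goal. The sketch below is only an illustration: the `SendStats` fields, the `goal_metrics` helper, and the choice of denominators (bounces over sent, everything else over delivered, conversions over unique clicks) are assumptions, and your platform may define these rates differently.

```python
from dataclasses import dataclass


@dataclass
class SendStats:
    """Raw counts for one send. Field names are illustrative assumptions."""
    sent: int
    delivered: int
    unique_clicks: int
    complaints: int
    bounces: int
    orders: int
    revenue: float


def rate(numerator: float, denominator: float) -> float:
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def goal_metrics(stats: SendStats) -> dict[str, dict[str, float]]:
    """Group metrics by the campaign goal they are most useful for."""
    return {
        "deliverability": {
            "bounce_rate": rate(stats.bounces, stats.sent),
            "complaint_rate": rate(stats.complaints, stats.delivered),
        },
        "engagement": {
            "click_rate": rate(stats.unique_clicks, stats.delivered),
        },
        "sales": {
            "conversion_rate": rate(stats.orders, stats.unique_clicks),
            "revenue_per_recipient": rate(stats.revenue, stats.delivered),
        },
    }


example = SendStats(sent=10_000, delivered=9_800, unique_clicks=196,
                    complaints=5, bounces=200, orders=14, revenue=980.0)
metrics = goal_metrics(example)
print(metrics["sales"]["revenue_per_recipient"])
```

Whatever denominators you choose, keep them fixed across every report so that month-to-month comparisons stay meaningful.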

How Often Should I Review Email Benchmarks?

Review campaign-level benchmarks after every major send, but do not overreact to one result. A 30-day view helps spot short-term movement, a 90-day view helps reveal momentum, and a 12-month view helps account for seasonality. The more volatile your sending volume is, the more careful you need to be with quick conclusions.
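The three review windows can be sketched as a small calculation. This is a minimal example, assuming each send is stored as a `(send_date, delivered, unique_clicks)` tuple; the function names and the 365-day stand-in for "12 months" are illustrative choices, not a standard.

```python
from datetime import date, timedelta
from typing import Optional

# Each send: (send_date, delivered, unique_clicks). This layout is an assumption.
Send = tuple[date, int, int]


def windowed_click_rate(sends: list[Send], as_of: date, days: int) -> Optional[float]:
    """Pooled click rate for sends in the `days` before `as_of` (inclusive of as_of)."""
    start = as_of - timedelta(days=days)
    delivered = 0
    clicks = 0
    for send_date, send_delivered, send_clicks in sends:
        if start < send_date <= as_of:
            delivered += send_delivered
            clicks += send_clicks
    # None signals "no sends in this window" rather than a misleading 0%.
    return clicks / delivered if delivered else None


def review_windows(sends: list[Send], as_of: date) -> dict[str, Optional[float]]:
    """Click rate over the 30-day, 90-day, and 12-month views."""
    windows = (("30d", 30), ("90d", 90), ("12m", 365))
    return {label: windowed_click_rate(sends, as_of, days) for label, days in windows}


history = [
    (date(2025, 6, 20), 1_000, 30),
    (date(2025, 4, 15), 2_000, 40),
    (date(2024, 9, 1), 5_000, 50),
]
print(review_windows(history, as_of=date(2025, 6, 30)))
```

The rates are pooled (total clicks over total delivered) instead of averaged per send, so one small send with an unusual result cannot dominate the window. That matters most when sending volume is volatile.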

Should I Compare My Emails To Industry Averages?

Yes, but only as a starting point. Industry averages help you understand the wider market, but they do not know your list quality, offer, price point, brand trust, or customer journey. Your best benchmark becomes your own performance by campaign type and audience segment.
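Building that internal benchmark can be as simple as grouping past sends by campaign type and segment. The sketch below assumes each send is a dictionary with `campaign_type`, `segment`, and `click_rate` keys; those names and the use of the median as the baseline are illustrative assumptions.

```python
from collections import defaultdict
from statistics import median


def internal_baselines(sends: list[dict]) -> dict[tuple[str, str], float]:
    """Median click rate for each (campaign_type, segment) pair."""
    grouped: dict[tuple[str, str], list[float]] = defaultdict(list)
    for send in sends:
        key = (send["campaign_type"], send["segment"])
        grouped[key].append(send["click_rate"])
    return {key: median(rates) for key, rates in grouped.items()}


past_sends = [
    {"campaign_type": "newsletter", "segment": "all", "click_rate": 0.02},
    {"campaign_type": "newsletter", "segment": "all", "click_rate": 0.03},
    {"campaign_type": "newsletter", "segment": "all", "click_rate": 0.04},
    {"campaign_type": "abandoned_cart", "segment": "buyers", "click_rate": 0.10},
    {"campaign_type": "abandoned_cart", "segment": "buyers", "click_rate": 0.12},
]
for key, baseline in internal_baselines(past_sends).items():
    print(key, round(baseline, 4))
```

The median is used here because a single outlier send distorts it less than it would distort a mean. A new newsletter is then judged against the newsletter baseline, never against the abandoned cart baseline.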

Why Are My Open Rates High But Clicks Low?

High opens with low clicks usually mean the email earned curiosity but did not create enough motivation to act. The subject line may be stronger than the body copy, or the offer may not be clear enough. It can also mean the email has too many competing links or a call to action that feels weak.

Why Are My Clicks Good But Sales Weak?

Good clicks with weak sales usually point to a post-click problem. The landing page, checkout, booking form, offer, pricing, proof, or page speed may be creating friction. In that case, the email did its job by creating intent, and the next step in the journey needs to be fixed.

What Spam Complaint Rate Should I Stay Under?

Gmail says senders should keep spam rates below 0.10% and avoid reaching 0.30% or higher: Gmail sender FAQ. This should be treated as a serious operational benchmark, not a casual guideline. If complaint rates rise, slow down and review list source, consent, frequency, content relevance, and unsubscribe visibility.
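Those two thresholds are easy to turn into an automatic check. This is a minimal sketch: the `complaint_status` function and its labels are hypothetical, and a rate computed from your own platform's counts is only an approximation, because Gmail measures spam rate in its own Postmaster Tools.

```python
WARNING_THRESHOLD = 0.001   # 0.10%: Gmail asks senders to stay below this
CRITICAL_THRESHOLD = 0.003  # 0.30%: Gmail asks senders never to reach this


def complaint_status(complaints: int, delivered: int) -> str:
    """Classify a complaint rate as 'ok', 'warning', or 'critical'."""
    if delivered <= 0:
        raise ValueError("delivered must be a positive count")
    complaint_rate = complaints / delivered
    if complaint_rate >= CRITICAL_THRESHOLD:
        return "critical"
    if complaint_rate >= WARNING_THRESHOLD:
        return "warning"
    return "ok"


for complaints in (5, 15, 40):
    print(complaints, complaint_status(complaints, delivered=10_000))
```

A "warning" result is the point to slow down and review list source, consent, and frequency, before the rate gets anywhere near the upper threshold.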

Are Automated Emails Better Than Campaign Emails?

Automated emails are often more profitable because they are triggered by behavior or lifecycle timing. Omnisend’s 2025 ecommerce report found that automated emails generated 37% of email sales from just 2% of email volume: Omnisend ecommerce marketing report. That does not mean every automation is good, but it does mean automations deserve separate benchmarks and serious optimization.

How Do I Benchmark A Small Email List?

Small lists need careful interpretation because one or two actions can swing the numbers. Instead of obsessing over every percentage, look for repeated patterns across several sends. Track clicks, replies, conversions, unsubscribes, and complaints, then compare similar campaigns over time.
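To see how much a small list can swing, it helps to put a plausible range around a rate instead of reading it as a single number. The sketch below uses a Wilson score interval, a standard statistical method that is this example's choice and not something the benchmark reports prescribe. The `wilson_interval` name and the 95% level (`z = 1.96`) are assumptions.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion such as clicks / delivered."""
    if trials <= 0:
        raise ValueError("trials must be a positive count")
    p = successes / trials
    z2 = z * z
    denominator = 1 + z2 / trials
    centre = (p + z2 / (2 * trials)) / denominator
    half_width = z * math.sqrt(p * (1 - p) / trials + z2 / (4 * trials * trials)) / denominator
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Same 2% observed click rate, very different certainty.
small_low, small_high = wilson_interval(successes=4, trials=200)
large_low, large_high = wilson_interval(successes=400, trials=20_000)
print(f"200 recipients:    {small_low:.2%} to {small_high:.2%}")
print(f"20,000 recipients: {large_low:.2%} to {large_high:.2%}")
```

With 4 clicks from 200 recipients, the plausible range runs from roughly 0.8% to 5%, so a "drop" from 2% to 1.5% on the next send tells you very little. The same rate on 20,000 recipients produces a much tighter range, which is why repeated patterns across several sends are the safer signal for small lists.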

Should I Remove Inactive Subscribers?

Yes, but do it thoughtfully. Inactive subscribers can lower engagement and hurt deliverability if they never open, click, or respond. Before removing them, run a reactivation sequence, separate recent inactivity from long-term inactivity, and keep buyers or high-value contacts in a different review group.
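The separation described above can be sketched as a simple rule. Everything specific here is an assumption for illustration: the `review_group` function, the group labels, and the 90-day and 180-day cutoffs should be replaced with values that fit your own sending frequency and sales cycle.

```python
from datetime import date
from typing import Optional


def review_group(
    last_engaged: Optional[date],
    is_buyer: bool,
    today: date,
    recent_days: int = 90,       # assumed cutoff for "still active"
    long_term_days: int = 180,   # assumed cutoff for "long-term inactive"
) -> str:
    """Assign a subscriber to a review group before any removal decision."""
    idle_days = None if last_engaged is None else (today - last_engaged).days
    if idle_days is not None and idle_days < recent_days:
        return "active"
    if is_buyer:
        # Inactive buyers and high-value contacts get a separate, manual review.
        return "high_value_review"
    if idle_days is None or idle_days >= long_term_days:
        return "long_term_inactive"
    return "recently_inactive"


today = date(2025, 6, 30)
print(review_group(date(2025, 6, 1), False, today))
print(review_group(date(2025, 3, 1), False, today))
print(review_group(date(2024, 6, 30), False, today))
print(review_group(None, True, today))
```

In this sketch, the recently inactive group is the natural audience for a reactivation sequence, while the long-term inactive group is the candidate for removal if that sequence gets no response.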

What Is The Biggest Benchmarking Mistake?

The biggest mistake is using one average to judge every email. That leads to bad decisions because different emails have different jobs. Benchmark email marketing works when you compare the right metric to the right campaign type, audience segment, and business goal.
