Copywriting AI is no longer a side experiment for content teams. In LinkedIn’s 2025 B2B benchmark research, 95% of B2B marketers said they use AI at least weekly, and the same report found that copywriting, SEO, and task automation are already among the most common use cases. That matters because the market has moved past the question of whether writers will try AI and straight into the harder question of how professionals should use it without lowering quality.

The pressure is coming from both sides. On one side, teams want more output, faster turnaround, and leaner production cycles, and Microsoft’s 2025 Work Trend Index says 82% of leaders see this year as pivotal for rethinking strategy and operations around AI. On the other side, Google makes it clear that using generative AI to publish lots of pages without adding value can violate its scaled content abuse policy, so speed alone is not a strategy.

That tension is exactly why copywriting AI deserves a serious framework instead of shallow prompt hacks. McKinsey still puts the broader enterprise opportunity at trillions in potential productivity gains, but the same research also shows that very few companies are truly mature in deployment. In other words, the advantage is not available to everyone equally, and that creates room for disciplined teams to pull ahead.

Article Outline

- Why Copywriting AI Matters Now

- The Copywriting AI Framework

- Core Components of High-Performing AI Copy

- How Professionals Build a Reliable Workflow

- Channel-Specific Execution and Tool Stack

- Risks, Governance, and What Comes Next

Why Copywriting AI Matters Now

The practical case for copywriting AI is simple: most teams are overloaded, but most teams also cannot afford generic messaging. LinkedIn’s 2025 benchmark found that marketers report about four hours saved per week per task area, adding up to as much as 20 hours weekly across workflows, while SAS reported that 85% of marketers are already using generative AI for marketing in 2025. That combination changes the economics of content production because AI is no longer just a novelty tool for drafting blog intros or headline ideas.

But adoption does not automatically create good copy. Salesforce’s latest State of Marketing says it is based on insights from nearly 4,500 marketers worldwide, and its top-line takeaway is that marketers are trying to deliver more personalized, two-way engagement at scale. That sounds promising until you remember Google’s guidance: AI can help with research and structure, but content that shows little originality or little added value is still a problem for search performance and for users alike.

So the real issue is not whether copywriting AI works. It is whether your process turns AI into leverage or into noise. Teams that use it as a drafting assistant usually get faster, but teams that connect it to positioning, editorial standards, customer insight, and revision discipline are the ones most likely to see measurable gains such as the 13% revenue growth and 13% cost savings LinkedIn reported for stronger strategic AI use.

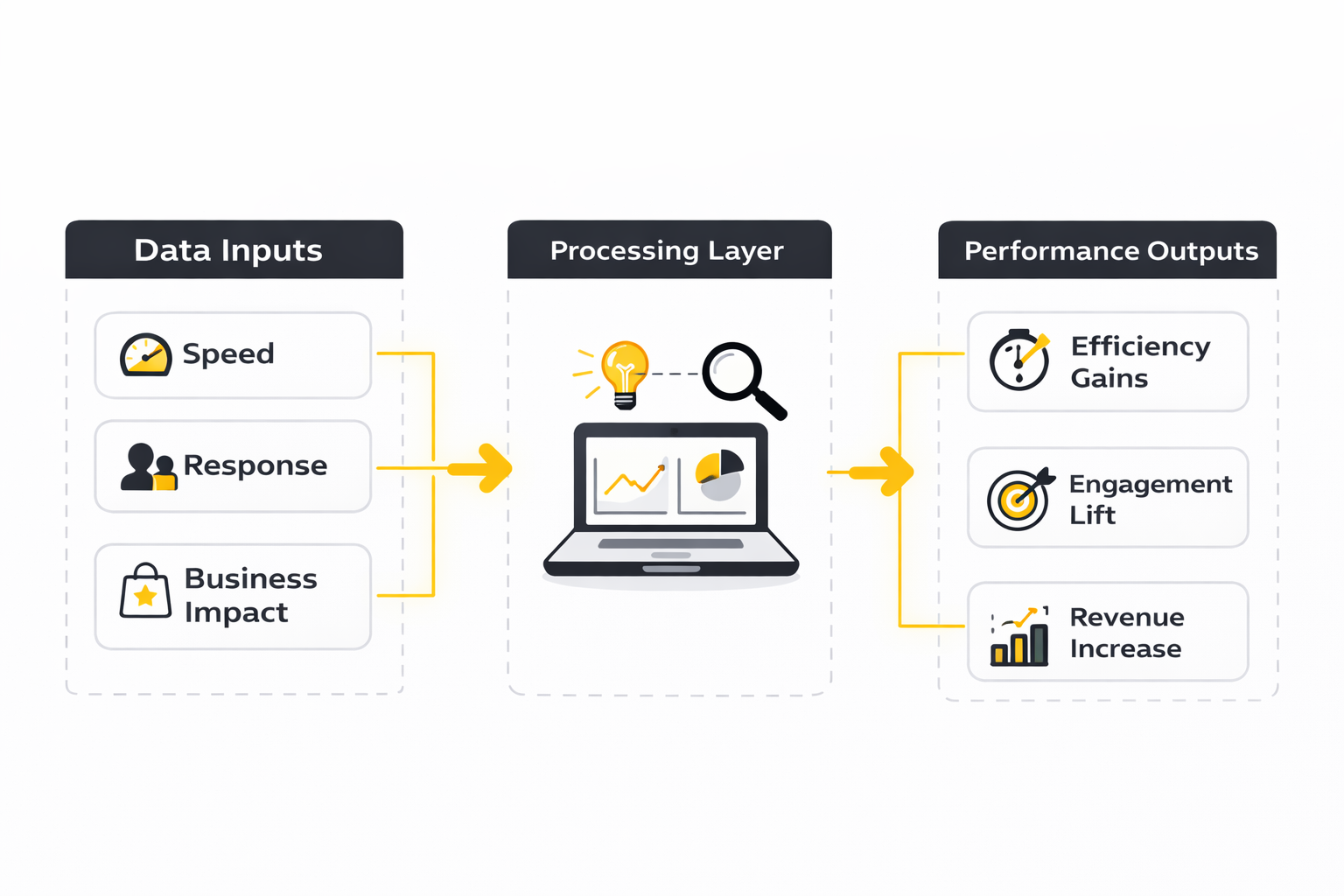

The Copywriting AI Framework

A useful copywriting AI framework starts with a shift in mindset. Microsoft describes the next wave of work as human-led but AI-operated systems, and that is exactly the right lens for copy: the machine expands capacity, but the human still owns judgment, strategy, and final accountability. When teams forget that distinction, they end up publishing polished-looking copy that sounds acceptable on the surface and weak everywhere that actually matters.

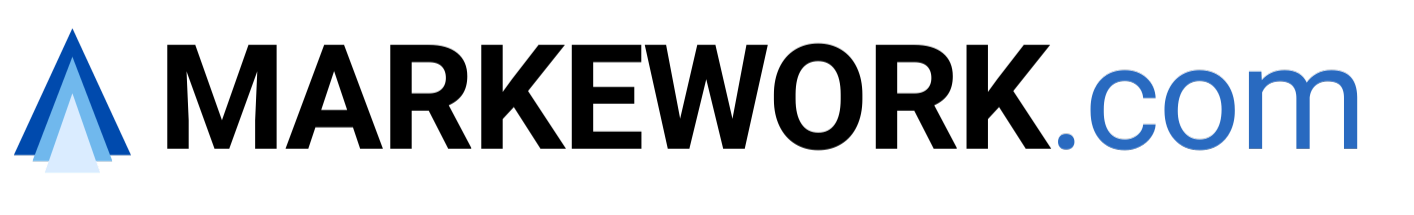

At a high level, the framework for strong AI-assisted copy has four moving parts: direction, inputs, generation, and editorial control. Direction means the strategic brief is clear before prompting begins. Inputs mean the model gets real context such as product detail, audience pain points, offer mechanics, brand voice, and channel constraints, because even Google’s own guidance says generative AI is most useful when it helps with research and structure rather than replacing value creation outright.

The last two parts are where professional implementation usually separates itself from casual use. Generation should produce options, angles, and drafts, not unchecked final copy, and editorial control should tighten claims, sharpen clarity, remove fluff, and verify that the piece says something worth reading. That discipline fits the wider market trend too, because McKinsey notes that almost all companies are investing more in AI while only 1% describe themselves as truly mature in deployment, which means process quality is becoming the real competitive moat.

Core Components of High-Performing AI Copy

The first component is strategic context, because copywriting AI gets dramatically better when it knows what job the copy actually needs to do. OpenAI’s prompt guidance stresses giving the model clear instructions, relevant context, and an explicit output format, while Anthropic’s newer work goes even further by framing strong results as a context engineering problem, not a clever one-line prompt problem. That is why serious teams stop treating AI like a slot machine and start treating it like a junior collaborator that needs a real brief, real constraints, and a real definition of success.

The second component is source material with enough substance to produce something useful. Google’s own guidance says generative AI is especially helpful for research and structure, but it also warns that publishing large amounts of low-value material can cross into scaled content abuse, which means weak inputs eventually create weak and risky outputs. In practice, that means the best copywriting AI workflows feed the model product notes, positioning documents, customer objections, offer mechanics, interview transcripts, sales call themes, and approved claims instead of asking it to invent authority from scratch.

The third component is brand voice control, and this is where a lot of teams still lose the plot. HubSpot’s 2025 marketing guidance makes the point in plain English: even when AI helps generate content, brands still need humans to infuse the work with personality, tone, and values so it does not read like generic machine output. That is not a cosmetic issue either, because once everything sounds interchangeable, conversion usually gets harder, retention gets weaker, and the brand starts competing on volume instead of distinctiveness.

The fourth component is editorial judgment after generation. Jasper’s 2025 marketing research highlights output quality and data privacy as major barriers to scaling AI use, which is exactly why raw drafts should never be the finish line. Good teams review for factual precision, unsupported claims, repetition, weak transitions, search intent fit, and whether the copy says anything a competent human would actually be willing to publish under the company name.

The fifth component is measurement, because copywriting AI is only valuable when it improves outcomes you can see. Adobe’s latest digital trends reporting shows that many organizations are still stuck with linear, resource-heavy content operations, and only a minority are using generative or agentic AI for journey design or omnichannel activation at scale. That gap matters because if you do not measure draft speed, revision load, publish rate, click-through rate, conversion rate, or assisted revenue, then you are not running a system yet. You are just producing more text.

Strategic Direction Comes Before Prompting

A lot of bad AI copy is really bad briefing in disguise. When the task is vague, the promise is fuzzy, and the audience is undefined, copywriting AI will usually produce something smooth, safe, and forgettable. The output may look finished, but it is still strategically empty because the system never got a clear angle to work from in the first place.

Strong direction usually includes five things: audience, pain point, desired action, differentiator, and channel constraint. That is not theory for theory’s sake. It is the minimum amount of context needed to help a model choose the right language, level of detail, and emotional emphasis without drifting into filler or cliché.
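One way to make those five elements hard to skip is to capture them in a small structure and check for blanks before any prompt is written. The sketch below is illustrative only: the `CopyBrief` class, its field names, and the sample values are assumptions made for this example, not part of any specific tool.

```python
from dataclasses import dataclass, fields


@dataclass
class CopyBrief:
    """Hypothetical container for the five direction elements."""
    audience: str
    pain_point: str
    desired_action: str
    differentiator: str
    channel_constraint: str

    def missing_fields(self) -> list[str]:
        """Return the names of any elements that were left blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# Sample values are invented for illustration.
brief = CopyBrief(
    audience="Operations leads at mid-size logistics firms",
    pain_point="Manual dispatch scheduling eats several hours a day",
    desired_action="Book a 20-minute demo",
    differentiator="",  # deliberately left blank to show the check
    channel_constraint="Cold email, under 120 words",
)

print(brief.missing_fields())  # ['differentiator']
```

A check like this does not judge whether the brief is good. It only stops a draft request from going out while one of the five elements is still empty.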

This is also why the best teams separate ideation prompts from production prompts. One prompt can be loose and exploratory when you want angles, hooks, and objections, while the next prompt should be strict and structured when you need a landing page section, ad variation, email sequence, or product description. Treating every request as one giant mega-prompt usually creates messy outputs and messy revision cycles.

Inputs Decide the Ceiling of the Output

Copywriting AI does not magically replace customer knowledge. It compresses and reorganizes what you feed it, and sometimes that is enough to unlock speed, but only when the source material is strong enough to begin with. Teams that provide real customer language from demos, support tickets, surveys, and CRM notes usually end up with sharper copy than teams relying on abstract prompts about being “high converting” or “persuasive.”

That matters even more as personalization expectations rise. Salesforce’s current marketing research centers AI, data, and personalization together, which is a useful reminder that better copy is rarely just a writing problem anymore. It is increasingly a data and workflow problem, where relevance depends on whether the system can connect the message to the right segment, context, and stage of the buying journey.

For practical implementation, the smartest move is usually to build reusable input blocks instead of rewriting prompts from zero every time. A voice guide, offer summary, customer objection bank, approved proof points, and channel rules can all be reused across campaigns. That keeps quality more stable and makes copywriting AI feel less random from one asset to the next.
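As a rough sketch of what reusable input blocks could look like in practice, the example below stores each block once and joins only the ones a given asset needs. The block names, their contents, and the `build_context` helper are all hypothetical. A real team would likely keep these in shared documents or files rather than in code.

```python
# Hypothetical reusable blocks; the contents are placeholders for illustration.
INPUT_BLOCKS = {
    "voice_guide": "Plain, direct, no hype. Short sentences.",
    "offer_summary": "14-day free trial, no credit card required.",
    "objection_bank": "- 'We already have a tool for this.'\n- 'Setup sounds slow.'",
    "proof_points": "- Approved claim: onboarding takes under one day.",
    "channel_rules": "Email: subject under 50 characters, one call to action.",
}


def build_context(block_names: list[str], blocks: dict[str, str] = INPUT_BLOCKS) -> str:
    """Join the requested blocks into one labeled context string."""
    unknown = [name for name in block_names if name not in blocks]
    if unknown:
        raise KeyError(f"Unknown input blocks: {unknown}")
    sections = [
        f"## {name.replace('_', ' ').title()}\n{blocks[name]}" for name in block_names
    ]
    return "\n\n".join(sections)


# An email asset reuses three of the five blocks.
email_context = build_context(["voice_guide", "offer_summary", "channel_rules"])
print(email_context)
```

The point of the design is that the voice guide or offer summary is edited in one place, and every campaign that reuses it picks up the change.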

How Professionals Build a Reliable Workflow

Professional teams do not use copywriting AI as a one-step writer. They use it inside a workflow that narrows risk and compounds judgment at every stage. That difference is easy to miss from the outside, but it is usually the reason one team gets usable drafts in minutes while another spends hours cleaning up polished nonsense.

A reliable workflow usually starts before any prompt is written. Someone defines the goal, pulls together source material, and decides what kind of draft is needed, because a conversion-focused email, a search-driven article section, and a paid social hook should not be generated from the same default instruction. Once that prep work exists, AI can move fast without dragging the team into endless rework.

The other big shift is that professionals build review into the workflow by default. Jasper’s 2025 findings point to output quality as a major scaling barrier, and Google’s guidance makes it clear why unchecked bulk production is a bad bet. So the workflow has to include a human pass for clarity, accuracy, originality, compliance, and brand fit before anything goes live.

A Practical Production Sequence

Start with a brief, not a prompt. Write down the audience, the problem, the desired action, the offer, the proof available, and the channel where the copy will live. That single step does more to improve copywriting AI output than most prompt tricks people obsess over online.

Next, assemble the context pack. This can include product information, differentiators, testimonials that are already approved for use, customer language, competitor positioning notes, and voice guidance. Anthropic’s context engineering work is useful here because it reflects what experienced teams already know: the quality of what the model sees strongly shapes the quality of what it can produce.

Then generate multiple drafts with different jobs. One version can optimize for clarity, another for emotional tension, and another for brevity or stronger offer framing. This is where copywriting AI shines, because it can create structured variation quickly, giving the human editor better raw material to work with instead of locking the team into the first pass.

After that, edit like a professional, not like a spellchecker. Cut repetition, verify claims, tighten transitions, remove empty intensifiers, and rewrite anything that sounds like it was written to satisfy the prompt rather than persuade the reader. The editor’s job is not to make the copy feel human in some vague sense. The job is to make the copy useful, credible, and specific enough to earn attention.

Finally, send the asset into a measured distribution system instead of publishing it in isolation. That could mean an email workflow in Brevo, social scheduling in Buffer, chatbot-assisted lead qualification through Chatbase, or a fuller funnel build inside GoHighLevel. The point is not the software itself. The point is that good copy compounds when it sits inside a workflow that can test, route, personalize, and learn.

Build the System Before You Chase Speed

The fastest way to waste copywriting AI is to skip the operating system around it. OpenAI’s current guidance still comes back to the same basics: clear instructions, strong context, and explicit output criteria produce more reliable results than vague prompting ever will. That sounds simple, but in practice it means your team needs a repeatable workflow before it needs more tools. (Source: OpenAI’s prompt engineering guide.)

A practical implementation starts with role clarity. Someone owns the brief, someone owns the source material, someone owns the editorial pass, and someone owns performance once the copy goes live. When those responsibilities blur together, copywriting AI tends to generate a lot of drafts and very little progress. (Source: Anthropic’s context engineering write-up.)

This is also where a lot of teams get a little too excited about automation. Google is clear that AI can support content creation, but the outcome still has to be useful, original enough to deserve attention, and aligned with what the user actually needs. That means the workflow has to protect quality at the same time it increases speed. (Source: Google’s generative AI content guidance.)

How Professionals Run Copywriting AI Day to Day

A professional copywriting AI process is not complicated, but it is disciplined. The goal is to move from raw information to publishable assets with fewer delays, fewer rewrites, and less guesswork. Once that system is in place, the tool becomes genuinely useful instead of feeling impressive only in demos.

The process usually works best when it moves through five stages in order: brief, context assembly, draft generation, human editing, and channel deployment. Adobe’s recent enterprise material around the AI-powered content supply chain keeps pushing the same idea from a bigger-company angle: scale only works when the workflow itself is designed to support it. That applies just as much to a solo operator writing sales emails as it does to a large content team shipping across channels. (Source: Adobe’s AI-powered content supply chain session.)

Step 1: Write the Brief Like It Will Control the Outcome

Start with the job the copy needs to do, not with the model. Define the audience, the stage of awareness, the offer, the objection profile, the proof you can legally and credibly use, and the action you want the reader to take. If those details are missing, copywriting AI usually fills the gaps with safe assumptions, and safe assumptions are exactly how bland marketing gets published. (Source: OpenAI’s best practices for prompt engineering.)

A good brief also forces sharper decisions before generation begins. You have to decide whether this asset is trying to educate, convert, qualify, re-engage, or support a sale already in motion. That one decision changes the language, the structure, and the amount of friction the copy should create or remove. (Source: OpenAI’s prompt engineering guide.)

This step matters because most prompt problems are actually briefing problems. Teams often blame the model when the real issue is that nobody defined the angle tightly enough. Fix the brief first, and the draft quality usually improves fast.

Step 2: Assemble a Real Context Pack

Once the brief is clear, load the model with information that actually deserves to shape the copy. That includes customer research, product notes, pricing logic, offer mechanics, approved testimonials, brand voice examples, sales call patterns, and common objections. Anthropic’s framing of context as a finite resource is useful here, because it pushes teams to curate inputs instead of dumping random documents into the model and hoping for magic. (Source: Anthropic’s context engineering write-up.)

This is also the point where many weak AI workflows quietly break. If the model gets generic input, it produces generic output with slightly better formatting. If it gets sharp, decision-ready context, it has a much better chance of generating copy that feels specific, relevant, and commercially useful.

Over time, the smartest move is to turn those inputs into reusable assets. A voice guide, proof library, objection database, approved claim sheet, and channel rules can be reused across campaigns so the quality of your copywriting AI output becomes more stable. That is how you reduce randomness without losing speed.

Step 3: Generate Variations With a Clear Job for Each Draft

The best way to use copywriting AI is rarely to ask for one final answer. It works better when you ask for structured variation, because that gives the human editor better raw material to choose from and combine. One draft can aim for clarity, another can push emotional tension, another can compress the message for paid traffic, and another can expand it for educational content. (Source: OpenAI’s prompt engineering guide.)

This is where professionals save serious time. Instead of staring at a blank page and trying to guess the right angle on the first attempt, they create several viable angles quickly and then edit from strength. The model is doing exploration, not making the final publishing decision.

That distinction matters a lot. When teams ask AI for the finished answer too early, they often lock themselves into mediocre copy that looks polished enough to keep but weak enough to underperform. Multiple draft paths keep the process flexible and make the final edit much stronger.
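The idea of giving each draft its own job can be sketched as a set of prompts that share one brief and one context pack but differ in a single instruction. Everything named here is an assumption for illustration: the `DRAFT_JOBS` wording, the `variation_prompts` helper, and the sample brief. The example only builds prompt text and does not call any model API.

```python
# Hypothetical job instructions, one per draft path described in this step.
DRAFT_JOBS = {
    "clarity": "Explain the offer as plainly as possible.",
    "emotional_tension": "Lead with the cost of leaving the problem unsolved.",
    "paid_compression": "Compress the message to fit a short paid ad.",
    "educational_expansion": "Expand the message into a teaching-style section.",
}


def variation_prompts(
    brief: str, context: str, jobs: dict[str, str] = DRAFT_JOBS
) -> dict[str, str]:
    """Return one prompt per draft job, all sharing the same brief and context."""
    return {
        job: f"Brief:\n{brief}\n\nContext:\n{context}\n\nJob for this draft: {instruction}"
        for job, instruction in jobs.items()
    }


prompts = variation_prompts(
    brief="Get trial signups from operations leads.",
    context="Approved claim: onboarding takes under one day.",
)
for job, prompt in prompts.items():
    print(f"--- {job} ---\n{prompt}\n")
```

Keeping the brief and context fixed while varying only the job makes the resulting drafts comparable, which is what lets an editor choose and combine rather than start over.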

Step 4: Edit for Specificity, Credibility, and Friction

This is the step casual users underestimate. Raw AI text can sound fluent while still being soft, repetitive, overexplained, or just a little too eager to agree with the prompt. Human editing is where the copy becomes commercially serious.

A strong editorial pass usually does five things. It removes padded phrases, sharpens claims, checks every factual statement, aligns the voice with the brand, and makes sure the reader always knows what to do next. Google’s guidance on AI-generated content makes this part non-negotiable, because usefulness and originality still determine whether the asset deserves to rank, convert, or be trusted in the first place. (Source: Google’s generative AI content guidance.)

This is also the moment to protect the brand from hidden sloppiness. Delete unsupported claims, flatten exaggerated hype, and rewrite anything that sounds like it was generated to please the model rather than persuade a real person. Good editing is not cleanup. It is where value gets added.
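Part of this pass can be supported by a simple pre-check that flags padded phrases and empty intensifiers before a human reads the draft. The sketch below is a minimal example under stated assumptions: the watch list is invented, and a phrase counter is no substitute for the editorial judgment this step describes.

```python
import re

# Illustrative watch list; a real team would maintain its own.
WATCH_PHRASES = [
    "in today's fast-paced world",
    "game-changing",
    "revolutionary",
    "very",
    "truly",
    "unlock the power",
]


def flag_phrases(draft: str, phrases: list[str] = WATCH_PHRASES) -> list[tuple[str, int]]:
    """Return (phrase, count) for each watch phrase found, ignoring case."""
    hits = []
    for phrase in phrases:
        # Word boundaries keep "very" from matching inside "every".
        pattern = r"\b" + re.escape(phrase) + r"\b"
        count = len(re.findall(pattern, draft, flags=re.IGNORECASE))
        if count:
            hits.append((phrase, count))
    return hits


draft = "Our truly game-changing platform is very fast and very simple."
print(flag_phrases(draft))  # [('game-changing', 1), ('very', 2), ('truly', 1)]
```

A flagged phrase is a prompt for the editor to look again, not an automatic deletion. Some uses will be fine in context.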

Step 5: Deploy the Copy Into a Working Distribution System

Copy does not perform in a vacuum, so implementation is not finished when the draft is approved. The next move is to connect the asset to the channel where it will actually be tested, personalized, scheduled, or automated. That is where workflow quality starts compounding.

For social distribution, a tool like Buffer fits when you need to turn one approved message into platform-specific posts and keep a consistent publishing calendar. Buffer’s own product pages position its AI assistant around brainstorming, rewriting, and creating channel-ready content, which makes it more useful as a deployment layer than as a source of strategy. (Source: Buffer’s AI Assistant page.)

For conversational follow-up, ManyChat makes sense when your copy needs to keep working after the click inside DMs, comments, or messaging flows. Its official positioning is all about automating two-way conversations across channels, which matters because strong copy often fails when the handoff into conversation is clunky or slow. (Source: ManyChat homepage.)

For landing page execution, Replo is a natural fit if you want the copy and page experience to move together. Replo’s current product messaging leans heavily into AI-built, on-brand pages and faster testing, which is useful when you want copywriting AI to support conversion experiments rather than sit in a document waiting for design handoff. (Source: Replo AI Builder page.)

For businesses that want the CRM, funnel, messaging, workflow, and AI layer under one roof, GoHighLevel becomes more relevant. HighLevel now positions its AI stack around voice, chat, funnels, content, reviews, and workflow automation, which makes it useful when the copy is only one part of a larger revenue system. (Source: HighLevel AI tools page.)

Channel-Specific Execution and Tool Stack

The reason channel-specific execution matters is simple: good copy changes shape depending on where it lives. A landing page needs sequencing and proof, email needs timing and momentum, social needs compression and pattern interruption, and DM automation needs conversational clarity. Copywriting AI becomes much more effective when the prompt and the review standard are tied to the channel instead of treated like one generic writing task.

For email, the main goal is not to make every line sound clever. The goal is to create a message that gets opened, read, and acted on without losing trust. That is why AI-assisted email works best when the brief includes the segment, the trigger, the offer logic, and the exact next step, especially when the copy will be deployed inside systems like Brevo or Moosend where testing and automation continue after the copy is written.

For social content, the win is usually speed plus consistency, not literary brilliance. Buffer’s current positioning around creating, organizing, and repurposing posts across channels fits that reality well, because social teams often need a system that turns one approved message into multiple publishable variants quickly. (Source: Buffer homepage.)

For funnels and lead qualification, the stack becomes more integrated. A strong offer page in ClickFunnels or Systeme.io can handle the page experience, while conversational tools and CRM automation keep the message moving after the form fill or reply. The key idea is that copywriting AI should not stop at draft creation. It should feed a process that can actually turn attention into response, and response into revenue.

What the Performance Data Actually Says

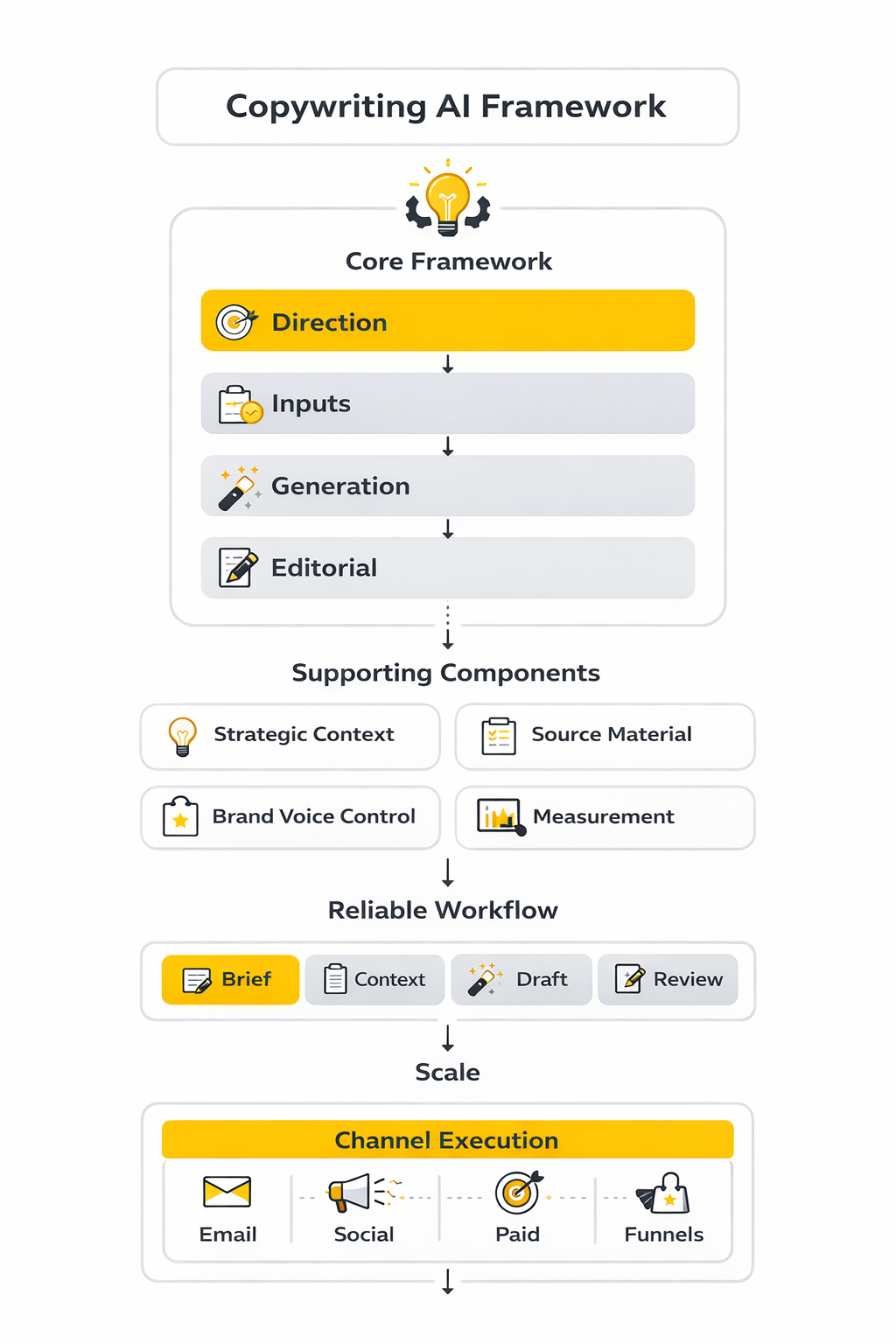

The easiest way to misuse copywriting AI is to celebrate output while ignoring outcomes. More drafts, more variants, and faster turnaround can look impressive inside the team, but those numbers only matter if they improve engagement, conversion, retention, or revenue. That is why the best measurement systems separate efficiency metrics from performance metrics instead of treating them like the same thing.

The market data already points in that direction. LinkedIn’s 2025 B2B benchmark found that marketers report saving about four hours per week per task area, with totals reaching up to 20 hours weekly across workflows, while Jasper’s 2025 AI marketing research lists productivity and improved ROI among the most frequently cited benefits of AI adoption. Useful, yes, but those are still intermediate signals. They tell you the machine is helping people move faster, not that the copy itself is winning in market.

That distinction matters because speed can mask quality problems for a while. SAS found that 90% of marketers trust agentic AI to some extent, but that trust is conditional on human oversight, which is a pretty direct signal that leaders still see quality control as part of the operating model rather than an optional extra. In plain English, the teams getting value from copywriting AI are not the ones publishing the most words. They are the ones turning time saved into better decisions, tighter testing, and stronger assets.

Productivity Metrics Tell You If the System Is Working

Start by measuring cycle-time reduction because it is the clearest early sign that your implementation is doing something useful. Track time to first draft, time to approved draft, number of revision rounds, and the percentage of assets that make it from brief to publish without getting stuck. These numbers matter because they tell you whether copywriting AI is reducing friction or just moving the friction later into editing and approvals.

A second useful layer is throughput quality. Count how many assets a team ships per week or month, but put that beside editor rejection rate, factual correction rate, legal or compliance flags, and how often a draft needs substantial rewriting. Adobe’s current enterprise view on AI content operations keeps coming back to workflow maturity for exactly this reason: scale is only valuable when the system can produce more without creating downstream chaos.

This is the action point most teams miss. If your time-to-draft is falling but editor intervention is rising, you do not have a copywriting AI success story yet. You have an upstream acceleration problem that is being paid for downstream by humans.
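The check described above, faster drafts paired with heavier editing, is easy to compute once the two metrics are tracked per asset. The sketch below assumes a hypothetical record format with `hours_to_first_draft` and `revision_rounds`. The numbers are made up to show the pattern, not drawn from any benchmark.

```python
from statistics import mean

# Hypothetical per-asset records for two periods; the numbers are made up.
before_ai = [
    {"hours_to_first_draft": 5.0, "revision_rounds": 2},
    {"hours_to_first_draft": 6.0, "revision_rounds": 2},
]
with_ai = [
    {"hours_to_first_draft": 1.5, "revision_rounds": 4},
    {"hours_to_first_draft": 2.5, "revision_rounds": 4},
]


def summarize(records):
    """Average draft time and revision rounds across a set of assets."""
    return {
        "avg_hours_to_first_draft": mean(r["hours_to_first_draft"] for r in records),
        "avg_revision_rounds": mean(r["revision_rounds"] for r in records),
    }


def friction_moved_downstream(baseline, current):
    """True when drafts got faster but revision load went up."""
    b, c = summarize(baseline), summarize(current)
    return (
        c["avg_hours_to_first_draft"] < b["avg_hours_to_first_draft"]
        and c["avg_revision_rounds"] > b["avg_revision_rounds"]
    )


print(summarize(before_ai))
print(summarize(with_ai))
print(friction_moved_downstream(before_ai, with_ai))  # True
```

In this invented data set, average draft time drops from 5.5 hours to 2 hours while revision rounds double, which is exactly the case where the time saved upstream is being spent again in editing.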

The Analytics System That Actually Helps

The measurement stack for copywriting AI does not need to be fancy, but it does need to connect content production to business impact. At minimum, you want one layer for workflow efficiency, one layer for audience response, and one layer for conversion or pipeline contribution. When those layers are disconnected, teams end up arguing about whether AI is helping without having a shared definition of help.

The first layer is operational. That includes time saved, asset volume, edit load, and publishing speed. The second layer is behavioral. That includes impressions, click-through rate, scroll depth, click-to-open rate, reply rate, and landing page engagement depending on channel. The third layer is commercial. That includes assisted conversions, demo bookings, qualified leads, revenue per send, and win-rate influence where your attribution model can support it.

Once that stack is in place, interpretation becomes much cleaner. If copywriting AI improves volume but weakens click-through rate, the message quality probably dropped. If click-through rate rises but conversion rate falls, the hook may be stronger than the offer-page follow-through. If time saved rises and conversion efficiency holds steady, that is usually a real operational win because the same performance is being produced with less effort.
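Those three readings can be written down as explicit rules so the team interprets the numbers the same way each time. This is a minimal sketch: the `interpret` function, the metric keys, and the 2% band used to define "holds steady" are all assumptions for illustration and would need tuning against real data.

```python
def interpret(changes: dict[str, float], steady_band: float = 0.02) -> list[str]:
    """Map relative metric changes (0.10 means +10%) to the readings above.

    steady_band is an assumed tolerance for treating conversion as unchanged.
    """
    readings = []
    if changes["volume"] > 0 and changes["click_through_rate"] < 0:
        readings.append("Volume up, CTR down: message quality probably dropped.")
    if changes["click_through_rate"] > 0 and changes["conversion_rate"] < 0:
        readings.append(
            "CTR up, conversion down: hook may be stronger than the offer-page follow-through."
        )
    if changes["time_saved"] > 0 and abs(changes["conversion_rate"]) <= steady_band:
        readings.append("Time saved up, conversion steady: likely a real operational win.")
    return readings


# Invented period-over-period changes for one campaign.
example = {
    "volume": 0.30,
    "click_through_rate": 0.05,
    "conversion_rate": 0.0,
    "time_saved": 0.25,
}
for line in interpret(example):
    print(line)
```

With these sample inputs only the third rule fires. More than one rule can fire at once, in which case the readings should be weighed together rather than read in isolation.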

Channel Benchmarks Need Context, Not Blind Comparison

Benchmarks are useful, but only if you understand what they can and cannot tell you. DMA’s 2025 email benchmarking data reported average open rates of 35.9%, unique click rates of 2.3%, and delivery rates of 98% in 2024 campaign data, while MailerLite’s 2025 benchmark analysis reported a higher 43.46% average open rate and a 2.09% click rate. Those numbers are directionally helpful, but they are not interchangeable because methodology, list composition, privacy effects, and industry mix all change the baseline.

That is exactly why copywriting AI teams should benchmark against their own historical performance first. A campaign that moves your click-to-open rate from 5.5% to 7% can be more meaningful than comparing yourself to a public average built from a completely different audience and send pattern. External benchmarks help you spot whether you are wildly off-course, but internal benchmarks tell you whether the new workflow is actually improving your business.
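To make the internal comparison concrete, the move from 5.5% to 7% can be expressed both as an absolute change in percentage points and as a relative lift against your own baseline. The helper name below is an assumption; the arithmetic is standard.

```python
def relative_lift(baseline: float, new: float) -> float:
    """Relative change of `new` against `baseline`, as a fraction."""
    return (new - baseline) / baseline


baseline_ctor = 5.5  # click-to-open rate before, in percent
new_ctor = 7.0       # click-to-open rate after, in percent

absolute_change = new_ctor - baseline_ctor
lift = relative_lift(baseline_ctor, new_ctor)

print(f"Absolute change: {absolute_change:.1f} percentage points")  # 1.5
print(f"Relative lift: {lift:.1%}")  # 27.3%
```

A 1.5-point gain sounds small next to a public open-rate average, but relative to this baseline it is roughly a 27% improvement, which is the number that reflects the workflow change.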

The same logic applies to content and landing pages. Google’s people-first content guidance is a reminder that search performance is still tied to usefulness and reliability, not to whether AI was involved in the drafting process. So for search-driven assets, watch impressions, click-through rate from search, engagement after the click, and whether the page earns the kind of response signals that suggest it truly answered the query.

What a Good Lift Looks Like

A good lift is one that survives context. That means it holds after enough traffic, after enough sends, and after you rule out obvious confounders like seasonality, promotion overlap, list quality changes, or offer changes happening at the same time. The more integrated your marketing system becomes, the easier it is to accidentally credit copywriting AI for gains that were really caused by audience selection, send timing, or pricing changes.
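One part of "enough traffic" can be checked with a standard two-proportion z-test. It asks whether the gap between two rates is larger than chance alone would plausibly produce at the given sample sizes. The sketch below uses only the Python standard library. The function name and the sample counts are assumptions, and the test says nothing about seasonality, offer changes, or the other confounders listed above.

```python
from math import erf, sqrt


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    standard_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / standard_error
    # Standard normal CDF evaluated at |z|, computed via the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return 2 * (1 - cdf)


# Same rates (5.5% vs 7%) at two very different sample sizes; counts are invented.
small_p = two_proportion_p_value(11, 200, 14, 200)
large_p = two_proportion_p_value(550, 10_000, 700, 10_000)

print(f"200 per variant:    p = {small_p:.3f}")
print(f"10,000 per variant: p = {large_p:.6f}")
```

At 200 recipients per variant the same gap is well within what chance could produce, while at 10,000 per variant it is not. That is the sense in which a lift has to hold after enough sends before it counts.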

This is why experimentation velocity matters, but not by itself. Optimizely’s 2025 benchmark around its AI workflows emphasizes experiment speed, success rate, marketing output, and content quality as the metrics that matter together, which is a useful framing because faster testing is only valuable when it improves the hit rate of good decisions. More tests with worse hypotheses are not a win.

In practice, a strong signal usually looks like this: draft time falls, test volume rises, click or reply rate improves, and downstream conversion stays flat or improves. That is the pattern you want. If the only thing moving is output volume, the model may be helping your team feel productive while quietly making the audience less responsive.

The Numbers Should Drive Process Changes

Measurement is only useful when it changes behavior. If editor rejection is high, improve the brief and tighten the context pack. If email opens are fine but clicks are weak, the subject line is probably doing its job while the body copy or offer framing is not. If landing page engagement looks healthy but form completion is poor, the copy may be attracting attention without reducing enough friction near the decision point.

The same principle applies to tooling. If you are deploying email-heavy campaigns, a platform like Brevo gives you a benchmark reference plus send-level measurement that helps connect copy to response. If your bottleneck is social scheduling and repurposing, Buffer is more relevant because the operational metric is consistency across channels, not just headline quality. The tool should follow the bottleneck, not the other way around.

That is the bigger takeaway from the data. Copywriting AI should not be measured like a toy and it should not be defended with vague claims about the future. Measure how fast it helps you move, how well the audience responds, and whether the business result improves. Everything else is noise.

Risks, Governance, and What Comes Next

Once copywriting AI is running across multiple channels, the challenge changes. It is no longer about whether the tool can write a decent draft. It becomes a question of control: who approves claims, where the model gets its information, how brand voice stays consistent, and what happens when speed starts outrunning judgment.

That is where weak systems begin to break. McKinsey’s 2025 global survey found that companies capturing more value from generative AI are redesigning workflows and assigning senior leaders to governance, which is a strong signal that mature use is becoming operational, not experimental. In other words, the teams getting serious gains from copywriting AI are not just prompting harder. They are building rules around how the work gets done. (Source: McKinsey’s 2025 State of AI report.)

Salesforce is pointing in the same direction from the marketing side. Its newest State of Marketing report is built on insights from nearly 5,000 marketers worldwide and centers AI, unified data, and personalization as connected priorities rather than separate projects. That matters because copywriting AI becomes much harder to govern once it is generating content inside email, CRM, chat, landing pages, and follow-up sequences at the same time. (Source: Salesforce State of Marketing.)

The Biggest Strategic Tradeoff Is Speed Versus Control

The most obvious tradeoff is speed versus oversight. Faster drafting sounds like a pure win until teams realize they now need stronger review standards, cleaner source libraries, and better approval paths to stop weak or risky content from slipping through. If those controls are not built in, copywriting AI can create a lot of movement without creating much trust.

The real issue is not only hallucinated facts. The subtler failures are often more dangerous. Models can overstate confidence, flatten nuance, smooth away legal caveats, and make average ideas sound more credible than they deserve. That is why the FTC has kept focusing on deceptive AI claims and data-handling practices, which should be a warning for any brand using AI-generated marketing to make aggressive promises. (Source: FTC AI resource hub.)

This should directly shape your operating model. The faster the workflow gets, the more explicit your claim rules need to be. Approved proof, approved comparisons, approved outcomes, and approved testimonial usage should all be documented before the model starts generating copy at scale.

Brand Voice Breaks Quietly Before It Breaks Obviously

Most teams expect the biggest risk to be factual error, but brand erosion is often the more expensive problem. Copywriting AI can sound polished while slowly flattening the tone, structure, and point of view that made the brand recognizable in the first place. The result is not usually a disaster. It is something worse: a long drift into sameness.

That drift gets harder to notice when output volume rises. A team might be publishing more landing pages, more lifecycle emails, and more social posts than ever before while the brand is becoming less memorable with each cycle. This is one reason SAS found that marketers furthest along with generative AI are also more likely to have stronger governance, literacy, and risk management capabilities in place. (Source: SAS Marketers and AI 2025 report.)

The practical fix is not vague advice about staying authentic. It is a real voice system. That means approved examples, banned phrases, preferred sentence rhythms, evidence standards, emotional boundaries, and channel-specific tone rules. Without that layer, copywriting AI will usually converge toward whatever the model sees as safe and broadly acceptable, which is rarely where memorable marketing lives.
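One piece of a voice system, the banned-phrase list, is easy to check automatically before a draft reaches a human editor. The sketch below is a minimal illustration, not a full voice checker: the phrase list and the function name `find_banned_phrases` are hypothetical, and a real list would come from the team's own style guide.

```python
import re

# Hypothetical banned phrases, used only for illustration.
BANNED_PHRASES = [
    "game-changer",
    "unlock the power",
    "in today's fast-paced world",
]


def find_banned_phrases(draft, banned=BANNED_PHRASES):
    """Return the banned phrases found in the draft, ignoring case."""
    hits = []
    for phrase in banned:
        # Word boundaries stop a phrase from matching inside a longer word.
        pattern = r"\b" + re.escape(phrase) + r"\b"
        if re.search(pattern, draft, flags=re.IGNORECASE):
            hits.append(phrase)
    return hits


if __name__ == "__main__":
    draft = "Our new planner is a Game-Changer for busy teams."
    print(find_banned_phrases(draft))
```

A check like this only covers the mechanical part of the voice rules. Sentence rhythm, evidence standards, and emotional boundaries still need approved examples and an editor's judgment.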

Scaling Fails When Teams Scale Prompts Instead of Inputs

A lot of teams try to scale copywriting AI by building larger prompt libraries. That helps for a while, but it eventually hits a ceiling because prompts do not solve weak information architecture. What really scales is a better source system: cleaner customer research, better message hierarchies, stronger proof libraries, and more reliable content blocks.

This is where the conversation shifts from writing to infrastructure. IBM’s recent material on enterprise AI governance frames scalable AI around governance, security, and data foundations, and that is useful even for marketing teams because copy quality depends heavily on the quality and traceability of the underlying information. You do not get reliable output from messy inputs just because the model sounds fluent. (Source: IBM on scalable enterprise AI governance.)

That is also why the best operators build reusable systems around copywriting AI instead of treating each campaign as a fresh start. Offer libraries, persona notes, objection banks, approved case-study fragments, and brand-safe CTAs create a much stronger base than endlessly rewriting prompt templates. The prompt still matters, but it matters less than most people think once you are working at scale.

Data Privacy and Access Rules Need To Be Boring and Strict

This part is not glamorous, but it is critical. The more useful copywriting AI becomes, the more temptation there is to feed it customer records, internal strategy documents, call transcripts, revenue data, and sensitive roadmap details. That can improve relevance, but only if access policies are tight and the organization knows exactly what can and cannot be used.

NIST’s AI Risk Management Framework is helpful here because it pushes organizations to govern, map, measure, and manage AI risks as a system rather than as isolated incidents. That mindset fits content operations well. If teams are going to use AI in copy workflows tied to CRM or customer communications, they need documented rules for data use, review, escalation, and accountability. (Source: NIST AI Risk Management Framework.)

The practical move is straightforward. Separate public marketing inputs from sensitive internal inputs, restrict access by role, and keep a documented list of what source types are approved for prompts, what source types require redaction, and what source types are off-limits entirely. Copywriting AI becomes much safer once data discipline is treated like part of content quality, not like an IT problem happening somewhere else.
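The three-way split described above (approved, requires redaction, off-limits) can be written down as a small lookup that a prompt-building script consults before it includes any material. This is a sketch under stated assumptions: the source-type names, the `Policy` enum, and both helper functions are hypothetical, and role-based access would sit on top of it.

```python
from enum import Enum


class Policy(Enum):
    APPROVED = "approved"
    REDACT = "requires_redaction"
    OFF_LIMITS = "off_limits"


# Hypothetical source types; each team would maintain its own documented list.
SOURCE_POLICY = {
    "public_web_copy": Policy.APPROVED,
    "published_case_study": Policy.APPROVED,
    "call_transcript": Policy.REDACT,
    "customer_record": Policy.OFF_LIMITS,
    "roadmap_document": Policy.OFF_LIMITS,
}


def policy_for(source_type):
    """Look up a source type; anything not on the list is treated as off-limits."""
    return SOURCE_POLICY.get(source_type, Policy.OFF_LIMITS)


def allowed_in_prompt(source_type, redacted=False):
    """Return True only if this material may be placed in a prompt."""
    policy = policy_for(source_type)
    if policy is Policy.APPROVED:
        return True
    if policy is Policy.REDACT:
        return redacted
    return False


if __name__ == "__main__":
    print(allowed_in_prompt("call_transcript"))
    print(allowed_in_prompt("call_transcript", redacted=True))
```

Defaulting unknown source types to off-limits is a deliberate choice in this sketch: new kinds of material have to be added to the documented list before anyone can use them.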

Expert-Level Guidance for Teams That Want To Scale Without Getting Sloppy

At a certain point, the next improvement is not better wording. It is better orchestration. The team needs tighter handoffs between research, drafting, review, publishing, and measurement so that the system learns over time instead of repeating the same mistakes faster.

That means the most useful next step is usually not another writing tool. It is a workflow layer that connects the copy to segmentation, approvals, campaign logic, and feedback loops. For some teams, that might look like funnel and CRM orchestration in GoHighLevel. For others, it might mean page testing in Replo, messaging automation in ManyChat, or distribution and scheduling in Buffer. The right stack depends on where the bottleneck really is.

The key is to stop thinking about copywriting AI as a writer and start thinking about it as part of a content operating system. Once you make that shift, better decisions get easier. You can define what deserves automation, what requires a human editor, what must be approved by legal or product marketing, and what metrics prove the system is working.

That is also where a lot of false excitement falls away. Not every task should be automated. Not every asset needs a model in the loop. Some high-stakes pages, high-value nurture sequences, or founder-led thought leadership pieces still benefit from slower, more deliberate writing. Strong teams know the difference, and that judgment is part of the advantage.

What Smart Teams Will Do Next

The next phase of copywriting AI will reward teams that combine three things well: strong data hygiene, strong editorial standards, and strong workflow design. The technology is improving quickly, but the moat is not the model alone. The moat is the system wrapped around it.

That is why the winning move from here is not to ask whether AI can replace writers. It is to decide where AI should accelerate research, where it should create options, where humans should shape the final argument, and where governance needs to stay firm even when the workflow gets faster. Teams that answer those questions clearly will scale better and sound better at the same time.

That brings us to the final piece of the article: the practical questions people still ask when they want to use copywriting AI seriously without wrecking quality, trust, or results.

FAQ for the Complete Guide

Is copywriting AI actually good enough for professional work?

Yes, but only when it is used inside a real process. Google’s guidance makes it clear that the issue is not whether AI was involved, but whether the final content is helpful, reliable, and made for people instead of search engines. That means copywriting AI is strong enough for professional work when strategy, inputs, editing, and review are all handled properly. (Source: Google’s people-first content guidance.)

Will Google penalize content written with AI?

Not just because AI was used. Google has repeated that automation is not the core problem, while low-value, search-engine-first, or scaled spam content absolutely is. So the right question is not “Was AI involved?” but “Did this page add something useful and specific for the reader?” (Sources: Google’s guidance about AI-generated content; Google’s people-first content guidance.)

What is the best way to start using copywriting AI without making a mess?

Start with one workflow, not your whole marketing department. Pick a repeatable asset like email campaigns, paid ad variations, or landing page sections, then build a brief template, a context pack, and an approval process around it. That approach gives you measurable results fast without turning the whole system into prompt chaos. (Source: OpenAI’s prompt engineering guide.)

What should I give the model before asking it to write?

Give it the same kind of information you would give a capable junior strategist. That usually means audience, offer, proof, objections, brand voice direction, channel constraints, and the exact job the asset needs to do. OpenAI’s prompt guidance keeps coming back to the same point: clearer instructions and better context create better outputs. (Source: OpenAI’s prompt engineering guide.)

Can copywriting AI replace human writers?

Not in the way most people imagine. It can reduce blank-page time, speed up variations, and help teams work through more drafts, but human judgment still matters for positioning, proof, nuance, and final editorial quality. The best-performing setup is usually not “AI instead of people,” but “AI expanding what skilled people can do.” (Sources: OpenAI’s prompt engineering guide; NIST AI RMF 1.0.)

How do I keep AI copy from sounding generic?

You stop asking generic questions and start feeding it real material. Customer language, approved proof points, objection patterns, and strong examples of your voice do much more for quality than adding clever adjectives to a prompt. If the inputs are bland, the output will usually be bland too, no matter how advanced the model is. (Source: OpenAI’s prompt engineering guide.)

How much human editing should still happen?

More than most people think, especially on commercial content. Google’s guidance around helpful content and AI-generated material still points toward usefulness, originality, and reader value, which means unchecked drafts are not enough. Human editing is where you tighten claims, remove filler, sharpen transitions, and make sure the copy deserves to exist. (Sources: Google’s guidance about AI-generated content; Google’s people-first content guidance.)

What are the biggest risks when using copywriting AI at scale?

The obvious risk is factual error, but that is not the only one. NIST’s AI risk management work focuses on trustworthiness, governance, and managing the broader impacts of AI systems, which is a useful reminder that risk also includes brand drift, weak approvals, bad data use, and overconfident claims. In real marketing teams, sloppy governance usually causes more damage than the model itself. (Sources: NIST AI RMF 1.0; NIST AI RMF for Generative AI.)

Do I need formal governance if I am a small team?

You do not need a giant committee, but you do need rules. Even a small team should define what source material is allowed, who approves customer-facing claims, what kinds of outcomes cannot be promised, and what gets a final human review before publishing. Simple governance early is much easier than fixing trust problems later. (Source: NIST AI RMF 1.0.)

Can AI-written copy work in Google’s newer AI search experiences?

Yes, but the same core logic still applies. Google’s newer documentation around AI features says site owners should focus on unique, satisfying, non-commodity content that helps users, which means copywriting AI can support visibility if it helps you produce more useful answers rather than more generic filler. The bar is not lower in AI search. If anything, weak commodity content becomes easier to ignore. (Sources: Google’s AI features and your website guide; Google’s guidance on succeeding in AI search.)

What metrics should I watch first?

Start with three layers: speed, response, and business impact. Speed tells you whether the workflow is becoming more efficient, response tells you whether the audience engages with the message, and business impact tells you whether those gains are commercially meaningful. If you only track output volume, you can fool yourself very quickly.

Should I use one AI tool for everything?

Usually not. One model can help with drafting, but the wider system often needs separate tools for deployment, CRM, scheduling, page building, or conversational follow-up. That is why many teams combine copywriting AI with tools like GoHighLevel, Replo, Buffer, Brevo, or ManyChat depending on where execution actually happens.

How do I know if my team is ready to scale copywriting AI?

You are ready when the basics are boring. The brief format is stable, the source material is organized, the approval process is clear, and performance can be measured without guesswork. If those pieces are still fuzzy, scaling copywriting AI usually scales confusion faster than results.

What is the smartest mindset to keep going forward?

Treat copywriting AI like leverage, not magic. It can help you think faster, test more angles, and remove repetitive work, but it still needs strong direction and strong taste. The teams that win with it are usually the teams that stay disciplined when everyone else gets distracted by volume.
