Copywriting AI moved fast from novelty to workflow infrastructure. What changed is not just the quality of large language models, but the economics around them: marketing and sales remain one of the biggest value pools for generative AI, and recent marketer surveys show AI is already embedded in day-to-day execution rather than sitting in a testing phase. At the same time, the rise of AI-assisted discovery is changing how people research products, compare offers, and arrive on commercial pages, which makes better messaging even more important.

That does not mean copywriting AI automatically produces persuasive copy. The teams getting real results are not asking a chatbot for “10 headlines” and hoping for the best. They are building a system that combines positioning, audience insight, brand voice, structured prompts, human review, and channel-specific testing so AI becomes a leverage tool instead of a volume machine.

Article Outline

- Why Copywriting AI Matters

- The Copywriting AI Framework

- Core Components of an Effective Copywriting AI Stack

- Where Copywriting AI Performs Best Across Channels

- How Professionals Implement Copywriting AI Without Losing Brand Voice

- What Copywriting AI Gets Wrong and How to Manage the Risk

Why Copywriting AI Matters

The real reason copywriting AI matters is simple: content demand has exploded, while attention has not. Brands now need more landing pages, more ad variants, more lifecycle emails, more product messaging, and more channel-specific copy than most teams can produce manually at a high level of consistency. That pressure is showing up in both enterprise research and market behavior, with major surveys pointing to widespread AI use inside marketing teams and stronger demand for personalized, two-way communication.

There is also a distribution shift happening underneath the surface. Adobe’s 2025 analytics showed generative AI traffic to U.S. retail sites jumping sharply, alongside similar movement in travel and financial services, which signals that buyers are increasingly using AI tools during the research phase before they ever click through to a brand’s page. In practical terms, that means your copy now has to do two jobs at once: it has to persuade human readers and stay clear enough, specific enough, and structured enough to survive AI-mediated discovery.

The upside is meaningful, but only when copywriting AI is treated as collaboration rather than replacement. Recent research keeps pointing in the same direction: human-plus-AI workflows can improve speed and output quality, yet trust, motivation, and consumer response still depend on how the work is framed and reviewed. That is why the winning question is not whether AI can write, but where AI should draft, where humans should shape, and where brand judgment still decides the final line.

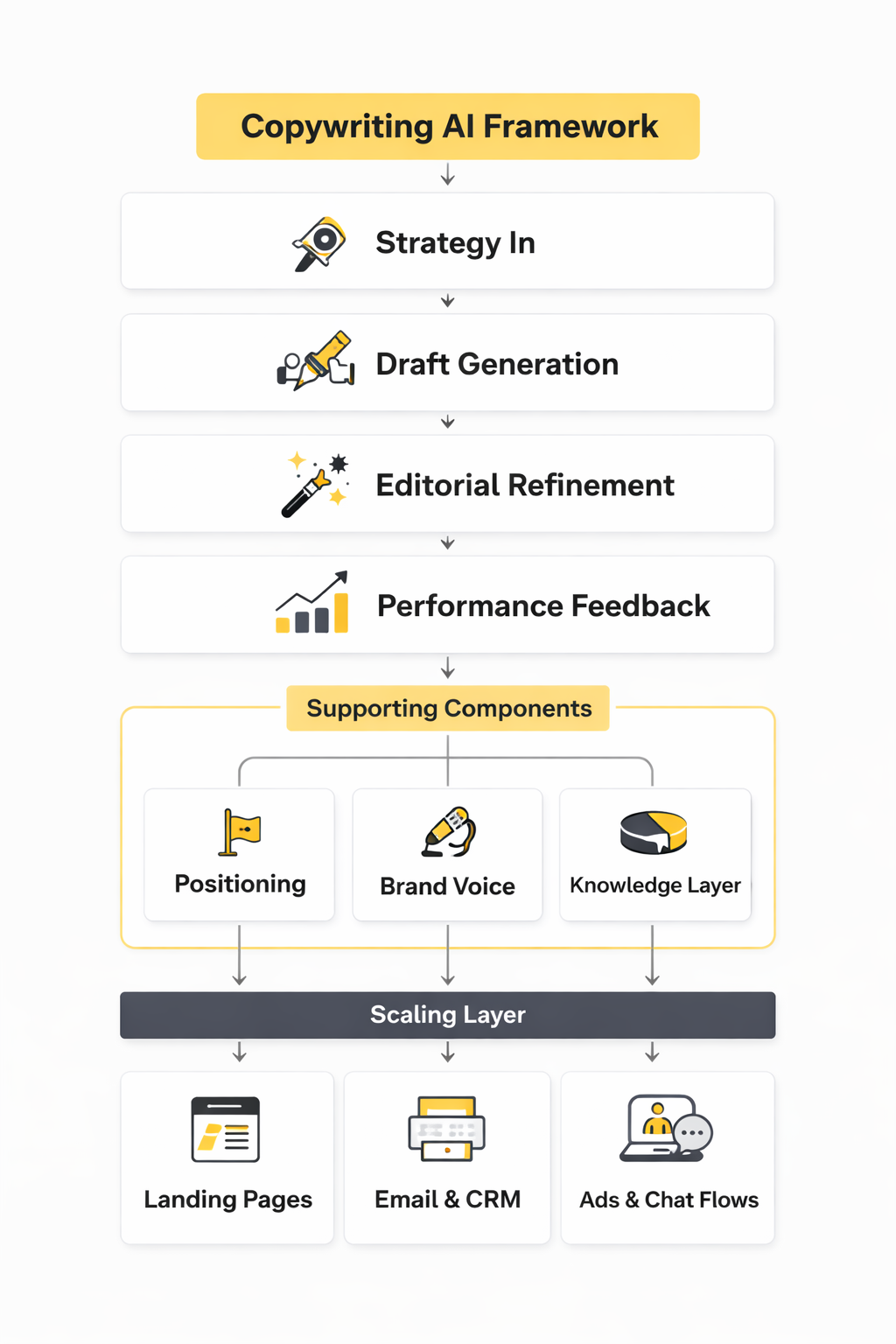

The Copywriting AI Framework

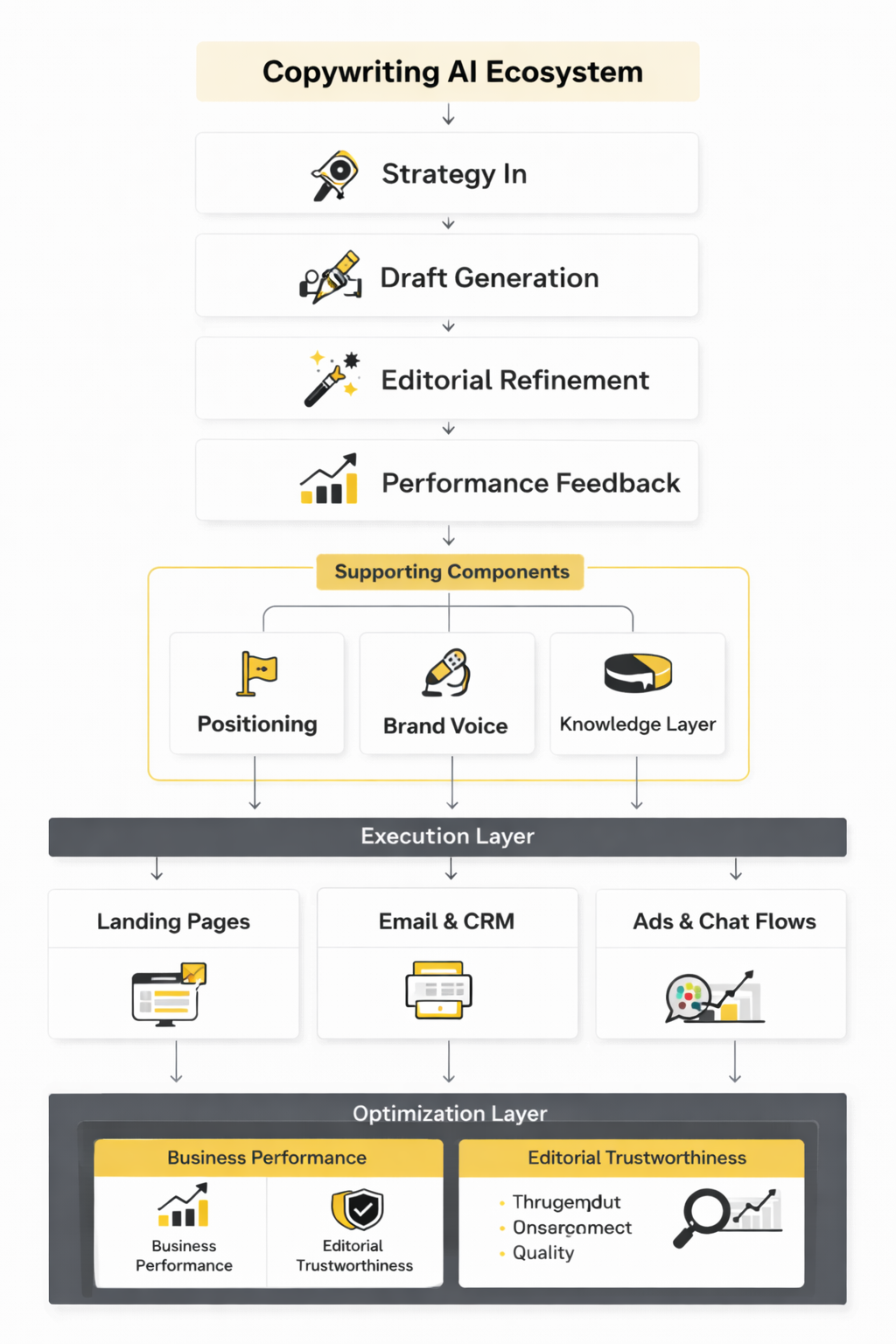

A useful way to think about copywriting AI is as a four-layer system: strategy input, draft generation, editorial refinement, and performance feedback. Most weak results happen because teams start in the middle, with generation, and skip the harder work of clarifying audience pain points, offer mechanics, proof, objections, and voice constraints. When those inputs are thin, AI tends to produce copy that is fluent but forgettable.

The better approach is to give AI a defined job inside a controlled process. Strategy sets the messaging brief, generation creates structured first drafts, editorial review sharpens claims and removes generic language, and performance data feeds the next round of prompts. That loop matters because copywriting AI is strongest when it iterates against real signals such as click-through rate, reply rate, qualified lead rate, or conversion lift, not when it writes in a vacuum.

Professionals also add a knowledge layer before they ever ask for output. That can mean approved positioning documents, product details, customer interview notes, objection libraries, past winners, and compliance rules. If you want to operationalize that kind of brand context, a tool like Chatbase can help centralize source material for AI-assisted workflows, but the principle matters more than the tool: better source context produces better copy.

Core Components of an Effective Copywriting AI Stack

The first component is not the model. It is the brief. When copywriting AI gets vague inputs, it usually returns smooth language with weak sales logic, because it has no real grip on customer pain, buying triggers, objections, or proof. The better teams anchor every prompt to a working brief that includes audience, offer, mechanism, stakes, proof points, voice rules, and the one action the reader should take next.

The second component is source context. AI copy improves fast when you feed it approved product details, customer research, founder language, support transcripts, call notes, and examples of messaging that already converts. That is why a retrieval layer matters so much: instead of asking AI to guess what your brand sounds like, you give it the material it needs to write from something real. If you want a simple way to organize that context for assistants and internal workflows, Chatbase is relevant here because it helps ground outputs in your own information rather than generic internet language.

The third component is editorial control. Copywriting AI is excellent at generating options, reframing angles, and accelerating first drafts, but official risk guidance keeps warning that generative systems can still fabricate details, flatten nuance, or sound more certain than the evidence supports. In practice, that means every serious workflow needs approval steps for claims, compliance, brand voice, and factual accuracy before anything goes live.

The fourth component is feedback. Good copywriting AI workflows are not judged by whether a paragraph sounds clever in isolation, but by whether it improves click-through rate, reply rate, qualified pipeline, revenue per visitor, or some other business metric tied to the page or campaign. That sounds obvious, but it is the line that separates AI-assisted copywriting from AI-generated content spam.

Strategy Inputs Come Before Prompting

Most problems blamed on copywriting AI are actually strategy failures upstream. If the positioning is fuzzy, the offer is weak, or the customer problem is poorly understood, AI will only help you produce weak messaging faster. That is why the highest-leverage work still happens before the first prompt is written.

A strong input set usually includes five things:

- who the buyer is

- what problem they urgently want solved

- why this offer is different

- what proof reduces doubt

- what action should happen next

Once those inputs are clear, prompting becomes much easier and much more useful. Instead of asking for “better copy,” you can ask for three ad angles for first-time buyers, a shorter hero section for comparison shoppers, or a nurture email that addresses one specific objection. That level of specificity is where copywriting AI starts earning its keep.
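One way to make those five inputs hard to skip is to hold them in a small structure that refuses to produce a prompt while any field is blank. The sketch below is illustrative only: `MessagingBrief`, its field names, and the sample values are hypothetical, and the prompt layout is just one reasonable arrangement, not a required format for any particular model.

```python
from dataclasses import dataclass


@dataclass
class MessagingBrief:
    """Hypothetical container for the five strategy inputs."""
    buyer: str
    urgent_problem: str
    differentiator: str
    proof: str
    next_action: str

    def missing_fields(self) -> list[str]:
        """Names of any inputs that were left blank."""
        return [name for name, value in vars(self).items() if not value.strip()]

    def to_prompt(self, task: str) -> str:
        """Combine the brief with one narrow task; refuse to run on a thin brief."""
        missing = self.missing_fields()
        if missing:
            raise ValueError("Brief is incomplete: " + ", ".join(missing))
        return "\n".join([
            f"Task: {task}",
            f"Buyer: {self.buyer}",
            f"Urgent problem: {self.urgent_problem}",
            f"Why this offer is different: {self.differentiator}",
            f"Proof that reduces doubt: {self.proof}",
            f"Next action: {self.next_action}",
        ])


# Placeholder values for illustration only.
brief = MessagingBrief(
    buyer="first-time buyers comparing two tools",
    urgent_problem="weekly reporting takes a full day",
    differentiator="setup takes minutes, not weeks",
    proof="approved customer testimonial",
    next_action="start a free trial",
)
print(brief.to_prompt("Write three ad angles for first-time buyers"))
```

The useful part is the guard, not the template: a request for "better copy" with an empty proof field fails loudly instead of producing fluent filler.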

Brand Voice Needs a System, Not a Vibe

A lot of teams say they want AI to “sound like us,” but they never define what that means. Brand voice becomes usable only when it is broken into rules: sentence length, reading level, emotional range, taboo phrases, how the brand handles certainty, how aggressively it sells, and how it balances clarity against personality. Without that structure, copywriting AI defaults to polished average.

This matters even more now because AI is making content abundance cheap, and abundance tends to erase distinction. HubSpot’s latest marketing framing leans hard into brand point of view for exactly that reason, while newer consumer research from Gartner suggests many people are already wary of generative AI in customer-facing content. In plain English, sounding generic is no longer just boring. It can actively reduce trust.

The practical move is to create a voice guide that AI can actually use. Include approved phrases, banned clichés, examples of strong and weak outputs, and before-and-after edits from your own brand. Then keep refining that guide as you learn what the model consistently gets wrong. Tools that support collaborative workflow around copy, scheduling, and approval can help here too; for example, Buffer fits once AI-assisted social copy has to pass through real publishing workflows instead of staying inside a prompt window.
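Some voice rules can be written down precisely enough for a machine to check before a human editor ever sees the draft. Here is a minimal sketch, assuming a voice guide reduced to two rules (banned phrases and a maximum sentence length). The `VoiceGuide` and `lint_copy` names, the 25-word default, and the naive sentence splitter are all assumptions for illustration, and a check like this supplements editorial review rather than replacing it.

```python
import re
from dataclasses import dataclass, field


@dataclass
class VoiceGuide:
    """Hypothetical, deliberately small subset of a brand voice guide."""
    banned_phrases: list[str] = field(default_factory=list)
    max_sentence_words: int = 25  # assumed default, tune per brand


def lint_copy(text: str, guide: VoiceGuide) -> list[str]:
    """Return human-readable voice violations found in a draft."""
    issues = []
    lowered = text.lower()
    for phrase in guide.banned_phrases:
        if phrase.lower() in lowered:
            issues.append(f"banned phrase: {phrase}")
    # Naive split: sentence ends at ., !, or ? followed by whitespace.
    for sentence in re.split(r"(?<=[.!?])\s+", text.strip()):
        word_count = len(sentence.split())
        if word_count > guide.max_sentence_words:
            issues.append(f"sentence too long ({word_count} words): {sentence[:40]}...")
    return issues


guide = VoiceGuide(banned_phrases=["game-changing"], max_sentence_words=8)
draft = (
    "Our game-changing tool saves time. It does this by automating every "
    "single repetitive step in your weekly reporting workflow."
)
for issue in lint_copy(draft, guide):
    print(issue)
```

Every time the model repeats a mistake, the fix goes into the guide as a new rule or banned phrase, so the refinement described above accumulates instead of living in one editor's head.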

The Best Stack Connects Creation to Distribution

Copywriting AI becomes far more valuable when it is connected to the channels where copy actually lives. That means landing pages, CRM sequences, chat flows, social scheduling, lead forms, and sales follow-up rather than a disconnected document full of drafts that never ship. The market is moving in that direction because teams want AI inside execution systems, not floating outside them.

That is also why the stack usually expands beyond one writing tool. A brand might use AI to draft lifecycle email, then push approved sequences into Brevo, run conversational lead capture through ManyChat, and tighten product-page messaging inside Replo. The point is not the tool list itself. The point is that copywriting AI works best when drafts can move straight into live systems where testing, segmentation, and revenue feedback happen.

Where Copywriting AI Performs Best Across Channels

Copywriting AI performs best in channels where the structure is clear, the objective is narrow, and the feedback loop is fast. That usually means email sequences, paid ad variants, landing page sections, chatbot flows, and social copy where teams can quickly see what gets opened, clicked, replied to, or ignored. It is a much better fit for iterative conversion work than for high-stakes brand campaigns that depend on original creative leaps or delicate messaging judgment. Salesforce’s latest marketing research and HubSpot’s current AI marketing report both reflect that operational reality: marketers are using AI heavily inside production and optimization, not just ideation.

Email is one of the clearest wins because the copy is modular and the response data is immediate. Subject lines, preview text, nurture sequences, win-back campaigns, onboarding flows, and objection-handling emails all benefit from rapid variant generation as long as the message map is already defined. That lines up with broader channel economics too, because HubSpot’s 2025 marketing data places email among the top ROI channels for B2C brands, which makes small copy improvements disproportionately valuable.

Paid social and performance creative are also strong use cases because copywriting AI can generate angle variations far faster than most teams can write them by hand. The model is especially useful when one offer needs multiple hooks for different awareness levels, customer segments, or creative formats. That matters in a market where HubSpot’s 2025 social media research and its broader 2026 marketing report both point toward heavier AI-assisted execution and more pressure to stand out with a distinct point of view.

Landing pages are another strong fit, but only when humans still control the offer strategy. Copywriting AI is very good at expanding skeletal briefs into hero sections, benefit blocks, FAQ drafts, objection handling, and test variants for different traffic temperatures. It becomes even more relevant as user journeys fragment across search, social, and AI-assisted discovery, with Adobe reporting major growth in traffic from generative AI sources to retail, travel, and financial-services sites. When a visitor arrives more informed, the page has to be sharper, not louder.

Chat and conversational funnels are quietly one of the best environments for copywriting AI because they depend on branching language, fast response generation, and repeated objection handling. Instead of writing one linear sales page, teams can build guided micro-conversations that qualify leads, answer friction points, and move people toward the next step. That is where platforms such as ManyChat make sense in a copywriting AI stack, because they turn prompts into live conversational assets rather than leaving them stranded in a document.

How Professionals Implement Copywriting AI Without Losing Brand Voice

Professional implementation starts smaller than most people expect. The goal is not to “put AI into content” everywhere at once. The goal is to choose one commercially meaningful workflow, define how quality will be measured, and build a controlled process around it so the model becomes reliable before it becomes widespread. That sequencing matters because NIST’s generative AI guidance is clear that risks around inaccuracy, overstatement, privacy, and context failure need governance, not just enthusiasm.

The best rollouts usually begin with one narrow use case such as lifecycle email, paid ad ideation, sales follow-up, or product-page optimization. That creates a clean environment for testing because the format is repeatable and the success metric is already known. Once the team can prove faster throughput or better conversion without a drop in quality, the workflow can expand into adjacent channels. Salesforce’s research supports this kind of structured adoption, with marketers focusing on AI that improves execution and personalization rather than replacing strategy.

What keeps brand voice intact is not talent alone. It is process discipline. The team needs a messaging brief, a voice guide, an approved source library, prompt templates, review rules, and a feedback loop tied to results. Without that infrastructure, copywriting AI almost always drifts toward bland, over-explained, mid-market language.

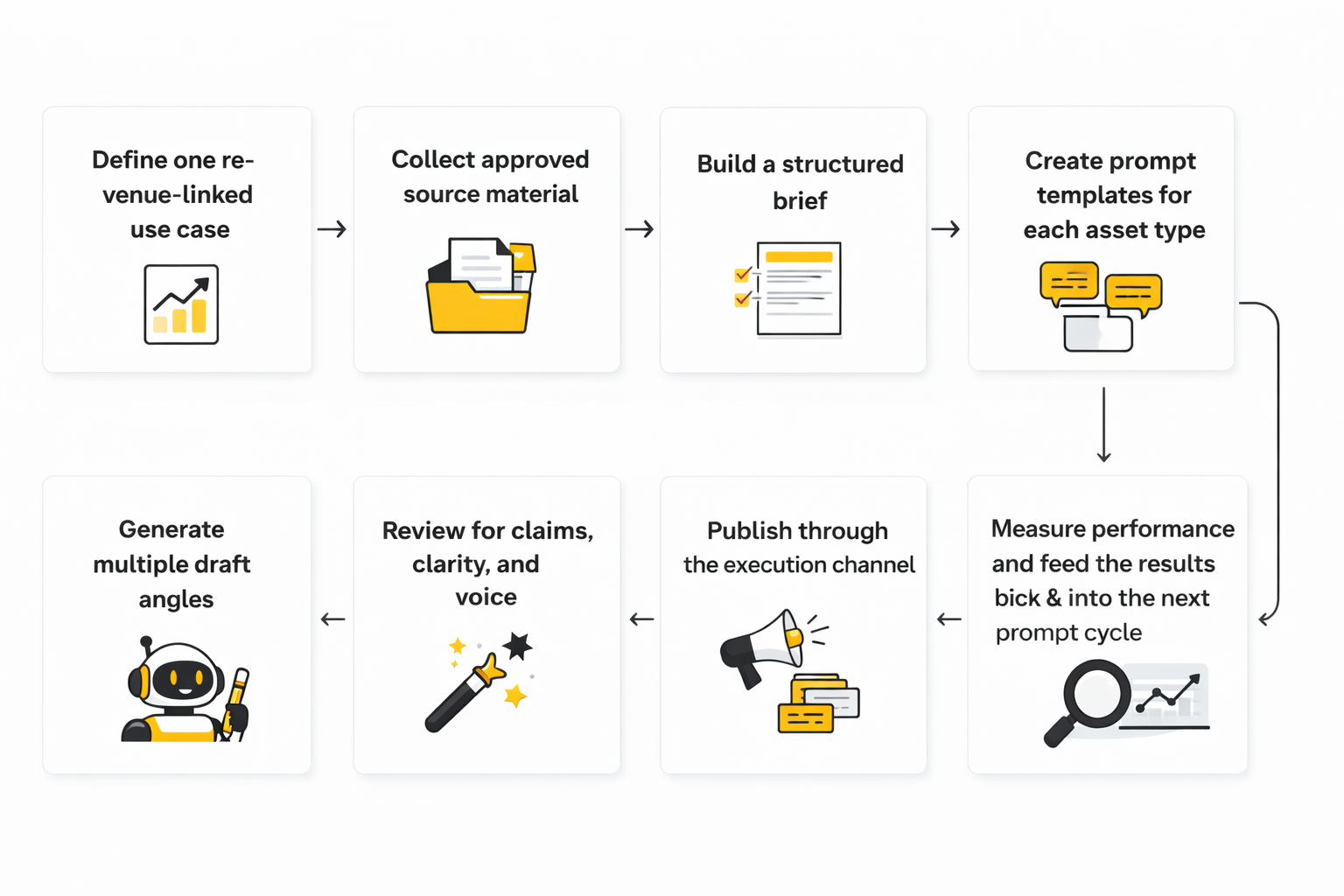

A Practical Copywriting AI Workflow

Here is what a professional implementation process usually looks like when it is done well:

- Define one revenue-linked use case.

- Collect approved source material.

- Build a structured brief.

- Create prompt templates for each asset type.

- Generate multiple draft angles.

- Review for claims, clarity, and voice.

- Publish through the execution channel.

- Measure performance and feed the results back into the next prompt cycle.

That sequence works because it mirrors how strong copy is created in the real world. First comes strategic clarity, then draft generation, then editorial tightening, then live testing. Copywriting AI simply compresses the drafting and iteration stages, but it does not remove the need for judgment. NIST’s framework is useful here as a reminder that good outputs depend on trustworthy inputs, human oversight, and ongoing evaluation.

The source-material step is more important than most teams realize. A practical source pack can include product specs, customer interview notes, sales-call transcripts, winning past campaigns, objection lists, testimonials already cleared for use, and brand voice rules. If you want the process to scale, a knowledge layer built through something like Chatbase or Firecrawl can help centralize the information AI should pull from instead of improvising from memory.

The Review Layer Is Where Quality Is Won

This is the part people try to skip, and it is exactly where the good teams separate themselves. Copywriting AI can draft quickly, but it still needs an editor checking whether the promise is real, whether the proof is solid, whether the message fits the channel, and whether the tone actually sounds like the company. In other words, the editor is not there to fix grammar. The editor is there to protect persuasion, credibility, and trust. HubSpot’s 2026 State of Marketing report keeps emphasizing the same broader theme: AI scale only works when brands preserve humanity and distinctiveness.

A simple review checklist goes a long way:

- Is the core claim true and supportable?

- Is the customer problem specific enough to feel real?

- Does the copy sound like this brand and not like AI?

- Is the call to action aligned with the reader’s stage of awareness?

- Does any sentence feel inflated, vague, or generic?

That checklist gets even more important in regulated or sensitive categories, where the risk of overpromising is not just a style issue. It is a business issue. NIST’s generative AI profile consistently points back to oversight, documentation, and risk management for exactly this reason.

Implementation Gets Stronger When It Lives Inside Real Systems

The last piece is operational. Copywriting AI becomes dramatically more valuable when it is connected to the tools where campaigns are built, shipped, and measured. For email and CRM workflows, that could be Brevo or GoHighLevel. For landing pages, Replo, ClickFunnels, or Systeme.io fit naturally. For social publishing and workflow discipline, Buffer becomes useful once the copy leaves draft mode and enters real scheduling, approval, and reporting.

That operational connection is not a minor detail. It is how copywriting AI moves from a novelty interface to a working growth system. The model drafts, the team reviews, the assets ship, the metrics come back, and the next round gets smarter. That is the implementation loop professionals actually trust.

What the Data Really Says About Copywriting AI Performance

A lot of copywriting AI coverage gets this wrong. It throws out adoption numbers, productivity claims, and giant market-size forecasts as if they automatically prove better marketing. They do not. The numbers only matter when they help you answer a much more practical question: is AI-assisted copy producing better business outcomes, faster, without damaging trust or brand quality?

The strongest data point is not that marketers are experimenting. It is that AI is now embedded in normal workflow. Salesforce’s March 2026 State of Marketing coverage says 75% of marketers are using AI, while HubSpot’s 2026 State of Marketing report says 80% use AI for content creation. That does not prove copywriting AI is working well, but it does prove the baseline has shifted. The question is no longer whether teams use it. The question is whether they can measure it properly.

The First Metric Is Throughput, but It Cannot Be the Last One

Throughput is the easiest number to improve, which is why so many teams stop there. If copywriting AI helps a team produce five times more drafts, turn campaigns around faster, and ship more test variants per week, that is useful. It means the system is increasing output capacity and reducing the time between idea and live experiment.

But throughput is still a vanity metric if quality or conversion falls. HubSpot’s current AI-for-marketers material frames ROI around productivity, personalization, and campaign performance, which is a much healthier lens than raw content volume. In practice, faster production only matters when it leads to stronger open rates, higher click-through rates, better lead quality, lower acquisition cost, or more revenue per visitor.

That is the first rule for measuring copywriting AI: separate activity metrics from outcome metrics. Activity tells you whether the machine is busy. Outcome tells you whether the copy is doing its job.

The Signals That Actually Matter by Channel

The right benchmark depends on where the copy lives. For email, the early indicators are usually open rate, click rate, reply rate, unsubscribe rate, and revenue per send. For paid social and paid search, the stronger signals are click-through rate, cost per click, cost per acquisition, qualified conversion rate, and creative fatigue over time. For landing pages, the useful stack is scroll depth, click distribution, form-start rate, form-completion rate, demo-book rate, and revenue or pipeline per session.

This is exactly why copywriting AI needs channel context. A subject line variant that lifts opens but hurts downstream clicks is not actually a win. A landing-page rewrite that improves time on page without increasing conversions may simply be making the page more readable, not more persuasive. Good analytics stops you from celebrating the wrong improvement.
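The subject-line case can be made concrete by judging every variant on the downstream metric first and treating an open-rate lift on its own as a warning sign. The sketch below is a simplification under stated assumptions: `EmailVariant`, `judge`, and the sample counts are hypothetical, click rate is measured per send, and the comparison ignores statistical significance, which a real test would need to check.

```python
from dataclasses import dataclass


@dataclass
class EmailVariant:
    """Hypothetical record of one subject-line variant's results."""
    name: str
    sends: int
    opens: int
    clicks: int

    def __post_init__(self) -> None:
        if self.sends <= 0:
            raise ValueError("sends must be positive")

    @property
    def open_rate(self) -> float:
        return self.opens / self.sends

    @property
    def click_rate(self) -> float:
        # Clicks per send, so extra opens that never click earn no credit.
        return self.clicks / self.sends


def judge(control: EmailVariant, challenger: EmailVariant) -> str:
    """Classify the challenger by the downstream metric before the upstream one."""
    if challenger.click_rate > control.click_rate:
        return "win"
    if challenger.open_rate > control.open_rate:
        return "misleading lift"  # more opens, but no more clicks
    return "no lift"


# Made-up counts for illustration only.
control = EmailVariant("A", sends=1000, opens=200, clicks=40)
curiosity_hook = EmailVariant("B", sends=1000, opens=260, clicks=30)
specific_benefit = EmailVariant("C", sends=1000, opens=210, clicks=55)

print(judge(control, curiosity_hook))    # misleading lift
print(judge(control, specific_benefit))  # win
```

The same pattern applies to landing pages: swap opens for time on page and clicks for conversions, and a more readable page that converts no better gets flagged rather than celebrated.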

The broader market data around AI-referred traffic makes this even more interesting. Adobe reported in June 2025 that AI traffic showed a 27% lower bounce rate, 38% longer time spent per visit, and 10% higher page views per visit than non-AI traffic. Then Adobe’s January 2026 holiday analysis said retail AI-driven traffic was up 693% year over year, and its March 2025 release found travel visitors from generative AI sources had a 45% lower bounce rate. The takeaway is not “AI traffic is magic.” The takeaway is that some visitors arriving through AI-assisted research look more pre-qualified, which means copy should be measured not only for attention, but for how well it converts an already-informed user.

How to Build a Measurement System Around Copywriting AI

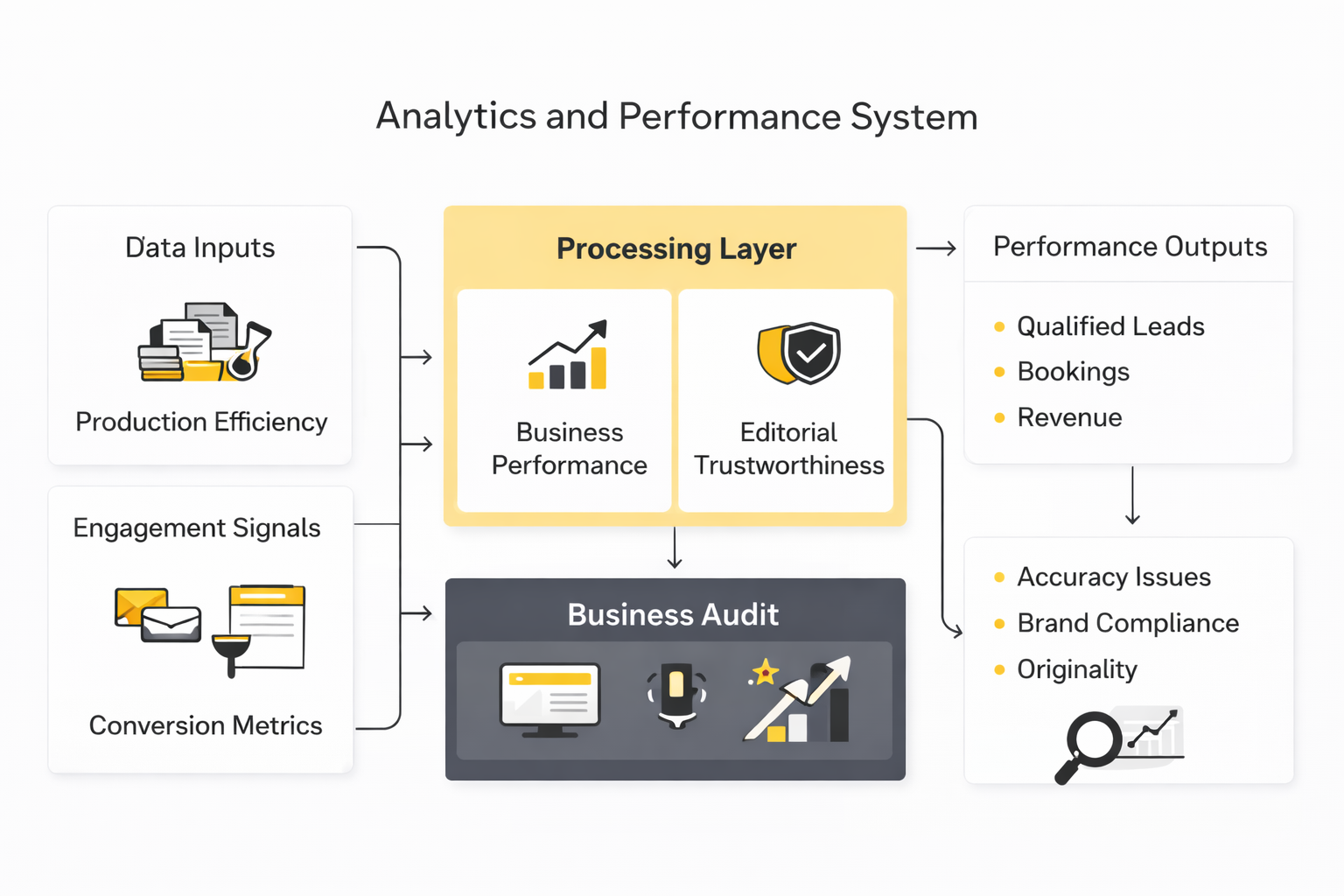

A working analytics system for copywriting AI usually has four layers. The first is production efficiency, where you track time to first draft, total draft volume, revision rounds, and publishing velocity. The second is engagement, where you track channel-specific reactions like opens, clicks, replies, scroll depth, or session quality. The third is conversion, where you follow the copy all the way to lead capture, qualified meetings, purchases, or retained revenue. The fourth is quality control, where humans score accuracy, brand fit, compliance, and originality.

That fourth layer matters more than many teams expect. NIST’s generative AI profile explicitly centers measuring and managing risks such as inaccurate content, harmful bias, privacy issues, and other real-world failures, and its more recent AI evaluation work on ARIA focuses on estimating real-world impacts through scenario-based testing. That is a useful reminder that analytics cannot stop at clicks. If copywriting AI produces a slight response lift but makes claims your team cannot support, the metric story is incomplete.

The cleanest operational model is to score each AI-assisted asset in two ways:

- business performance

- editorial trustworthiness

Business performance tells you whether the market responded. Editorial trustworthiness tells you whether the asset was safe and brand-appropriate to ship in the first place. Both are necessary. One without the other is how teams scale problems.
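The two-score model can be expressed as a simple gate in which editorial trustworthiness is checked first, so a strong conversion number can never rescue an asset that failed review. This is a rough sketch: `AssetScore`, `decide`, the 0.0 to 1.0 editorial scale, and the 0.8 threshold are assumptions chosen for illustration, not a standard.

```python
from dataclasses import dataclass


@dataclass
class AssetScore:
    """Hypothetical dual score for one AI-assisted asset."""
    asset_id: str
    conversion_lift: float  # relative lift vs. control, e.g. 0.08 means +8%
    editorial_score: float  # human reviewer rating from 0.0 to 1.0


def decide(score: AssetScore, min_editorial: float = 0.8) -> str:
    """Trust is a gate, performance is a ranking: check the gate first."""
    if score.editorial_score < min_editorial:
        return "revise"  # unsafe to ship, whatever the numbers say
    if score.conversion_lift > 0:
        return "scale"
    return "retest"


# Made-up scores for illustration only.
print(decide(AssetScore("hero-v3", conversion_lift=0.12, editorial_score=0.6)))   # revise
print(decide(AssetScore("hero-v4", conversion_lift=0.05, editorial_score=0.9)))   # scale
print(decide(AssetScore("hero-v5", conversion_lift=-0.02, editorial_score=0.95))) # retest
```

Ordering the checks this way is the design choice that matters: the first example lifts conversions by 12% and still goes back for revision.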

Benchmarks Should Guide Decisions, Not Replace Them

Benchmarks are useful, but they get misused all the time. Seeing that 75% of marketers use AI or that 80% use it for content creation can help explain why adoption is rising, but those numbers should not pressure you into rolling AI out across every workflow overnight. Adoption benchmarks tell you what the market is doing. They do not tell you whether your current implementation is profitable.

The more useful benchmark is internal trendline performance. Compare human-only copy against AI-assisted copy for the same format, same audience, and same offer. Track how long the asset took to produce, how many revisions it required, and how it performed across the funnel. Once you have that internal baseline, outside benchmarks become context instead of noise.
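When human-only and AI-assisted versions run against the same format, audience, and offer, a standard two-proportion z-test is one common way to check whether a gap in conversion rate is larger than chance would explain. The sketch below uses only the Python standard library. The function name and the sample counts are hypothetical, and the test assumes independent visitors and reasonably large samples.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates B - A."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal, via the complementary error function.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up counts: A = human-only page, B = AI-assisted page, same audience and offer.
z, p = two_proportion_z_test(conv_a=40, n_a=1000, conv_b=60, n_b=1000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

A small p-value only says the difference is unlikely to be noise. Production time and revision rounds still have to be tracked separately to know whether the AI-assisted version was worth it.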

This is where teams often need tighter infrastructure. If you are running copy across CRM, landing pages, chatbot flows, and nurture campaigns, measurement gets easier when the workflow is centralized. That is one reason platforms such as GoHighLevel, Brevo, and Buffer can become more than delivery tools. They can also become the environment where AI-assisted copy is compared, reported, and improved over time.

What the Numbers Should Make You Do Next

When the data shows faster output but flat conversions, the action is not to generate even more copy. It is to go back to the brief, the positioning, and the audience insight because the bottleneck is probably strategic. When the data shows higher engagement but weaker lead quality, the action is usually to tighten claims and better match the promise to the offer. When the data shows lift from AI-assisted traffic or personalization, that is the moment to build more segmented variants, not to fall back into one-size-fits-all messaging.

The biggest measurement mistake with copywriting AI is treating it like a writing shortcut instead of a testing system. The real value is not that it writes faster. The real value is that it lets you test more angles, learn faster, and compound insight across channels. That is why the numbers matter. They should not make you feel impressed. They should make you more precise.

What Copywriting AI Gets Wrong and How to Manage the Risk

The biggest mistake in copywriting AI is not bad grammar. It is false confidence. The output often sounds polished enough to feel finished, even when the logic is thin, the promise is inflated, or the wording quietly drifts away from what the product can actually deliver. That is why NIST’s generative AI risk profile matters so much here: the core risks are not abstract technical issues, but very practical failures like inaccuracy, hallucinated content, and misleading certainty.

This becomes more dangerous as teams scale. A weak human draft usually carries visible rough edges, so it gets challenged. Weak AI copy can look clean on the surface, which means it is more likely to pass through busy teams unless somebody is explicitly checking for unsupported claims, fake specificity, and language that sounds persuasive without saying anything real. The cleaner the prose, the more disciplined the review process has to be.

There is also a strategic risk that does not get enough attention: sameness. When teams rely on copywriting AI without a strong point of view, they tend to produce messages that are structurally correct but commercially interchangeable. That is a serious problem in crowded markets, because once your copy sounds like everyone else using the same tools, speed stops being an advantage and becomes a race to mediocrity.

The Real Tradeoff Is Speed Versus Distinction

Copywriting AI is fantastic at acceleration. It helps teams generate more variants, move through briefs faster, and cover more channels with fewer bottlenecks. But that speed creates a tradeoff: the easier it becomes to produce polished language, the easier it becomes to publish language that sounds fine and means very little.

This is where experienced operators slow down on purpose. They let AI move quickly during ideation, draft expansion, and variant generation, then apply more human friction at the moments that define brand distinction: positioning, promise, proof, tone, and final calls to action. That approach fits the broader enterprise pattern in IBM’s 2025 CMO and CEO research, which shows leaders see AI as a growth driver while still running into fragmented operations and value-realization hurdles.

In practice, this means you do not want AI deciding what your market should care about. You want AI helping you express a strategic choice you have already made. The more important the message, the more that distinction matters.

Consumer Trust Is Now Part of the Copy Brief

Trust is no longer a soft brand topic sitting outside performance marketing. It is directly tied to how copywriting AI is used in public-facing content. Gartner reported in September 2025 that 53% of consumers distrust or lack confidence in the reliability and impartiality of AI search and summaries, and its March 2026 marketing survey found that 50% of U.S. consumers would prefer to do business with brands that avoid GenAI in consumer-facing content. That does not mean brands should abandon AI. It means they need to use it in ways that feel useful, accurate, and appropriately transparent.

This is especially relevant when copy is close to the buying decision. Product pages, pricing explanations, guarantee language, onboarding emails, and objection-handling sequences all shape whether a customer feels informed or manipulated. If AI makes those assets faster but less trustworthy, the downstream cost is real even if the top-line production numbers look great.

The practical move is simple. Use copywriting AI heavily behind the scenes, but make the front-facing experience feel clearer, more specific, and more human, not more synthetic. When transparency helps, use it. When human review is needed, do not fake automation.

Scaling Breaks When Operations Stay Messy

A lot of teams think scaling copywriting AI is mainly a tooling problem. Usually it is an operations problem. IBM’s recent marketing research says 84% of surveyed CMOs report rigid, fragmented operations limit their ability to harness AI, which lines up with what happens on the ground: prompts live in scattered docs, brand rules are inconsistent, approval paths are unclear, and nobody can tell which assets were AI-assisted, who edited them, or why one version performed better than another.

That kind of mess gets expensive fast. The first cost is inconsistency. The second is rework. The third is quiet organizational distrust, where teams stop believing the system can protect quality, so they either over-edit every draft or ignore the workflow altogether. At that point, copywriting AI stops being leverage and starts becoming overhead.

The fix is not glamorous, but it works. Centralize briefs, voice guidance, approved claims, prompt templates, and performance notes into one repeatable system. If you are running this across multiple funnels and customer journeys, infrastructure tools such as GoHighLevel, Brevo, or Copper can help keep execution and customer context in the same operating environment, which makes both measurement and accountability much easier.

Expert Teams Train the System, Not Just the Prompt

Beginners try to fix weak outputs with one better prompt. Experienced teams improve the surrounding system. They update voice rules after every awkward output, refine objection libraries after every sales call, add approved proof after every case study, and track which prompt structures produce usable drafts versus pretty nonsense. Over time, the quality lift comes less from clever prompting and more from better training data, better editorial standards, and better operational memory.

That is also why scaling copywriting AI often pushes teams toward a richer internal knowledge layer. A tool like Chatbase can help ground assistants in approved material, while something like Firecrawl can help structure source content for reuse. The specific stack can vary. The principle does not: if the system cannot learn from your best material, it will keep averaging toward generic output.

This is where many organizations hit a wall. IBM’s May 2025 CEO study found 64% of CEOs admit the risk of falling behind drives investment before they clearly understand the value, and that pressure easily creates shallow AI rollouts. When that happens, the team ends up scaling prompts before it has scaled judgment.

The Smartest Use of Copywriting AI Is Selective, Not Maximal

Not every asset should be handed to AI in the same way. High-frequency, structured, testable formats are usually excellent candidates. High-stakes narrative work, sensitive brand moments, crisis communication, and foundational positioning often need much tighter human ownership. Strong operators know the difference, and they build workflows around that difference instead of pretending one process fits every format.

This is also where governance stops sounding bureaucratic and starts sounding useful. Deloitte’s 2025 enterprise and retail GenAI research emphasizes governance, targeted use cases, and scalable delivery infrastructure as the conditions for durable value (https://www.deloitte.com/nl/en/Industries/retail/about/unlocking-value-generative-ai-retail-and-consumer-products.html). That is exactly the right frame for copywriting AI. The goal is not to maximize AI usage. The goal is to maximize commercial performance without losing trust, clarity, or brand distinction.

That is the mature view. Copywriting AI is not a magic writing machine and it is not a threat to every serious marketer. It is an amplifier. If your inputs, positioning, and operating discipline are strong, it can multiply them. If they are weak, it will multiply that too.

Building a Long-Term Copywriting AI Ecosystem

By this point, the shape of the system should be clear. Copywriting AI works best when strategy, knowledge, production, review, publishing, and measurement are connected instead of treated like separate tasks. That is the real ecosystem play: not one magical model, but a stack of decisions and tools that keep the copy useful, on-brand, and commercially accountable.

At a practical level, most teams end up needing four layers. They need a place to store brand context and source material, a place to generate and refine drafts, a place to publish across channels, and a place to track how the copy actually performs in the market. Once those layers talk to each other, copywriting AI stops being a novelty and starts acting like an operating system for modern marketing.

That is also why the best stack is rarely built around one app alone. A team may use Chatbase to ground outputs in approved information, Replo to turn messaging into better landing-page experiences, ManyChat for conversational flows, Brevo for lifecycle messaging, and Buffer to manage social distribution. The exact stack can change, but the principle stays the same: the more connected the workflow, the more useful copywriting AI becomes.

For operators building a broader funnel machine, all-in-one systems can make the workflow less fragile. A setup built around GoHighLevel, ClickFunnels, or Systeme.io can reduce handoff friction between pages, follow-up, CRM activity, and reporting. That matters because copy is rarely the bottleneck in isolation. Usually the bottleneck is the messy path between draft, deployment, and feedback.

FAQ

What is copywriting AI, really?

Copywriting AI is software that helps generate, refine, or adapt persuasive marketing language using large language models and related automation. In practice, it is most useful for structured assets like emails, landing-page sections, ads, chatbot flows, and sales follow-up. It is not a substitute for positioning, judgment, or customer understanding, which is why the best results still come from human-led systems.

Can copywriting AI replace a human copywriter?

It can replace some manual drafting tasks, but it cannot replace strategic thinking on its own. Great copy still depends on market insight, offer design, emotional judgment, and knowing what not to say. The smarter move is to use copywriting AI to remove repetitive production work so human effort can stay focused on the decisions that actually create leverage.

Is copywriting AI good for SEO content?

It can help with structure, speed, and content expansion, but only if the underlying article has a real point of view and useful information. AI-generated SEO content fails when it becomes generic, repetitive, or detached from what users genuinely need. For search-driven content, copywriting AI should support editorial quality, not replace it.

What types of copy benefit the most from AI assistance?

The strongest use cases are usually high-volume, testable formats where fast iteration matters. That includes email sequences, ad variants, landing-page modules, retargeting copy, chatbot scripts, and sales enablement material. These formats benefit because performance feedback comes back quickly, which helps the system improve faster.

What should I give copywriting AI before asking it to write?

Start with a real brief, not a vague request. The minimum useful input is audience, problem, offer, proof, objections, voice direction, and the exact action you want the reader to take. Once those ingredients are clear, the model can generate drafts that feel relevant instead of generic.
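For teams that want to enforce that brief before anything reaches a model, one option is to treat it as a small data structure. The sketch below is illustrative only: the `CopyBrief` class, its field names, and the prompt layout are assumptions made for this example, not part of any specific tool.

```python
from dataclasses import dataclass, fields


@dataclass
class CopyBrief:
    """Hypothetical container for the minimum inputs listed above."""
    audience: str = ""
    problem: str = ""
    offer: str = ""
    proof: str = ""
    objections: str = ""
    voice: str = ""
    action: str = ""


def missing_fields(brief: CopyBrief) -> list[str]:
    """Return the names of brief fields that are empty or whitespace-only."""
    return [f.name for f in fields(brief) if not getattr(brief, f.name).strip()]


def build_prompt(brief: CopyBrief) -> str:
    """Render the brief as labeled lines, refusing incomplete briefs."""
    gaps = missing_fields(brief)
    if gaps:
        raise ValueError(f"Brief is incomplete, missing: {', '.join(gaps)}")
    return "\n".join(
        f"{f.name.upper()}: {getattr(brief, f.name).strip()}" for f in fields(brief)
    )
```

Calling `missing_fields` on a half-finished brief returns the gaps by name, which makes it easy to send the brief back to its owner instead of generating generic copy from it.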

How do I keep AI-generated copy on brand?

You need rules, examples, and editorial feedback. A strong voice guide should explain what the brand sounds like, what it never sounds like, what phrases are approved, what clichés are banned, and how the tone changes across channels. Over time, the brand gets stronger not because the AI magically learns it, but because the team keeps training the system with better source material and better edits.

How do I know whether copywriting AI is actually improving results?

Measure it against business outcomes, not just production speed. Faster drafting is useful, but the real test is whether the copy improves clicks, replies, qualified conversions, booked calls, purchases, or retention. The cleanest way to judge it is to compare AI-assisted assets against human-only baselines for the same offer and audience.
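As a rough illustration of that comparison, the sketch below computes the relative lift of an AI-assisted variant over a human-only baseline and runs a standard two-proportion z-test on the conversion counts. The function name and the example numbers are hypothetical, and the z-test is one common choice for this kind of check rather than a method the sources above prescribe.

```python
import math


def compare_conversion(base_conv: int, base_n: int, var_conv: int, var_n: int) -> dict:
    """Compare a variant against a baseline using conversion counts.

    Returns both rates, the relative lift of the variant over the baseline,
    the z statistic, and a two-sided p-value from a pooled two-proportion z-test.
    """
    if base_n <= 0 or var_n <= 0:
        raise ValueError("Visitor counts must be positive")
    base_rate = base_conv / base_n
    var_rate = var_conv / var_n
    if base_rate == 0:
        raise ValueError("Baseline rate is zero, relative lift is undefined")

    # Pooled rate under the assumption that both assets convert equally well.
    pooled = (base_conv + var_conv) / (base_n + var_n)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / base_n + 1 / var_n))
    if std_err == 0:
        raise ValueError("No variation in outcomes, z-test is undefined")

    z = (var_rate - base_rate) / std_err
    # Two-sided p-value for a standard normal statistic.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return {
        "baseline_rate": base_rate,
        "variant_rate": var_rate,
        "relative_lift": var_rate / base_rate - 1,
        "z": z,
        "p_value": p_value,
    }


# Hypothetical example: human-only email vs. AI-assisted email, same offer and audience.
result = compare_conversion(base_conv=100, base_n=2000, var_conv=130, var_n=2000)
```

A large lift with a high p-value usually means the sample is still too small to trust, which is one more reason to start with high-volume formats where feedback arrives quickly.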

What are the biggest risks with copywriting AI?

The big risks are blandness, overstatement, factual drift, and overconfidence disguised as polished writing. The output can sound credible even when it lacks proof or says something the brand should never publish. That is why every serious workflow needs a review layer for claims, clarity, compliance, and tone before the copy goes live.
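Part of that review layer can be automated as a first pass before a human editor sees the draft. The sketch below is a deliberately simple assumption about how such a check might look: it flags absolute words from a hypothetical `RISKY_WORDS` list and any figure that does not appear in the approved proof. It cannot judge tone or accuracy, so it supports the human review rather than replacing it.

```python
import re

# Hypothetical list of absolute words a reviewer may want surfaced.
RISKY_WORDS = ("guaranteed", "best", "always", "never", "instantly")

# Matches integers, decimals, and percentages such as 40, 3.5, or 40%.
NUMBER_PATTERN = re.compile(r"\d+(?:\.\d+)?%?")


def flag_claims(draft: str, approved_proof: list[str]) -> list[str]:
    """Return review flags for absolute wording and figures missing from approved proof."""
    approved_numbers = set()
    for proof in approved_proof:
        approved_numbers.update(NUMBER_PATTERN.findall(proof))

    flags = []
    # Naive sentence split on terminal punctuation followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    for sentence in sentences:
        lowered = sentence.lower()
        for word in RISKY_WORDS:
            if re.search(rf"\b{word}\b", lowered):
                flags.append(f"absolute claim '{word}': {sentence}")
        for number in NUMBER_PATTERN.findall(sentence):
            if number not in approved_numbers:
                flags.append(f"unverified figure '{number}': {sentence}")
    return flags


# Hypothetical draft and proof, for illustration only.
draft = "Teams cut drafting time by 40%. Results are guaranteed. Join 500 customers."
proof = ["Case study: drafting time fell 40% after rollout."]
flags = flag_claims(draft, proof)
```

In this example the 40% figure passes because it matches the approved case study, while the guarantee and the unsupported customer count are both sent back for review.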

Should small businesses use copywriting AI or is it mainly for large teams?

Small businesses can benefit a lot because they often need more marketing output than they have time or headcount to produce manually. Copywriting AI can help them build email sequences, landing pages, nurturing campaigns, and social assets much faster than doing everything from scratch. The key is staying disciplined with inputs and editing, because small teams usually cannot afford the cost of sloppy messaging.

What tools fit naturally into a copywriting AI workflow?

That depends on the channel mix, but the categories are predictable. Most teams need a knowledge layer, a writing layer, a publishing layer, and a measurement layer, which is why tools like Chatbase, Replo, ManyChat, Brevo, and Buffer show up so often. If the workflow is more funnel-heavy, GoHighLevel, ClickFunnels, and Systeme.io can make more sense.

Is copywriting AI useful for freelancers and agencies?

Yes, especially when the challenge is balancing speed with quality across multiple clients. Freelancers and agencies can use it to accelerate first drafts, build clearer approval workflows, create more test variants, and document voice guidelines faster. The real advantage is not just saving time, but being able to offer more strategic service because less energy is wasted on repetitive production.

What is the smartest way to start using copywriting AI this week?

Pick one asset type and one business goal. Build a clear brief, generate a few structured variants, edit them properly, publish them, and track the result against what you were doing before. Starting narrow is what keeps the learning clean, and clean learning is what turns copywriting AI into a real advantage instead of another abandoned tool.
