Most people looking for drip campaign examples are not looking for theory. They are trying to see what a real sequence looks like after someone subscribes, starts a trial, abandons a cart, buys once, or disappears for 30 days. That is why good examples matter so much: they turn automation from an abstract idea into an actual path a customer can follow.

That path is worth getting right. Litmus found that marketers most often report email ROI between 10:1 and 36:1, Campaign Monitor says automated emails generate 320% more revenue than non-automated emails, and Ascend2 found email automation was the most-used email tactic in 2024 at 58%. In plain English, this is not a side tactic anymore. It is one of the clearest leverage points in modern marketing.

The interesting part is that the biggest wins usually do not come from dozens of complex flows. They come from a few high-intent sequences sent at the right moment. Klaviyo’s 2024 benchmark found that abandoned cart flows had the highest average revenue per recipient, at $3.65, and the highest average placed order rate, at 3.33%, while Omnisend reports that abandoned cart, welcome, and browse abandonment emails accounted for 87% of automated orders. The rest of this article focuses on those patterns, not fluff.

- Why Drip Campaign Examples Matter

- The Drip Campaign Framework at a Glance

- Welcome, Lead Nurture, and Onboarding Drip Campaign Examples

- Ecommerce Drip Campaign Examples That Recover Revenue

- SaaS and B2B Drip Campaign Examples for Activation and Retention

- Core Components, Professional Implementation, and FAQ

Why Drip Campaign Examples Matter

A drip campaign is simply a sequence that goes out because time passed or behavior happened. Brevo defines drip marketing as emails sent at certain intervals or after predefined user actions, and Mailchimp explains that automation triggers can come from actions like joining a list, buying, filling out a form, or even inaction like abandoning a cart. That trigger-first logic is what separates a useful sequence from a random batch of newsletters.

This is also why examples beat templates. A template gives you a layout, but a real example shows the trigger, the order of messages, the promise in the first email, the proof in the second, and the objection handling that closes the loop. That structure matters because Klaviyo’s 2025 benchmarks put average welcome email open rates at 51%, with top-performing welcome emails reaching click rates of 15% and placed order rates near 10%, which means the earliest emails in a relationship carry outsized weight.

Real brands keep proving the point. Mailchimp’s Blink case study says automated welcome emails became a cornerstone of a strategy that fueled sales of more than 400,000 units, and Klaviyo documented Callie’s Hot Little Biscuit growing flows revenue 157.8% year over year after launching loyalty automations. The lesson is not to copy those brands line by line. The lesson is that timing, relevance, and sequence design beat generic “just checking in” emails almost every time.

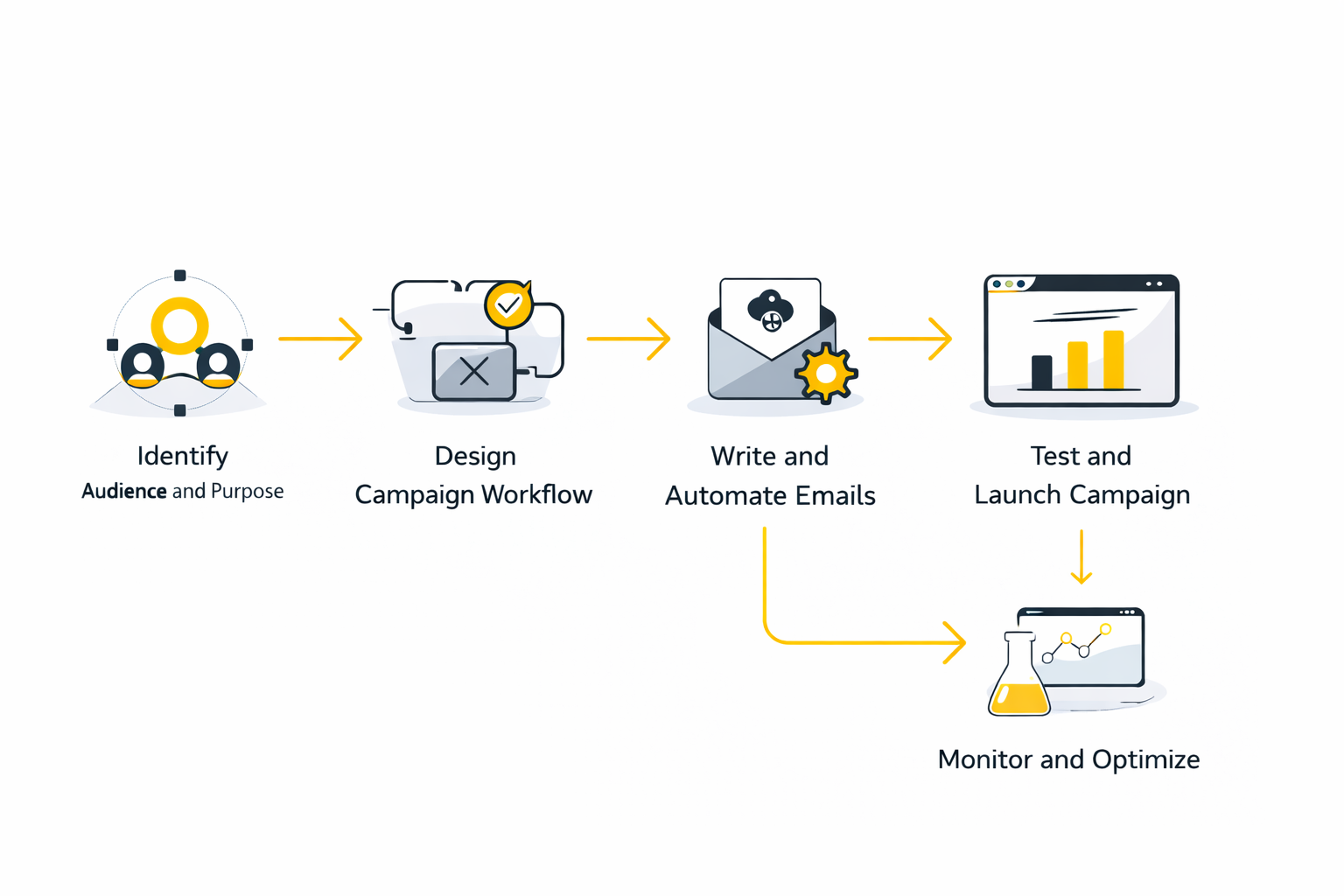

The Drip Campaign Framework at a Glance

Across almost every strong set of drip campaign examples, the framework stays surprisingly consistent. You start with a trigger, narrow the audience, decide the one job the sequence needs to do, and build each email so it moves the reader one step closer to that outcome. Customer.io’s lifecycle framework reflects the same idea: it frames lifecycle emails around moments that improve product experience, build trust, and drive growth, rather than around whatever message the brand happens to feel like sending that week.

- Trigger: A signup, cart abandonment, trial start, purchase, inactivity window, or product usage event.

- Audience: A clearly defined segment such as new leads, first-time buyers, active trial users, high-intent browsers, or at-risk customers.

- Sequence: A set order of emails that usually moves from orientation to value to proof to action.

- Destination: A landing page, product page, help doc, or booking page that matches the promise made in the email.

- Success Metric: Not just opens, but clicks, replies, booked calls, purchases, placed order rate, revenue per recipient, or reactivation.
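One way to keep those five components honest is to write each sequence down as a small record before building it in any tool. The sketch below is a minimal illustration, assuming a hypothetical `DripSequence` type and made-up field values; it is not any platform's API. It simply refuses to call a sequence ready until every component is filled in.

```python
from dataclasses import dataclass, field


@dataclass
class DripSequence:
    """A drip campaign described by the five framework components (hypothetical model)."""
    trigger: str                  # event that enrolls someone, e.g. "signup"
    audience: str                 # the segment the sequence is written for
    emails: list[str] = field(default_factory=list)  # ordered steps in the sequence
    destination: str = ""         # page the emails should point to
    success_metric: str = ""      # the outcome the sequence is judged on

    def is_complete(self) -> bool:
        # Ready to build only when no component is missing or empty.
        return all([self.trigger, self.audience, self.emails,
                    self.destination, self.success_metric])


# Illustrative welcome series following the orientation -> value -> proof -> action order.
welcome = DripSequence(
    trigger="signup",
    audience="new leads",
    emails=["orientation", "value", "proof", "action"],
    destination="/start-here",
    success_metric="first purchase rate",
)
print(welcome.is_complete())
```

Writing the success metric into the same record as the trigger makes it harder to later judge the flow on opens alone.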

For most businesses, the highest-leverage starting set is not complicated. It is welcome, onboarding, abandoned cart, post-purchase, browse abandonment, and win-back, a set that shows up repeatedly in Mailchimp’s automation guide, Customer.io’s lifecycle email framework, and Omnisend’s current benchmark roundup. Once those are live, you are no longer guessing where automation belongs in the customer journey. You are mapping it to the moments that already predict action.

Execution still matters, though, because strategy without a workable stack dies in a planning doc. Teams that want a simpler place to start usually look at Brevo; agencies and service businesses that need CRM plus follow-up in one stack often evaluate GoHighLevel; and ecommerce brands that care about matching email promises with fast, branded landing pages often explore Replo. That is the lens the rest of this article uses: not random screenshots, but drip campaign examples tied to a trigger, a sequence, and a business outcome.

What the Data Actually Shows

Good drip campaign examples are helpful, but the numbers tell you which ones deserve real attention. The gap between a decent sequence and a serious revenue driver is usually not subtle. Campaign Monitor says automated emails generate 320% more revenue than non-automated emails, Klaviyo says flows can generate up to 30x more revenue per recipient than one-off campaigns, and Omnisend’s 2025 ecommerce report found automated emails drove 37% of sales from just 2% of email volume.

Those numbers matter because they point to the same underlying truth: timing beats volume. A scheduled campaign can still work, but a triggered sequence usually wins because it arrives when the buyer has already shown intent. That is why measurement in drip campaigns should not be built around vanity metrics, but around the points where intent turns into revenue, activation, retention, or reactivation.

Why Automation Outperforms Broadcasts

The performance gap is not happening because automated emails are automatically better written. It happens because they are tied to behavior, which makes them more relevant the moment they arrive. Omnisend reports automated messages delivered 52% better open rates, 332% higher click rates, and 2361% better conversion rates than manual campaigns, while Campaign Monitor reports automated emails produce 196% higher click-through rates than standard promotional emails.

That should change how you prioritize your work. If your team is still spending most of its energy on one-off sends while core triggers are missing, the data is telling you exactly where the leak is. In practical terms, that usually means building or tightening welcome, abandoned cart, browse abandonment, onboarding, post-purchase, and win-back before obsessing over another newsletter redesign.

The Benchmark Trap Most Teams Fall Into

Benchmarks are useful, but only when you interpret them in context. Klaviyo’s 2025 benchmark report explicitly notes that revenue per recipient (RPR) becomes less useful as a comparison metric when your product prices differ from the average in your industry. A premium brand and a low-ticket brand should not look at the same RPR number and draw the same conclusion.

The smarter move is to compare each flow against its real job. A welcome series should be judged by how many subscribers become first-time buyers or qualified leads. An onboarding flow should be judged by how quickly users reach a first meaningful action. A post-purchase flow should be judged by repeat purchase rate, review rate, referral actions, or product adoption, not just immediate next-day revenue.

Which Numbers Matter for Which Sequence

Abandoned cart is the clearest example of a flow that deserves aggressive attention. Klaviyo’s 2025 report shows abandoned cart flows have the highest average placed order rate of all core ecommerce flows, and its 2025 automation guide puts average abandoned cart revenue per recipient at $3.65 versus $0.11 for standard campaigns. The action this should drive is obvious: if you do not have this flow live, it deserves priority ahead of lower-intent automation ideas.

Welcome flows send a different kind of signal. Klaviyo’s benchmark report says the average welcome flow placed order rate is 1.97%, while the top 10% reach 9.89%. That gap suggests personalization in the first few emails is not a nice bonus. It is one of the fastest ways to move a new subscriber from curiosity to purchase.

Browse abandonment metrics need a different reading. Klaviyo’s report shows browse abandonment emails average a 4.74% click rate and a 0.82% placed order rate, which is still 10x the placed order rate of a standard campaign at 0.08%. The point is not that browse abandonment should outperform abandoned cart. The point is that it is a strong return-to-site flow, so you optimize it for relevance and product discovery rather than expecting immediate checkout behavior.
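The comparisons in the last few paragraphs are plain ratios, and it can help to make that arithmetic explicit when you repeat it with your own numbers. The sketch below only reuses the figures quoted above; `lift` is an illustrative helper name, not a reporting-tool function.

```python
def lift(a: float, b: float) -> float:
    """How many times larger metric a is than metric b."""
    return a / b


# Figures quoted in this section (RPR in USD, placed order rates in percent).
cart_vs_campaign_rpr = lift(3.65, 0.11)      # abandoned cart RPR vs standard campaign RPR
welcome_top_vs_avg = lift(9.89, 1.97)        # top-10% vs average welcome placed order rate
browse_vs_campaign = lift(0.82, 0.08)        # browse abandonment vs campaign placed order rate

print(round(cart_vs_campaign_rpr))  # 33
print(round(welcome_top_vs_avg))    # 5
print(round(browse_vs_campaign))    # 10
```

Because each ratio divides like with like, it stays meaningful even when your absolute prices differ from the benchmark, which is the same caveat Klaviyo raises about comparing RPR across industries.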

Post-purchase data is where people often misread performance entirely. Klaviyo’s 2025 report says post-purchase flows have the highest average open rate at 59.77% but the lowest average placed order rate at 0.48%. That does not mean the flow is weak. It means the flow is probably being judged through the wrong lens, because post-purchase sequences usually exist to reinforce satisfaction, reduce returns, generate reviews, teach product usage, and set up the second purchase later.

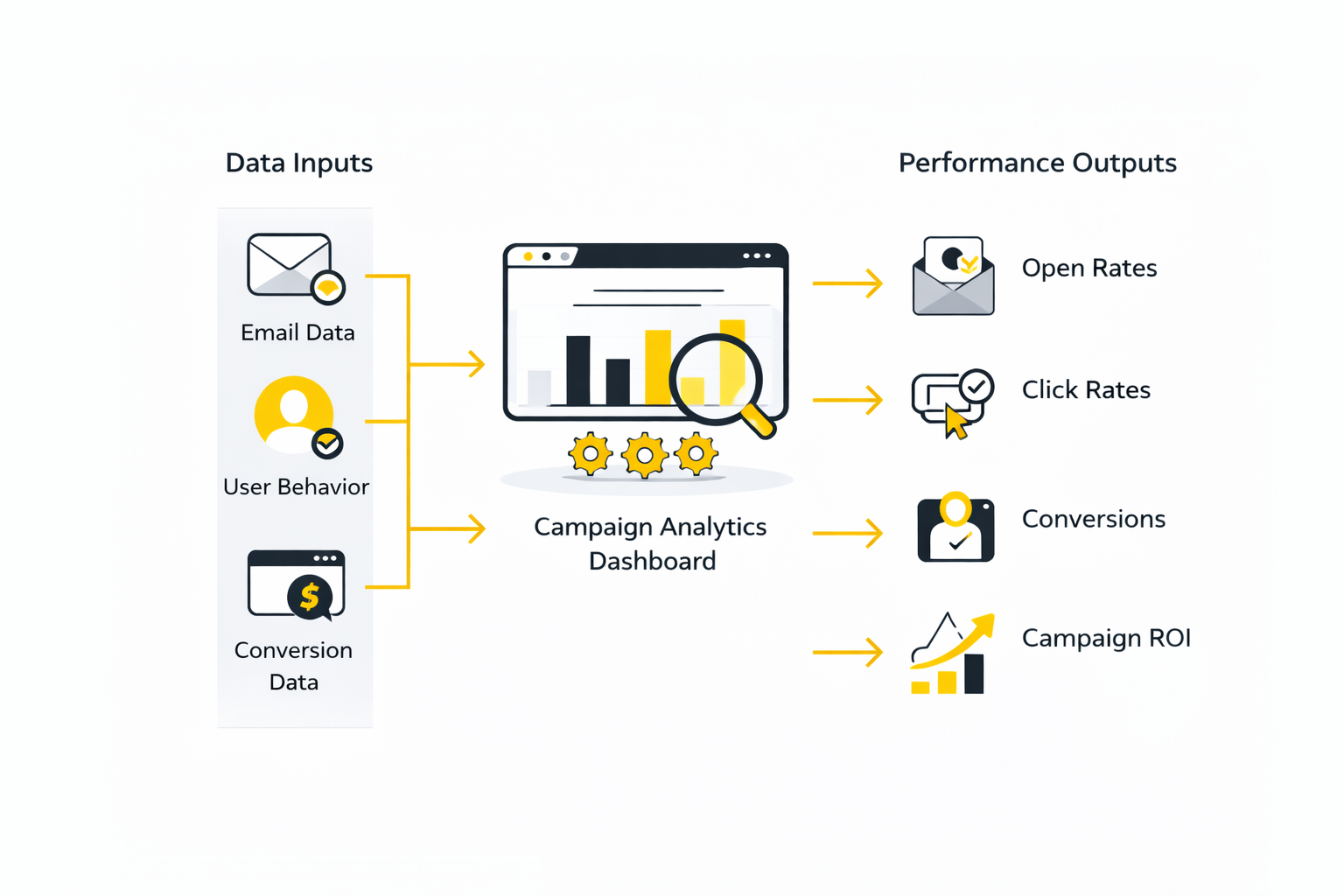

Build a Dashboard That Matches Reality

The cleanest analytics system for drip campaigns has three layers. The first tells you whether the message was delivered safely, the second tells you whether anyone engaged with it, and the third tells you whether that engagement changed business behavior. Once you build reporting this way, the numbers stop feeling random and start becoming directional.

- Delivery health: Track deliverability, bounce rate, unsubscribe rate, and spam complaints first. Google’s sender guidance says senders should keep spam rates below 0.1% and prevent them from reaching 0.3% or higher, which means a sequence with strong revenue but rising complaint rates is not actually healthy. It is borrowing results from the future.

- Engagement quality: Track clicks, click-through rate, click-to-open rate when useful, replies where relevant, and page visits after the click. Open rates still have directional value for subject lines and inbox placement, but they can no longer carry the whole story. Twilio recommends using click activity as the primary engagement indicator because open data has become less reliable, and Litmus says marketers are shifting away from open-rate-led reporting toward CTR, conversion rate, unsubscribe rate, revenue per email, and ROI.

- Business outcome: This is where reporting becomes useful to leadership. Customer.io’s 2026 lifecycle measurement framework says teams should report activation, retention, and expansion outcomes rather than stopping at opens and clicks. For ecommerce, that usually means order rate, revenue per recipient, repeat purchase rate, and reactivation. For SaaS or B2B, it usually means time to value, feature adoption, demo bookings, trial-to-paid conversion, churn reduction, and upgrades.
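The three layers above can be expressed as one small report per flow, with the delivery layer acting as a guardrail. The sketch below is a minimal illustration that assumes you can export delivered, complaint, click, and order counts per flow. It approximates spam rate as complaints divided by delivered messages, which is an assumption: Google Postmaster Tools computes its own figure. The function names are hypothetical.

```python
def spam_rate_status(complaints: int, delivered: int) -> str:
    """Classify a complaint rate against Google's guidance:
    stay below 0.1%, and never reach 0.3%."""
    if delivered == 0:
        return "no data"
    rate = complaints / delivered
    if rate >= 0.003:
        return "critical"   # at or above 0.3%
    if rate >= 0.001:
        return "warning"    # above the recommended 0.1% ceiling
    return "healthy"


def flow_report(delivered: int, complaints: int, clicks: int, orders: int) -> dict:
    """Layer 1: delivery health. Layer 2: engagement. Layer 3: business outcome."""
    if delivered == 0:
        return {"delivery": "no data", "engagement": {}, "outcome": {}}
    return {
        "delivery": spam_rate_status(complaints, delivered),
        "engagement": {"click_rate": clicks / delivered},
        "outcome": {"placed_order_rate": orders / delivered},
    }


print(flow_report(delivered=10_000, complaints=5, clicks=300, orders=40))
```

Putting the delivery status first in the report is deliberate: a flow whose outcome layer looks strong while its delivery layer says "warning" should be read as unhealthy.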

What the Numbers Should Make You Do

Data is only valuable if it changes the next decision. If a flow gets strong opens but weak clicks, the problem is usually the offer, CTA, or message-to-page match. If clicks are healthy but conversions are soft, the bottleneck often sits on the landing page, checkout, or activation experience rather than in the email itself.

If a sequence converts well but complaints or unsubscribes rise, that is your warning sign that the targeting or cadence is too aggressive. This is exactly why a useful dashboard needs both performance metrics and guardrails. Teams that want all of this in one place often prefer a CRM-centered setup like GoHighLevel or a sales-linked reporting layer with Copper, because attribution gets easier when campaign data and customer state live closer together.
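The diagnostic rules in the two paragraphs above can be sketched as a comparison between a flow and its own recent baseline. This is an illustration only: the `FlowStats` fields, the 20% `tolerance`, and the use of unsubscribe rate as the single guardrail signal are all hypothetical choices, not benchmarks from the sources cited here.

```python
from dataclasses import dataclass


@dataclass
class FlowStats:
    open_rate: float
    click_rate: float
    conversion_rate: float
    unsubscribe_rate: float


def diagnose(current: FlowStats, baseline: FlowStats, tolerance: float = 0.2) -> list[str]:
    """Flag likely bottlenecks by comparing a flow to its own baseline.
    `tolerance` is a hypothetical fraction of baseline a metric must move to count."""
    def weak(cur: float, base: float) -> bool:
        return cur < base * (1 - tolerance)

    def rising(cur: float, base: float) -> bool:
        return cur > base * (1 + tolerance)

    findings = []
    # Opens holding up but clicks falling: the offer, CTA, or page match is the suspect.
    if not weak(current.open_rate, baseline.open_rate) and weak(current.click_rate, baseline.click_rate):
        findings.append("check offer, CTA, or message-to-page match")
    # Clicks holding up but conversions falling: look past the email.
    if not weak(current.click_rate, baseline.click_rate) and weak(current.conversion_rate, baseline.conversion_rate):
        findings.append("check landing page, checkout, or activation step")
    # Conversions holding up but unsubscribes rising: the guardrail is tripping.
    if not weak(current.conversion_rate, baseline.conversion_rate) and rising(current.unsubscribe_rate, baseline.unsubscribe_rate):
        findings.append("targeting or cadence may be too aggressive")
    return findings


baseline = FlowStats(open_rate=0.50, click_rate=0.05, conversion_rate=0.010, unsubscribe_rate=0.002)
print(diagnose(FlowStats(0.50, 0.03, 0.010, 0.002), baseline))
```

Comparing against the flow's own history rather than an industry average sidesteps the benchmark trap described earlier.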

The most important action, though, is simpler than most teams expect. Start by identifying which single flow creates the largest gap between current performance and likely upside. The benchmark data already gives the answer for most brands: abandoned cart, welcome, and browse abandonment are usually the first places where measurement turns directly into money.
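Finding the flow with the largest gap is simple arithmetic once you pick a target for each flow. The sketch below assumes hypothetical monthly recipient counts and current rates, and uses as targets the average placed order rates quoted earlier in this article (3.33% abandoned cart, 1.97% welcome, 0.82% browse abandonment). It ranks by placed order rate rather than RPR because, as Klaviyo notes, RPR comparisons break down when your prices differ from the industry average.

```python
def missed_orders(recipients: int, current_rate: float, target_rate: float) -> float:
    """Orders left on the table if the flow reached its target placed order rate."""
    return recipients * max(0.0, target_rate - current_rate)


# Hypothetical monthly numbers: (recipients, current rate, target rate), rates as fractions.
flows = {
    "abandoned_cart": (4_000, 0.020, 0.0333),
    "welcome": (10_000, 0.010, 0.0197),
    "browse_abandonment": (8_000, 0.006, 0.0082),
}

ranked = sorted(flows, key=lambda name: missed_orders(*flows[name]), reverse=True)
print(ranked)  # ['welcome', 'abandoned_cart', 'browse_abandonment']
```

With these made-up inputs the welcome flow ranks first even though abandoned cart has the higher benchmark, because volume multiplies the gap. That is the point of measuring the gap rather than the rate alone.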

Scaling Drip Campaigns Without Losing Relevance

The next challenge after finding a few winning drip campaign examples is scale. What works for one welcome flow or one abandoned cart sequence can break fast when you add more products, more audiences, more regions, and more channels. That is why scaling is not mainly a copy problem. It is a systems problem, and the brands that handle it well usually rely on cleaner customer data, tighter segmentation, and fewer unnecessary branches. Klaviyo’s 2025 consumer research found 74% of consumers expect more personalized experiences, so the pressure is not just to automate more. It is to stay relevant while doing it.

The practical move is to scale by lifecycle stage, not by creative enthusiasm. Add depth only where a behavior truly changes the message, such as first purchase versus repeat purchase, trial active versus trial idle, or high-value cart versus low-value cart. That logic aligns with Klaviyo’s segmentation guidance, which points brands toward strategic behavioral segments instead of broad list blasts, and it is one of the clearest differences between automation that compounds and automation that becomes a maintenance headache.
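Scaling by lifecycle stage can be sketched as a routing function that branches only on the three behavioral distinctions named above. The event names, profile fields, sequence names, and the `HIGH_VALUE_CART` threshold are all hypothetical placeholders; your platform's event schema will differ.

```python
HIGH_VALUE_CART = 150.0  # hypothetical threshold, in your store's currency


def pick_sequence(event: str, profile: dict) -> str:
    """Branch only where the behavior changes what the message should say."""
    if event == "purchase":
        # Assumes profile["orders"] already includes the purchase that just happened.
        return "first_purchase_followup" if profile.get("orders", 0) <= 1 else "repeat_purchase_followup"
    if event == "trial_day":
        return "trial_active_tips" if profile.get("used_key_feature") else "trial_idle_nudge"
    if event == "cart_abandoned":
        if profile.get("cart_value", 0.0) >= HIGH_VALUE_CART:
            return "high_value_cart"
        return "standard_cart"
    return "no_sequence"  # events that do not change the message get no extra branch


print(pick_sequence("purchase", {"orders": 3}))
print(pick_sequence("cart_abandoned", {"cart_value": 220.0}))
```

Each new branch here requires a reliable data point behind it (order count, feature usage, cart value), which is the tradeoff the next section describes.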

The Real Tradeoff: More Branches or Better Data

A lot of teams assume sophistication means more branches. In reality, sophistication often means better data flowing into fewer, sharper sequences. Customer.io’s lifecycle research says disconnected systems are one of the biggest blockers to effective lifecycle marketing, which is exactly why bloated automation maps often underperform cleaner systems with stronger event tracking.

This tradeoff matters because every extra branch adds creative work, QA work, reporting complexity, and more chances for a user to land in the wrong path. The better question is not “What else can we automate?” It is “Which extra data point would let this sequence make a better decision?” In many cases, one reliable event like last product viewed, plan type, or purchase frequency is worth more than five decorative conditional paths.

That is also why stack decisions start mattering more at this level. If your automation, CRM, and attribution are split across too many tools, the campaigns get harder to trust and harder to optimize. Teams that want a tighter command center often consolidate into platforms like GoHighLevel or connect their segmentation and customer record more closely before adding more campaign complexity.

The Risks That Quietly Kill Performance

Most automation problems do not show up as dramatic failures. They show up as slow decay. Open rates flatten, click quality weakens, unsubscribe rates creep up, and the team keeps sending because the dashboard still looks “fine” at first glance.

Deliverability is the first silent risk. Google requires bulk senders, meaning those sending more than 5,000 messages a day to Gmail accounts, to support one-click unsubscribe for marketing and subscribed messages. Yahoo sets similar one-click unsubscribe expectations for bulk senders and asks that requests be processed quickly. Google also says spam rates above 0.3% keep bulk senders ineligible for mitigation. That means a “successful” sequence that pushes complaints too high is not successful. It is quietly training mailbox providers to trust you less.
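One-click unsubscribe is implemented with two message headers defined in RFC 2369 and RFC 8058: `List-Unsubscribe` carrying an HTTPS URL, and `List-Unsubscribe-Post: List-Unsubscribe=One-Click`. Most email platforms add these for you; the sketch below only shows what they look like if you assemble messages yourself with Python's standard `email` package. The addresses, subject, and URL are hypothetical, and RFC 8058 also expects the message to carry a valid DKIM signature covering these headers, which this sketch does not add.

```python
from email.message import EmailMessage


def build_marketing_email(to_addr: str, unsubscribe_url: str) -> EmailMessage:
    """Build a marketing message that advertises RFC 8058 one-click unsubscribe."""
    if not unsubscribe_url.startswith("https://"):
        raise ValueError("one-click unsubscribe requires an HTTPS URL")
    msg = EmailMessage()
    msg["From"] = "news@example.com"      # hypothetical sender
    msg["To"] = to_addr
    msg["Subject"] = "Still thinking it over?"
    # RFC 2369 header pointing at the unsubscribe endpoint.
    msg["List-Unsubscribe"] = f"<{unsubscribe_url}>"
    # RFC 8058 marker: the mailbox provider will POST to the URL above, no login or confirmation page.
    msg["List-Unsubscribe-Post"] = "List-Unsubscribe=One-Click"
    msg.set_content("Your cart is saved whenever you are ready.")
    return msg


msg = build_marketing_email("shopper@example.com", "https://example.com/unsubscribe/abc123")
print(msg["List-Unsubscribe"])
```

The endpoint behind that URL has to honor the POST on its own; a page that asks the subscriber to confirm again does not meet the one-click requirement.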

The second risk is measurement distortion. Open-rate-led thinking has become much less dependable because mailbox privacy protections changed how opens are recorded. Twilio’s Apple Mail Privacy Protection guidance recommends getting comfortable with clicks and identifying machine opens where possible, and Twilio’s deliverability guidance says inbox placement, bounce rate, complaint rate, and click activity are better health signals than opens alone. If your team still treats opens as the main signal for advanced drip campaigns, you are steering with a blurry windshield.

The third risk is consent confusion. Marketing messages and transactional messages are not the same thing, and treating them as interchangeable can create both trust and compliance problems. Customer.io’s transactional messaging docs distinguish transactional messages like receipts, password resets, and shipping updates from marketing messages. Marketing email carries stricter obligations: CAN-SPAM requires a working opt-out in every commercial message, and in the EU, marketing email generally requires prior opt-in consent under GDPR and related ePrivacy rules. That line matters more as your automation gets more advanced, because it is easy for “helpful” lifecycle messaging to drift into territory the subscriber did not actually agree to receive.
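A practical way to hold that line is a single send gate that every automated message passes through. The sketch below is simplified and assumes a hypothetical `Contact` record with `marketing_opt_in` and `unsubscribed` flags synced from your CRM; the exact rules depend on jurisdiction and on how your platform classifies messages.

```python
from dataclasses import dataclass


@dataclass
class Contact:
    email: str
    marketing_opt_in: bool = False   # hypothetical consent flag
    unsubscribed: bool = False


# Message types treated as transactional in this sketch (examples from the text above).
TRANSACTIONAL = {"receipt", "password_reset", "shipping_update"}


def may_send(contact: Contact, message_type: str) -> bool:
    """Transactional types always pass; anything else is treated as marketing."""
    if message_type in TRANSACTIONAL:
        return True
    return contact.marketing_opt_in and not contact.unsubscribed


buyer = Contact("buyer@example.com")
print(may_send(buyer, "shipping_update"))   # True: transactional
print(may_send(buyer, "win_back_offer"))    # False: marketing without opt-in
```

Defaulting every unlisted message type to marketing is the conservative choice: a new "helpful" lifecycle email has to earn its way onto the transactional list instead of slipping past the consent check.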

Where AI Helps and Where It Hurts

AI can make drip campaigns faster to build, but speed is not the same thing as judgment. The smart use of AI is usually in support roles: summarizing behavioral patterns, suggesting segments, drafting variants, flagging anomalies, or helping personalize at scale. The wrong use is letting it generate generic sequences that sound smooth but ignore the real customer moment.

This matters because buyer expectations are clearly moving toward more relevant communication. Klaviyo’s 2025 consumer research says 74% of consumers expect more personalized experiences, but that does not mean people want robotic hyperactivity. It means they want messaging that reflects what just happened, what they care about, and what would help next.

The practical rule is simple. Let AI help with pattern recognition and production speed, but keep humans in charge of trigger logic, offer hierarchy, suppression rules, and brand judgment. If you want conversational follow-up layered onto your email flows, tools like Chatbase can be useful for handling intent after the click, and if your sequence naturally continues in social or messaging apps, ManyChat can make the handoff feel native instead of forced. The win is not “using AI.” The win is reducing friction without losing clarity.

How Experts Actually Improve Drip Campaigns Over Time

At the expert level, optimization becomes less about finding one magic subject line and more about building a repeatable testing habit. Mailchimp’s A/B testing guidance recommends testing one variable at a time, which sounds basic, but it becomes more important as sequences get more complex. When teams change timing, content, CTA, and segmentation all at once, they usually learn nothing useful.
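"One variable at a time" can be enforced mechanically rather than remembered. The sketch below assumes a hypothetical welcome-email config: it checks that the control and variant differ in exactly one field, then splits subscribers deterministically by hashing their ID so each person always sees the same version across the sequence. Hash-based assignment is one common approach, not something Mailchimp's guidance specifically prescribes.

```python
import hashlib

CONTROL = {"subject": "Welcome to the shop", "send_delay_hours": 24, "cta": "Browse bestsellers"}
VARIANT = {**CONTROL, "cta": "Take the 30-second quiz"}  # only the CTA changes


def changed_fields(a: dict, b: dict) -> list[str]:
    """Names of fields whose values differ between two configs."""
    return sorted(k for k in a.keys() | b.keys() if a.get(k) != b.get(k))


# Refuse to run a test that changes more than one thing at once.
assert len(changed_fields(CONTROL, VARIANT)) == 1, "A/B variants should differ in exactly one field"


def assign(subscriber_id: str) -> dict:
    """Deterministic 50/50 split: the same ID always maps to the same version."""
    digest = hashlib.sha256(subscriber_id.encode("utf-8")).digest()
    return VARIANT if digest[0] % 2 else CONTROL


print(changed_fields(CONTROL, VARIANT))  # ['cta']
```

If a test result is inconclusive, the guard also makes the next test honest: changing the CTA and the send delay together would trip the assertion.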

The highest-value tests are usually tied to the biggest decision point in the sequence. In a welcome flow, that might be whether the second email should push proof or product discovery. In an onboarding flow, it might be whether a checklist outperforms a tutorial. In a win-back flow, it might be whether category-specific recommendations beat a blanket offer.

It also helps to stop thinking of optimization as email-only work. Some of the best gains come from the page after the click, the form before the signup, or the booking path after the nurture sequence. That is why brands often improve results by tightening the whole path with tools like Replo for better message-matched pages or Cal.com when the right next step is to get a qualified lead onto a calendar instead of asking them to read another email.

The Best Advanced Strategy Is Usually Restraint

This is the part most teams do not expect. The most advanced drip campaign strategy is often restraint, not expansion. Fewer sequences, cleaner entry rules, sharper suppression logic, and better reporting usually beat sprawling automation maps full of overlapping messages and internal confusion.

That is especially true because poor execution has side effects beyond weak conversions. Twilio notes that broken or inconsistent rendering can lead to worse engagement and more negative signals such as complaints or unsubscribes, which means scaling carelessly can hurt both performance and sender reputation. Advanced does not mean complicated. It means intentional.

The best drip campaign examples all point back to the same principle: send the right message when the customer has earned it, make the next step obvious, and remove anything that does not help that move happen. That sets up the final part well, because the remaining questions are usually the practical ones people ask once they are ready to build or fix their own sequences.